
Maciej Besta 1, Nils Blach 1 †, Ales Kubicek 1, Robert Gerstenberger 1,

Michał Podstawski 2, Lukas Gianinazzi 1, Joanna Gajda 3, Tomasz Lehmann 3,

Hubert Niewiadomski 3, Piotr Nyczyk 3, Torsten Hoefler 1

† Equal contribution

Abstract

We introduce Graph of Thoughts (GoT): a framework that advances prompting capabilities in large language models (LLMs) beyond those offered by paradigms such as Chain-of-Thought or Tree of Thoughts (ToT). The key idea and primary advantage of GoT is the ability to model the information generated by an LLM as an arbitrary graph, where units of information (“LLM thoughts”) are vertices, and edges correspond to dependencies between these vertices. This approach enables combining arbitrary LLM thoughts into synergistic outcomes, distilling the essence of whole networks of thoughts, or enhancing thoughts using feedback loops. We illustrate that GoT offers advantages over the state of the art on different tasks, for example increasing the quality of sorting by 62% over ToT, while simultaneously reducing costs by 31%. We ensure that GoT is extensible with new thought transformations and thus can be used to spearhead new prompting schemes. This work brings LLM reasoning closer to human thinking or brain mechanisms such as recurrence, both of which form complex networks.

Website & code: https://github.com/spcl/graph-of-thoughts

1 Introduction

Large language models (LLMs) are taking over the world of AI. Recent years saw a rapid development of models primarily based on the decoder-only Transformer variant 1, such as GPT 2 3 4 5, PaLM 6, or LLaMA 7.

Prompt engineering is a resource-efficient approach for solving different LLM tasks. In brief, one includes the task description within the input sent to an LLM. If this description is appropriately formulated, the LLM solves the task using its autoregressive token-based mechanism for generating text. Such prompts may contain example tasks with solutions (few-shot prompting, also referred to as in-context learning (ICL)), or even no example tasks at all (zero-shot prompting). In recent years it was shown that this mechanism can be used to solve a broad set of tasks that involve mathematical, commonsense, or symbolic reasoning.

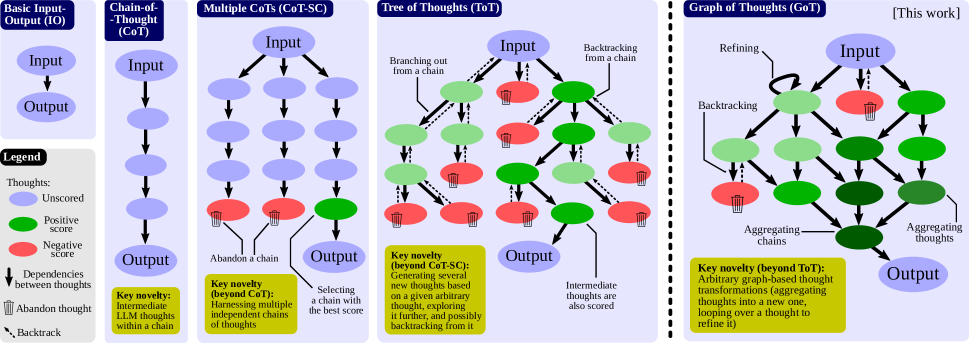

Chain-of-Thought (CoT) 8 is an approach for prompting, in which one includes the intermediate steps of reasoning within the prompt (intermediate “thoughts”), besides the task input/output. CoT was shown to significantly improve the capability of LLMs to solve problems without resorting to any model updates. One major improvement over CoT, Self-Consistency with CoT (CoT-SC) 9, is a scheme where multiple CoTs are generated, and then the best one is selected as the outcome. More recently, CoT and CoT-SC were extended with Tree of Thoughts (ToT) 10 11 12, which models the LLM reasoning process with a tree. This facilitates using different paths of thoughts, and offers novel capabilities such as backtracking from non-promising outcomes. Unfortunately, the ToT approaches still fundamentally limit the reasoning abilities within a prompt by imposing the rigid tree structure on the thought process.

In this work, we argue that fundamentally more powerful prompting can be achieved by enabling LLM thoughts to form an arbitrary graph structure. This is motivated by numerous phenomena such as human reasoning, brain structure, or algorithmic execution. When working on a novel idea, a human would not only follow a chain of thoughts (as in CoT) or try different separate ones (as in ToT), but would actually form a more complex network of thoughts. For example, one could explore a certain chain of reasoning, backtrack and start a new one, then realize that a certain idea from the previous chain could be combined with the currently explored one, and merge them both into a new solution, taking advantage of their strengths and eliminating their weaknesses. Similarly, brains form complex networks, with graph-like patterns such as recurrence 13. Executing algorithms also exposes networked patterns, often represented by Directed Acyclic Graphs. The corresponding graph-enabled transformations bring a promise of more powerful prompting when applied to LLM thoughts, but they are not naturally expressible with CoT or ToT.

We observe that these (and many other) thought transformations can be naturally enabled when modeling the reasoning process of an LLM as a graph. For this, we propose Graph of Thoughts (GoT), an approach that enhances LLMs’ capabilities through networked reasoning (contribution #1). In GoT, an LLM thought is modeled as a vertex, while an edge is a dependency between such thoughts. Using GoT, one can aggregate arbitrary thoughts by constructing vertices that have more than one incoming edge. Overall, the graph abstraction harnessed by GoT seamlessly generalizes CoT and ToT to more complex thought patterns, without resorting to any model updates.

Yet, putting GoT into practice requires solving several design challenges. For example, what is the best graph structure for different tasks? How to best aggregate thoughts to maximize accuracy and minimize cost? To answer these and many other questions, we carefully design a modular architecture for implementing GoT (contribution #2), coming with two design highlights. First, we enable fine-grained control over individual thoughts. This enables us to fully control the ongoing conversation with the LLM, and apply advanced thought transformations, such as combining the most promising thoughts from the ongoing reasoning into a new one. Second, we ensure that our architecture can be seamlessly extended with novel thought transformations, patterns of reasoning (i.e., graphs of thoughts), and LLMs. This enables rapid prototyping of novel prompting ideas using GoT, while experimenting with different models such as GPT-3.5, GPT-4, or Llama-2 14.

We illustrate several use cases for GoT (sorting, keyword counting for summaries, set operations, document merging) and we detail how to implement them using the graph-based paradigm (contribution #3). We evaluate GoT and show its advantages over the state of the art (contribution #4). Overall, we observe that GoT is particularly well-suited for tasks that can be naturally decomposed into smaller subtasks that are solved individually and then merged for a final solution. Here, GoT outperforms other schemes, for example improving upon CoT and ToT by, respectively, 70% and 62%, in terms of the quality of sorting, while simultaneously reducing costs by 31% over ToT.

We qualitatively compare GoT to other prompting schemes in Table 1. GoT is the only one to enable arbitrary graph-based thought transformations within a prompt, such as aggregation, embracing all previously proposed schemes.

| Scheme | Sc? | Mc? | Tr? | Ag? |
|---|---|---|---|---|
| Chain-of-Thought (CoT) 8 | ✓ | ✗ | ✗ | ✗ |
| Self-Consistency with CoT 9 | ✓ | ✓ | ✗ | ✗ |
| Thought decomposition 12 | ✓ | ✓ | ◐ | ✗ |
| Tree-of-Thought (ToT) 10 | ✓ | ✓ | ✓ | ✗ |
| Tree of Thoughts (ToT) 11 | ✓ | ✓ | ✓ | ✗ |
| Graph of Thoughts (GoT) | ✓ | ✓ | ✓ | ✓ |

Table 1: Comparison of prompting schemes, with respect to the supported transformations of thoughts. “Sc?”: single chain of thoughts? “Mc?”: multiple chains of thoughts? “Tr?”: tree of thoughts? “Ag?”: arbitrary graph of thoughts? “✓”: full support, “◐”: partial support, “✗”: no support.

Finally, we propose a new metric for evaluating a prompting strategy, the volume of a thought (contribution #5). With this metric, we aim to understand better the differences between prompting schemes. For a given thought $v$, the volume of $v$ is the number of LLM thoughts from which one can reach $v$ using directed edges. Intuitively, these are all the LLM thoughts that have had the potential to contribute to $v$. We show that GoT, by incorporating thought transformations such as aggregation, enables thoughts to have fundamentally larger volumes than other schemes.

Figure 1: Comparison of Graph of Thoughts (GoT) to other prompting strategies.

2 Background & Notation

We first outline background concepts and notation.

2.1 Language Models & In-Context Learning

The conversation with the LLM consists of user messages (prompts) and LLM replies (thoughts). We follow the established notation 11 and we denote a pre-trained language model (LM) with parameters $\theta$ as $p_\theta$. Lowercase letters such as $x, y, z, \dots$ indicate LLM thoughts. We purposefully do not prescribe what a single “thought” is, and instead make it use-case specific. Hence, a single thought can be a paragraph (e.g., in article summary), a document (e.g., in document generation), a block of code (e.g., in code debugging or optimization), and so on.

We next describe specific prompting approaches.

Input-Output (IO)

Input-Output (IO) prompting is a straightforward approach, in which we use an LLM to turn an input sequence $x$ into the output $y$ directly, without any intermediate thoughts.

Chain-of-Thought (CoT)

Second, in Chain-of-Thought (CoT), one introduces intermediate thoughts $a_1, a_2, \dots$ between $x$ and $y$. This strategy was shown to significantly enhance various LM tasks over the plain IO baseline, such as mathematical puzzles 8 or general mathematical reasoning 15.

Multiple CoTs

Third, one can generalize CoT into multiple CoTs by generating several (independent) CoTs, and returning the one with the best output (according to some prescribed scoring metric). It was introduced by Wang et al. in the scheme called Self-Consistency with CoT (CoT-SC) 9. This approach enhances CoT because it offers an opportunity to explore different reasoning paths. However, it does not offer “local exploration” within a path, such as backtracking.

Tree of Thoughts (ToT)

Finally, the Tree of Thoughts (ToT) scheme was introduced independently by Yao 11 and Long 10 (where it is referred to as Tree-of-Thought); it was used implicitly to a certain degree by other schemes such as thought decomposition 12. It enhances CoT-SC by modeling the process of reasoning as a tree of thoughts. A single tree node represents a partial solution. Based on a given node, the thought generator constructs a given number of new nodes. Then, the state evaluator generates scores for each such new node. Depending on the use case, the evaluation could be conducted using an LLM itself, or it can harness human scores. Finally, the schedule of extending the tree is dictated by the utilized search algorithm (for example BFS or DFS).
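The interplay of thought generator, state evaluator, and search schedule can be illustrated with a minimal breadth-first sketch. The names `tree_of_thoughts_bfs`, `generate`, `evaluate`, and `beam` are illustrative assumptions, and plain Python callables stand in for the LLM-backed generator and evaluator; this is not the implementation from the cited works.

```python
def tree_of_thoughts_bfs(root, generate, evaluate, levels, beam):
    """Expand a tree level by level, keeping only the `beam` best nodes per level."""
    frontier = [root]
    for _ in range(levels):
        # Thought generator: every kept node proposes new partial solutions.
        candidates = [child for node in frontier for child in generate(node)]
        if not candidates:
            break
        # State evaluator: score the candidates and keep the most promising ones.
        candidates.sort(key=evaluate, reverse=True)
        frontier = candidates[:beam]
    return max(frontier, key=evaluate)


# Toy task (assumption): pick three digits from {1, 2, 3} whose sum is 7.
best = tree_of_thoughts_bfs(
    root=(),
    generate=lambda state: [state + (d,) for d in (1, 2, 3)],
    evaluate=lambda state: -abs(7 - sum(state)),
    levels=3,
    beam=2,
)
print(best, sum(best))
```

Replacing the level-wise loop with a stack-based traversal would give a DFS schedule with backtracking.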

3 The GoT Framework

We now detail the GoT framework. We present it in Figure 1, and compare it to other prompting strategies.

Formally, GoT can be modeled as a tuple $(G, \mathcal{T}, \mathcal{E}, \mathcal{R})$, where $G$ is the “LLM reasoning process” (i.e., all the LLM thoughts within the context, with their relationships), $\mathcal{T}$ are the potential thought transformations, $\mathcal{E}$ is an evaluator function used to obtain scores of thoughts, and $\mathcal{R}$ is a ranking function used to select the most relevant thoughts.

3.1 Reasoning Process

We model the reasoning process as a directed graph $G = (V, E)$; $V$ is a set of vertices and $E \subseteq V \times V$ is a set of edges. $G$ is directed and thus the edges are a subset of ordered vertex pairs $E \subseteq V \times V$. A vertex contains a solution to a problem at hand (be it an initial, intermediate, or a final one). The concrete form of such a thought depends on the use case; it could be a paragraph (in writing tasks) or a sequence of numbers (in sorting). A directed edge $(t_1, t_2)$ indicates that thought $t_2$ has been constructed using $t_1$ as “direct input”, i.e., by explicitly instructing the LLM to use $t_1$ for generating $t_2$.

In certain use cases, graph nodes belong to different classes. For example, in writing tasks, some vertices model plans of writing a paragraph, while other vertices model the actual paragraphs of text. In such cases, GoT embraces a heterogeneous graph $G = (V, E, c)$ to model the LLM reasoning, where $c$ maps vertices $V$ into their respective classes $C$ (in the above case, it would be $C = \{plan, par\}$). Hence, any vertex can model different aspects of reasoning.
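A minimal sketch of such a heterogeneous graph of thoughts is given below. The class `ThoughtGraph`, its method names, and the class labels `"plan"` and `"par"` are hypothetical choices for illustration, not part of the GoT code base.

```python
from dataclasses import dataclass, field


@dataclass
class ThoughtGraph:
    """Directed graph of thoughts with a class label per vertex."""

    thoughts: dict[int, str] = field(default_factory=dict)    # vertex id -> content
    edges: set[tuple[int, int]] = field(default_factory=set)  # (source, target) pairs
    classes: dict[int, str] = field(default_factory=dict)     # vertex id -> class label

    def add_thought(self, content: str, cls: str, parents: tuple[int, ...] = ()) -> int:
        """Insert a thought built from `parents` as direct input; return its id."""
        missing = [p for p in parents if p not in self.thoughts]
        if missing:
            raise KeyError(f"unknown parent thoughts: {missing}")
        vid = len(self.thoughts)  # ids are consecutive because nothing is removed here
        self.thoughts[vid] = content
        self.classes[vid] = cls
        self.edges.update((p, vid) for p in parents)
        return vid

    def predecessors(self, vid: int) -> set[int]:
        """Thoughts that were used as direct input for `vid`."""
        return {u for (u, v) in self.edges if v == vid}


g = ThoughtGraph()
plan = g.add_thought("Outline: motivation, method, results", cls="plan")
par = g.add_thought("Paragraph on motivation ...", cls="par", parents=(plan,))
print(g.predecessors(par), g.classes[par])
```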

We associate $G$ with the LLM reasoning process. To advance this process, one applies thought transformations to $G$. An example of such a transformation is to merge best-scoring (so far) thoughts into a new one. Another example is to loop over a thought, in order to enhance it. Note that these transformations strictly extend the set of transformations available in CoT, CoT-SC, or ToT.

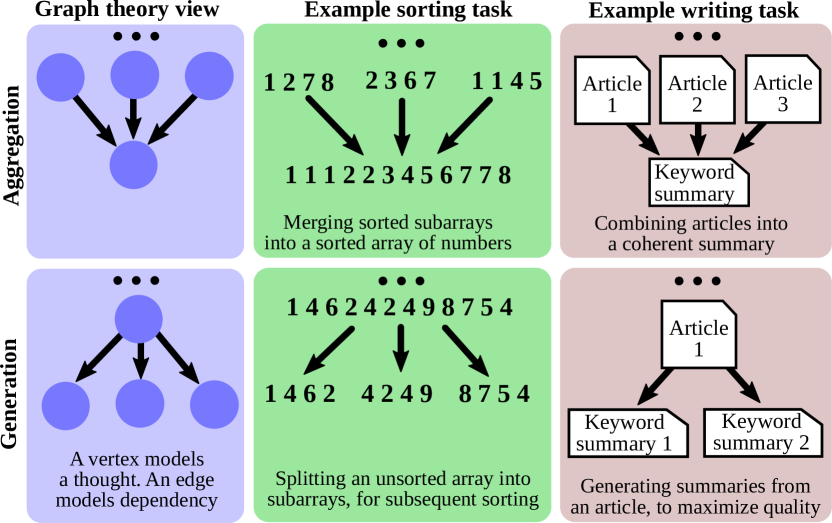

3.2 Transformations of Thoughts

GoT enables novel transformations of thoughts thanks to the graph-based model for reasoning. We refer to them as graph-enabled transformations. For example, in writing, one could combine several input articles into one coherent summary. In sorting, one could merge several sorted subarrays of numbers into a final sorted array. We illustrate examples of aggregation and generation in Figure 2.

Figure 2: Examples of aggregation and generation thought transformations.

Formally, each such transformation can be modeled as $\mathcal{T}(G, p_\theta)$, where $G = (V, E)$ is the graph reflecting the current state of the reasoning, and $p_\theta$ is the used LLM. $\mathcal{T}$ usually modifies $G$ by adding new vertices and their incoming edges. We have $G' = \mathcal{T}(G, p_\theta) = (V', E')$, where $V' = (V \cup V^+) \setminus V^-$ and $E' = (E \cup E^+) \setminus E^-$. $V^+$ and $E^+$ are new vertices and edges inserted into $G$ to model the new thoughts and their dependencies, respectively. To maximize the expressiveness of GoT, we also enable the user to explicitly remove thoughts, by specifying the corresponding vertices and edges to be removed ($V^-$ and $E^-$, respectively). Here, it is the user’s responsibility to ensure that the sets $V^+$, $E^+$, $V^-$, and $E^-$ come with consistent transformations (for example, that the user does not attempt to remove a vertex that does not exist). This enables seamless incorporation of schemes where, in order to save space within the context, one can remove parts of reasoning that do not promise improvements.

The specific form of $\mathcal{T}$ and how it impacts $G$ depends on a specific transformation. We first detail the primary graph-enabled thought transformations, and then proceed to describe how GoT embraces the transformations from the earlier schemes. Unless stated otherwise, $V^- = E^- = \emptyset$.
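The effect of a transformation on the graph can be sketched with plain set operations, assuming the update takes the form $V' = (V \cup V^+) \setminus V^-$ and $E' = (E \cup E^+) \setminus E^-$. The function name `apply_transformation` and the added consistency check are illustrative assumptions; the text leaves consistency to the user.

```python
def apply_transformation(V, E, V_plus=frozenset(), E_plus=frozenset(),
                         V_minus=frozenset(), E_minus=frozenset()):
    """Return (V', E') after inserting V+, E+ and removing V-, E-."""
    V_new = (set(V) | set(V_plus)) - set(V_minus)
    E_new = (set(E) | set(E_plus)) - set(E_minus)
    # Extra safety net (assumption): reject edges that touch a missing vertex.
    dangling = {(u, v) for (u, v) in E_new if u not in V_new or v not in V_new}
    if dangling:
        raise ValueError(f"edges reference missing vertices: {sorted(dangling)}")
    return V_new, E_new


V, E = {"a", "b"}, {("a", "b")}
# Add a thought "c" derived from "b"; by default nothing is removed.
V, E = apply_transformation(V, E, V_plus={"c"}, E_plus={("b", "c")})
# Prune "a" together with its outgoing edge to save context space.
V, E = apply_transformation(V, E, V_minus={"a"}, E_minus={("a", "b")})
print(sorted(V), sorted(E))
```

Removing a vertex without also removing its edges raises an error in this sketch, which makes the consistency requirement explicit.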

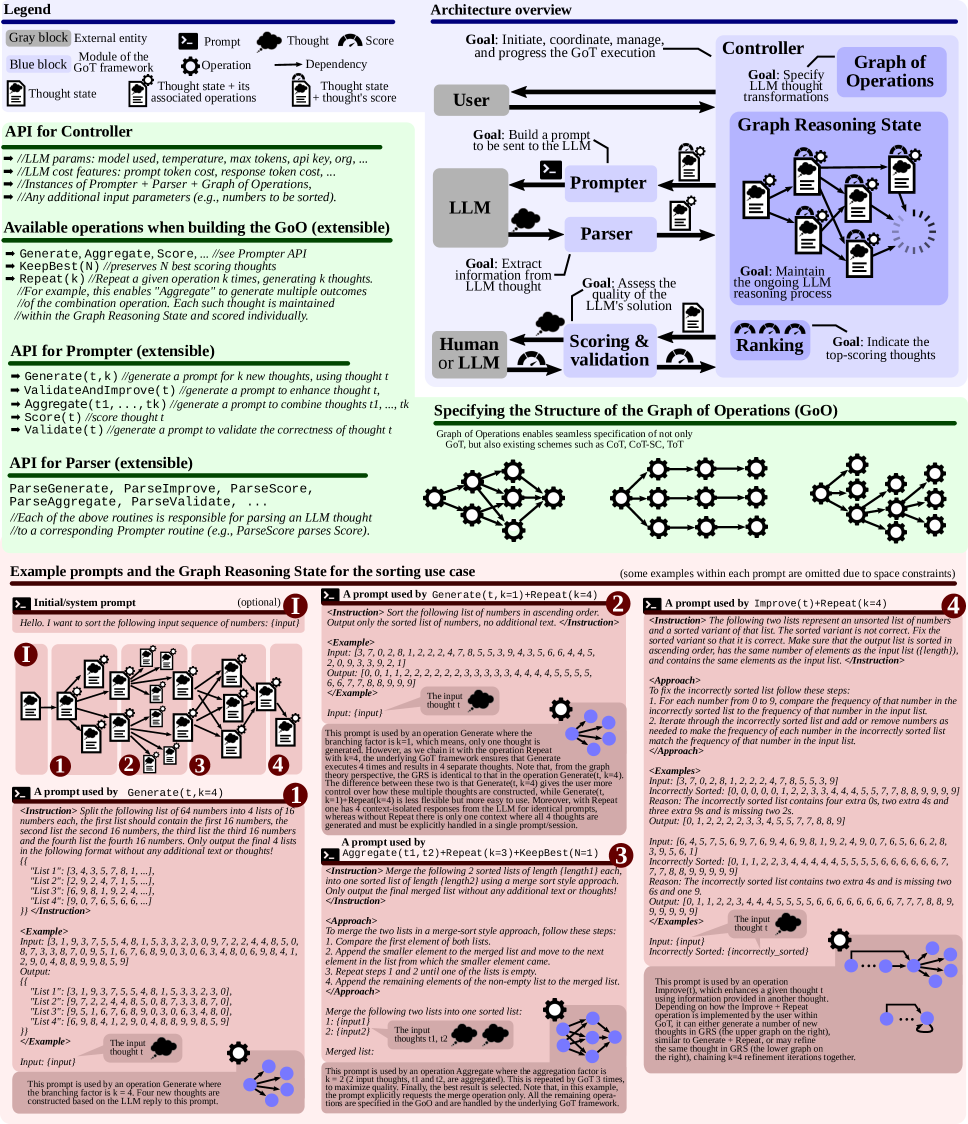

Figure 3: The system architecture of GoT, and the APIs of respective modules. The user can straightforwardly extend the design towards new prompting schemes, experiment with novel thought transformations, and plug in different LLMs. The blue part of the figure contains the architecture overview, the green part lists the API, and the red part contains example prompts together with a GRS and operations involved.

Aggregation Transformations

First, with GoT, one can aggregate arbitrary thoughts into new ones, to combine and reinforce the advantages of these thoughts, while eliminating their disadvantages. In the basic form, in which only one new vertex is created, $V^+ = \{v^+\}$ and $E^+ = \{(v_1, v^+), \dots, (v_k, v^+)\}$, where $v_1, \dots, v_k$ are the merged $k$ thoughts. More generally, this enables aggregating reasoning paths, i.e., longer chains of thoughts, beyond just individual thoughts. With the graph model, it is simply achieved by adding outgoing edges from the vertices $v_1, \dots, v_k$, modeling final thoughts in several chains, into a single thought $v^+$ combining these chains.

Refining Transformations

Another thought transformation is the refining of a current thought $v$ by modifying its content: $V^+ = \{\}$ and $E^+ = \{(v, v)\}$. This loop in the graph indicates an iterated thought with the same connections as the original thought.

Generation Transformations

Finally, one can generate one or more new thoughts based on an existing single thought $v$. This class embraces analogous reasoning steps from earlier schemes, such as ToT or CoT-SC. Formally, we have $V^+ = \{v^+_1, \dots, v^+_k\}$ and $E^+ = \{(v, v^+_1), \dots, (v, v^+_k)\}$.
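The three transformation classes can be sketched as functions that return the vertices and edges to be inserted. This assumes that aggregation adds one vertex with an incoming edge from each merged thought, refinement adds only a self-loop, and generation adds one new vertex per generated thought. The function names and vertex labels are hypothetical.

```python
def aggregate(sources, new_vertex):
    """Aggregation: one new vertex with an incoming edge from every merged thought."""
    return {new_vertex}, {(v, new_vertex) for v in sources}


def refine(vertex):
    """Refinement: no new vertex, only a self-loop on the refined thought."""
    return set(), {(vertex, vertex)}


def generate(source, new_vertices):
    """Generation: new vertices that all depend on one existing thought."""
    new_vertices = list(new_vertices)
    return set(new_vertices), {(source, v) for v in new_vertices}


# Sorting-flavored example: split an input, then merge two sorted chunks.
print(generate("input", ["chunk_a", "chunk_b"]))
print(aggregate(["sorted_a", "sorted_b"], "merged"))
print(refine("merged"))
```

Each pair of returned sets can be unioned into the current vertex and edge sets to obtain the updated graph.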

3.3 Scoring & Ranking Thoughts

Thoughts are scored to understand whether the current solution is good enough. A score is modeled as a general function $\mathcal{E}(v, G, p_\theta)$, where $v$ is a thought to be evaluated. We use the state of the whole reasoning process ($G$) in $\mathcal{E}$ for maximum generality, because, for example, in some evaluation scenarios, scores may be relative to other thoughts.

GoT can also rank thoughts. We model this with a function $\mathcal{R}(G, p_\theta, h)$, where $h$ specifies the number of highest-ranking thoughts in $G$ to be returned by $\mathcal{R}$. While the specific form of $\mathcal{R}$ depends on the use case, we most often use a simple yet effective strategy where the $h$ thoughts with the highest scores are returned, i.e., $v_1, \dots, v_h = \mathcal{R}(G, p_\theta, h)$.

Specific forms of $\mathcal{E}$ and $\mathcal{R}$ depend on the use case. We discuss the details in Section 5. For example, the score (or rank) for sorting corresponds to the count of elements correctly sorted (or incorrectly, when using the error as a score).
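The simple ranking strategy, returning the best-scoring thoughts, can be sketched as follows. The function name `rank` and the `higher_is_better` switch (for use cases where the score is an error count) are illustrative assumptions.

```python
import heapq


def rank(scores, h, higher_is_better=True):
    """Return the ids of the h best thoughts, best first.

    `scores` maps a thought id to its score.
    """
    pick = heapq.nlargest if higher_is_better else heapq.nsmallest
    return pick(h, scores, key=scores.get)


scores = {"t1": 3, "t2": 9, "t3": 7}
print(rank(scores, 2))                          # two highest scores
print(rank(scores, 1, higher_is_better=False))  # lowest error
```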

4 System Architecture & Extensibility

The GoT architecture consists of a set of interacting modules, see Figure 3 (the blue part). These modules are the Prompter (prepares the messages for the LLM), the Parser (extracts information from LLM thoughts), the Scoring module (verifies and scores the LLM thoughts), and the Controller (coordinates the entire reasoning process, and decides on how to progress it). The Controller contains two further important elements: the Graph of Operations (GoO) and the Graph Reasoning State (GRS). GoO is a static structure that specifies the graph decomposition of a given task, i.e., it prescribes transformations to be applied to LLM thoughts, together with their order & dependencies. GRS is a dynamic structure that maintains the state of the ongoing LLM reasoning process (the history of its thoughts and their states).

4.1 Prompter

The Prompter prepares the prompts to be sent to the LLM. This module is responsible for the specifics of encoding the graph structure within the prompt. The GoT architecture enables the user to implement use case specific graph encodings by providing full access to the graph structure.

4.2 Parser

The Parser extracts information from LLM thoughts. For each such thought, the Parser constructs the thought state, which contains this extracted information. The thought state is then used to update the GRS accordingly.

4.3 Scoring & Validation

Here, we verify whether a given LLM thought satisfies potential correctness conditions, and then we assign it a score. Depending on how the score is derived, the module may consult the LLM. Moreover, depending on the use case, the score may also be assigned by a human. Finally, use cases such as sorting use simple local scoring functions.

4.4 Controller

The Controller implements a specific strategy for selecting thoughts from its GRS structure. It also selects what transformations should be applied to which thoughts, and then passes this information to the Prompter. It also decides whether the whole process should be finalized, or whether the next round of interaction with the LLM should be initiated. In our current design, this is dictated by the execution plan specified in the GoO.

4.5 GoO & GRS

The user constructs a GoO instance, which prescribes the execution plan of thought operations. The GoO is a static structure that is constructed once, before the execution starts. Each operation object knows its predecessor and successor operations. Then, during the execution, an instance of the GRS maintains the continually updated information about the LLM reasoning process. This includes which operation has been executed so far, the states of all the generated LLM thoughts, their validity and scores, and any other relevant information.
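The split between a static plan and a dynamic state can be illustrated with a small sketch. It is not the API of the GoT code base: the dictionary layout, the `run` function, and the operation names are assumptions, and ordinary Python functions stand in for LLM-backed operations.

```python
import heapq
from graphlib import TopologicalSorter

# GoO: static execution plan, mapping each operation to its predecessor operations.
GOO = {
    "split": [],
    "sort_a": ["split"],
    "sort_b": ["split"],
    "merge": ["sort_a", "sort_b"],
}

# Hypothetical stand-ins for LLM-backed operations. Each receives the list of
# thoughts produced by its predecessors (or the task input if it has none).
OPERATIONS = {
    "split": lambda ins: (ins[0][: len(ins[0]) // 2], ins[0][len(ins[0]) // 2 :]),
    "sort_a": lambda ins: sorted(ins[0][0]),
    "sort_b": lambda ins: sorted(ins[0][1]),
    "merge": lambda ins: list(heapq.merge(*ins)),
}


def run(goo, operations, task_input):
    """Execute the plan in dependency order and return the final reasoning state."""
    grs = {"executed": [], "thoughts": {}}  # GRS: dynamic state, updated per operation
    for name in TopologicalSorter(goo).static_order():
        inputs = [grs["thoughts"][p] for p in goo[name]] or [task_input]
        grs["thoughts"][name] = operations[name](inputs)
        grs["executed"].append(name)
    return grs


state = run(GOO, OPERATIONS, [3, 1, 2, 9, 0, 4])
print(state["executed"])
print(state["thoughts"]["merge"])
```

The plan never changes during execution, while the state grows with every executed operation, which mirrors the static and dynamic roles described above.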

The above elements offer extensible APIs, enabling straightforward implementations of different prompting schemes. The APIs are outlined in the green part of Figure 3, and detailed in the documentation. We also provide examples of prompts used by these operations and a corresponding GRS in the red part of Figure 3.

5 Example Use Cases

We now describe several use cases of GoT. We detail one use case (sorting) and summarize the others.

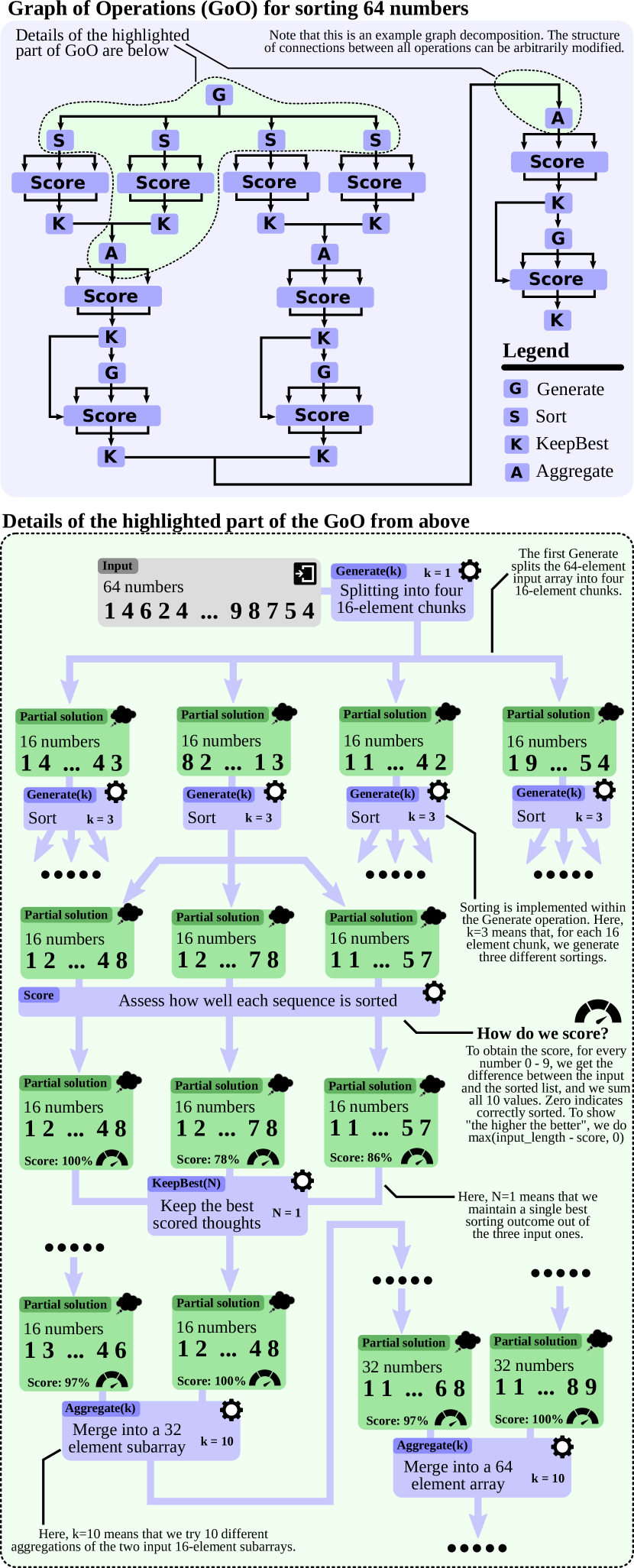

5.1 Sorting

We focus on the decomposition of the sorting use case and Graph of Operations, which are central for implementing and executing any workload within GoT.

We consider sorting sequences of numbers 0–9 with duplicates. Beyond a certain sequence length, the considered LLMs are unable to sort such sequences consistently and correctly, because the counts of duplicates in the output do not match those in the input.

In GoT, we employ merge-based sorting: First, one decomposes the input sequence of numbers into subarrays. Then, one sorts these subarrays individually, and then respectively merges them into a final solution. Figure 4 illustrates this use case together with its graph decomposition. Here, an LLM thought is a sequence of sorted numbers.

To score an outcome, denote an input sequence with $[a_1, a_2, \dots, a_n]$ and an output one with $[b_1, b_2, \dots, b_m]$. We use the following score that determines “the scope” of errors:

$$\text{error-scope} = X + Y$$

where $p \in \{1, \dots, m\}$, $q \in \{1, \dots, n\}$, and

$$X = \sum_{i=1}^{m-1} \operatorname{sgn}(\max(b_i - b_{i+1}, 0)),$$

$$Y = \sum_{i=0}^{9} \bigl|\, |\{b_p : b_p = i\}| - |\{a_q : a_q = i\}| \,\bigr|.$$

Here, $X$ indicates how many consecutive pairs of numbers are incorrectly sorted. If two numbers at positions $i$ and $i+1$ are incorrectly sorted (i.e., $b_i > b_{i+1}$), then the expression within the summation returns 1, increasing the error score by one. For two numbers correctly sorted, this expression amounts to 0. Then, $Y$ determines how well a given output sequence preserves the frequency of the input numbers. Specifically, for each considered number $x$ ($x \in \{0, \dots, 9\}$), we obtain the difference between the count of input elements equal to $x$ and the count of output elements equal to $x$. For an output sequence perfectly preserving the frequency of $x$, this would amount to 0. Any single “deviation” in this count increases the “error scope” by 1. We then sum this over all considered values of $x$. When plotting this score, to improve the clarity of plots, we additionally apply clipping $\min(\text{error-scope}, n)$, as some baselines (IO, CoT) result in large numbers of outliers with high error scope. Finally, to use a “positive score” describing “the scope of correctly sorted” elements, one can use the value $\max(n - \text{error-scope}, 0)$.
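The two error terms described above translate directly into code. The sketch below calls them `x` (adjacent pairs out of order) and `y` (frequency mismatches over the values 0 to 9); the function names are illustrative assumptions.

```python
from collections import Counter


def sorting_error_scope(inp, out, values=range(10)):
    """Out-of-order adjacent pairs plus per-value frequency mismatches."""
    # x: number of adjacent pairs in the output that are in the wrong order.
    x = sum(1 for left, right in zip(out, out[1:]) if left > right)
    # y: for each considered value, how far the output count is from the input count.
    count_in, count_out = Counter(inp), Counter(out)
    y = sum(abs(count_out[v] - count_in[v]) for v in values)
    return x + y


def positive_score(inp, out):
    """Scope of correctly sorted elements, bounded below by 0."""
    return max(len(inp) - sorting_error_scope(inp, out), 0)


print(sorting_error_scope([3, 1, 2, 2], [1, 2, 2, 3]))  # perfectly sorted
print(sorting_error_scope([3, 1, 2, 2], [1, 2, 3]))     # one duplicate dropped
print(sorting_error_scope([3, 1, 2, 2], [2, 1, 2, 3]))  # one inversion
```

A dropped duplicate and a single inversion each contribute 1 to the error, so the score penalizes both kinds of mistakes on the same scale.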

Figure 4: An example graph decomposition of the sorting use case in GoT. All used operations (Generate, Aggregate, Score, KeepBest) are described in Figure 3.

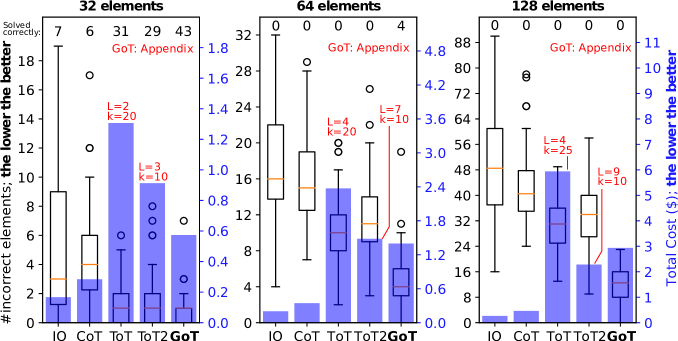

5.2 Set Operations

Moreover, we also consider set operations, focusing on set intersection. Set operations, and set intersection in particular, have numerous applications in problems ranging from genome or document comparisons to pattern matching 16 17 18 19 20 21 22 23. Set intersection of two sets is implemented similarly to sorting. The second input set is split into subsets and the intersection of those subsets with the first input set is determined with the help of the LLM. Afterwards, the resulting intersection sets are aggregated for the final result. For the evaluation we use different set sizes of 32, 64, and 128 elements, and we vary the fraction of elements found in both sets between 25% and 75%.

Our score indicates the total number of missing or incorrectly included elements in the final intersection. Specifically, denote two input sets with $A = [a_1, a_2, \dots, a_n]$ and $B = [b_1, b_2, \dots, b_n]$, and the output set with $C = [c_1, c_2, \dots, c_m]$. Then,

$$\text{error-scope} = X_1 + X_2 + X_d$$

where $X_1 = |C \setminus (A \cap B)|$ is the number of elements in $C$ that are not supposed to be there, $X_2 = |(A \cap B) \setminus C|$ is the number of elements missing from $C$, and $X_d$ is the number of duplicates in $C$ (because the LLM expresses the set as a list in natural language). Finally, to use a “positive score” describing “the scope of correctly computed” elements, one can use the value $\max(n - \text{error-scope}, 0)$.
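A minimal sketch of this score is given below, assuming the three terms count wrongly included elements, missing elements, and duplicates in the output list. The function name and variable names are illustrative.

```python
def intersection_error_scope(a, b, c):
    """Error of output list `c` as the intersection of input lists `a` and `b`."""
    expected = set(a) & set(b)
    produced = set(c)
    wrongly_included = len(produced - expected)  # in the output but not in the intersection
    missing = len(expected - produced)           # in the intersection but not in the output
    duplicates = len(c) - len(produced)          # repeated entries in the output list
    return wrongly_included + missing + duplicates


a, b = [1, 2, 3, 4], [3, 4, 5, 6]
print(intersection_error_scope(a, b, [3, 4]))     # exact intersection
print(intersection_error_scope(a, b, [3, 3, 5]))  # 5 is wrong, 4 is missing, 3 repeats
```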

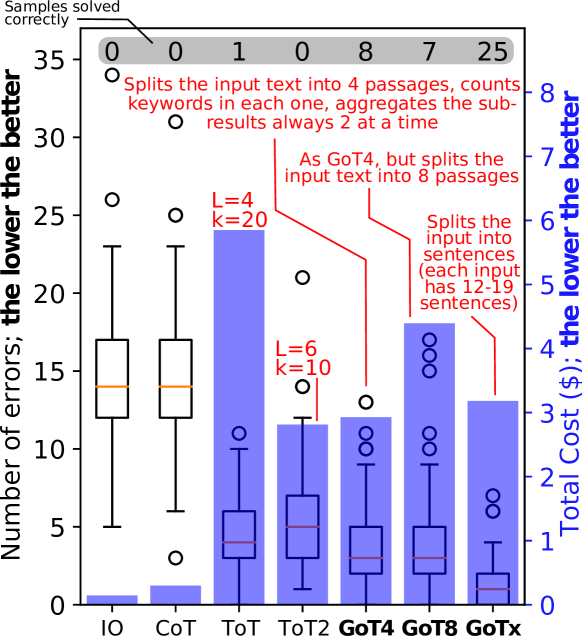

5.3 Keyword Counting

Keyword counting finds the frequency of keywords in a given category (countries in our example implementation) within the input text. GoT splits the input text into multiple passages, counts the keywords in each passage and aggregates the subresults. The number of passages is configurable and can also be left to the LLM, making it possible to treat each sentence as a separate passage. Here, to score a thought, we first – for each keyword – derive the absolute difference between the computed count and the correct one. We then sum all these differences to get the final score.
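The aggregation of per-passage counts and the resulting score can be sketched as follows. The helper names are hypothetical, and the per-passage counts, which an LLM would produce in GoT, are given directly here.

```python
from collections import Counter


def aggregate_counts(passage_counts):
    """Sum keyword counts obtained for the individual passages."""
    total = Counter()
    for counts in passage_counts:
        total.update(counts)  # adds counts key by key
    return total


def keyword_count_error(computed, correct):
    """Sum of absolute differences between computed and correct counts."""
    keys = set(computed) | set(correct)
    return sum(abs(computed.get(k, 0) - correct.get(k, 0)) for k in keys)


computed = aggregate_counts([{"Peru": 1}, {"Peru": 1, "Chile": 2}])
correct = {"Peru": 2, "Chile": 1, "Brazil": 1}
print(dict(computed), keyword_count_error(computed, correct))
```

Keywords that appear in only one of the two dictionaries count with their full frequency, so both spurious and missed keywords are penalized.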

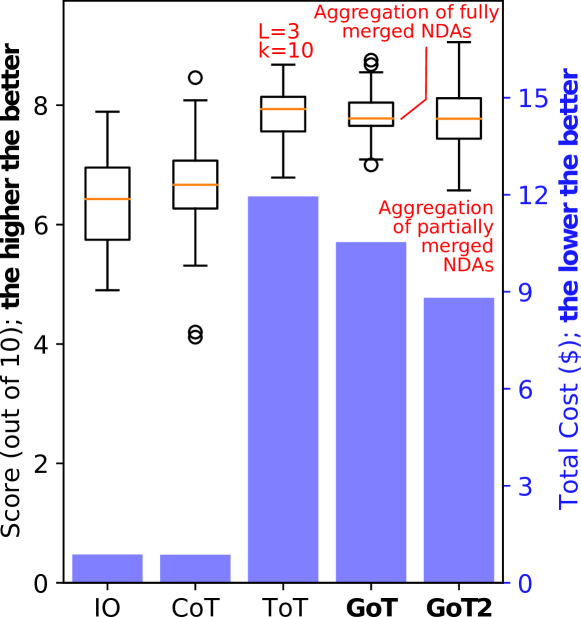

5.4 Document Merging

Finally, we also provide document merging. Here, the goal is to generate a new Non-Disclosure Agreement (NDA) document based on several input ones that partially overlap in terms of their contents. The goal is to ensure minimal amount of duplication, while maximizing information retention. Document merging is broadly applicable in, e.g., legal procedures, where multiple sources of information have to be combined into a single document or article. To score a solution, we query the LLM for two values (3 times for each value, and take the average). The first value corresponds to the solution redundancy (10 indicates no redundancy, 0 implies at least half the information is redundant), the second value stands for information retention (10 indicates all information is retained, 0 says that none is retained). We compute the harmonic mean of these values.
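The scoring procedure can be sketched as below, with the LLM judgments passed in as plain numbers. The function name and the handling of the all-zero case are assumptions made for this illustration.

```python
from statistics import fmean


def merge_score(redundancy_samples, retention_samples):
    """Harmonic mean of the averaged redundancy and retention values (0 to 10 each)."""
    redundancy = fmean(redundancy_samples)
    retention = fmean(retention_samples)
    if redundancy + retention == 0:
        return 0.0  # assumption: define the score as 0 when both values are 0
    return 2 * redundancy * retention / (redundancy + retention)


# Three hypothetical LLM judgments per value.
print(merge_score([8, 9, 7], [6, 6, 6]))
print(merge_score([10, 10, 10], [0, 0, 0]))
```

The harmonic mean stays low whenever either value is low, so a merged document cannot score well by avoiding redundancy while dropping most of the information.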

6 The Latency-Volume Tradeoff

We now show that GoT improves upon previous prompting schemes in terms of the tradeoff between latency (number of hops in the graph of thoughts to reach a given final thought) and volume. We define volume, for a given thought $t$, as the number of preceding LLM thoughts that could have impacted $t$. Formally, the volume of $t$ is the number of thoughts from which there exists a path to $t$ in the graph of thoughts. We assume that outputting a single thought costs $O(1)$ time and fix the total cost to $\Theta(N)$ for each prompting scheme.

The structure of the schemes is as follows. CoT-SC consists of $k$ independent chains originating from a single starting thought. ToT is a complete $k$-ary tree. Finally, in GoT, a complete $k$-ary tree is joined at its leaves with a “mirrored” $k$-ary tree of the same size but with its edges reversed.

The analysis is detailed in Table 2. CoT offers a large volume of up to $N$, but at the cost of a high latency of $N$. CoT-SC reduces the latency by a factor of $k$ (which corresponds to its branching factor), but it simultaneously decreases the volume by a factor of $k$ as well. ToT offers a latency of $\log_k N$ but also has low volume. GoT is the only scheme to come with both a low latency of $\log_k N$ and a high volume $N$. This is enabled by the fact that GoT harnesses aggregations of thoughts, making it possible to reach the final thought from any other intermediate thought in the graph decomposition.

| Scheme | Latency | Volume |
|---|---|---|
| Chain-of-Thought (CoT) | $N$ | $N$ |
| Self-Consistency with CoT (CoT-SC) | $N/k$ | $N/k$ |
| Tree of Thoughts (ToT) | $\log_k N$ | $O(\log_k N)$ |
| Graph of Thoughts (GoT) | $\log_k N$ | $N$ |

Table 2: Comparison of prompting schemes, with respect to their fundamental tradeoff between latency and volume. GoT offers the best tradeoff.
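The tradeoff can be checked on small instances by building the four structures explicitly and measuring the latency and volume of their final thoughts. The sketch below assumes a binary branching factor and integer vertex ids; all function names are illustrative. Note that the GoT instance has more vertices than the plain tree (22 versus 15 here), which only changes the cost by a constant factor.

```python
from collections import deque


def measure(edges, source, target):
    """Return (latency, volume): hops from source to target, and ancestors of target."""
    parents, children = {}, {}
    for u, v in edges:
        parents.setdefault(v, []).append(u)
        children.setdefault(u, []).append(v)
    seen, stack = set(), [target]  # volume: reverse reachability from target
    while stack:
        for p in parents.get(stack.pop(), []):
            if p not in seen:
                seen.add(p)
                stack.append(p)
    dist, queue = {source: 0}, deque([source])  # latency: BFS hop count
    while queue:
        u = queue.popleft()
        for c in children.get(u, []):
            if c not in dist:
                dist[c] = dist[u] + 1
                queue.append(c)
    return dist[target], len(seen)


def chain(n):
    return [(i, i + 1) for i in range(n - 1)], 0, n - 1


def cot_sc(n, k):
    m = (n - 1) // k  # length of each of the k chains hanging off the start thought
    edges = []
    for j in range(k):
        prev = 0
        for i in range(m):
            node = 1 + j * m + i
            edges.append((prev, node))
            prev = node
    return edges, 0, m  # vertex m is the end of the first chain


def tree(k, depth):
    n = (k ** (depth + 1) - 1) // (k - 1)
    return [((c - 1) // k, c) for c in range(1, n)], n


def tot(k, depth):
    edges, n = tree(k, depth)
    return edges, 0, n - 1  # the last leaf is the final thought


def got(k, depth):
    edges, n = tree(k, depth)
    first_leaf = (k ** depth - 1) // (k - 1)

    def mirror(v):
        return v if v >= first_leaf else n + v  # leaves are shared by both trees

    edges = edges + [(mirror(c), mirror(p)) for p, c in edges]
    return edges, 0, n  # vertex n mirrors the root and is the final thought


for name, built in [("CoT", chain(15)), ("CoT-SC", cot_sc(15, 2)),
                    ("ToT", tot(2, 3)), ("GoT", got(2, 3))]:
    print(name, measure(*built))
```

In this small instance, only the GoT structure combines a latency that grows with the tree depth with a volume that covers every other thought in the graph.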

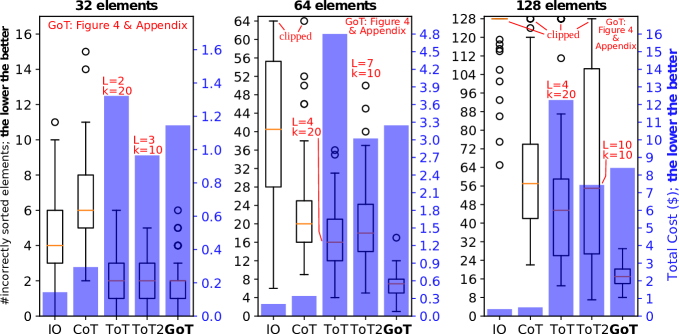

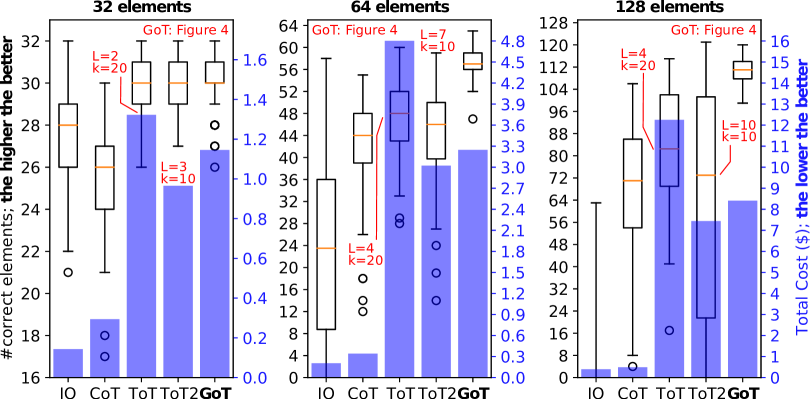

Figure 5: Number of errors and cost in sorting tasks with ChatGPT-3.5. $L$ and $k$ indicate the structure of ToT (see Sections 3.2 and 6).

7 Evaluation

We show the advantages of GoT over the state of the art. We focus on comparing GoT to ToT, as it was shown to consistently outperform other schemes. Still, for a broad comparison, we also experiment with IO, CoT, and CoT-SC. As our analysis results in a large evaluation space, we present representative results and omit data that does not bring relevant insights (e.g., CoT-SC).

7.1 Evaluation Methodology

We use 100 input samples for each task and comparison baseline. We set the temperature to 1.0 and use a 4k context size unless stated otherwise. For each experiment, we fix the numbers of thoughts in respective schemes to achieve similar costs in each experiment.

Parameters We experiment extensively with the branching factor $k$ and the number of levels $L$ to ensure that we compare GoT to cost-effective and advantageous configurations. We plot two variants of ToT: one with higher $k$ and lower depth (ToT), the other with lower $k$ but higher $L$ (ToT2). We usually aim to achieve a sweet spot in the tradeoff between sparser generation rounds (lower $k$) vs. more rounds (larger $L$). Usually more responses per round are more expensive (e.g., 80 vs. 60 total responses for Figure 7 but 3 costs). We also try different problem sizes $P$ (e.g., in sorting, $P$ states how many numbers are to be sorted).

Used LLMs Due to budget restrictions, we focus on GPT-3.5. We also experimented with Llama-2, but it was usually worse than GPT-3.5 and also much slower to run, making it infeasible to obtain enough samples.

7.2 Analysis of GoT’s Advantages

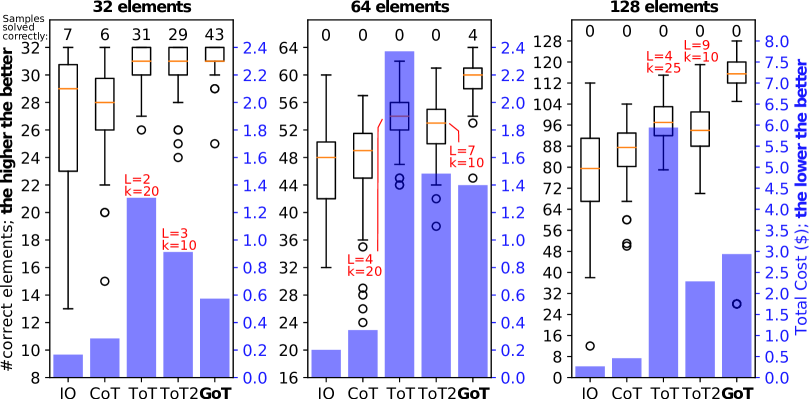

The results of the analysis are in Figures 5 (sorting), 6 (set intersection), 7 (keyword counting), and 8 (document merging); see Section 5 for the description of specific use cases. Overall, GoT improves the quality of outcomes over all the considered baselines and it reduces inference costs compared to ToT.

Figure 6: Number of errors and cost in set intersection tasks with ChatGPT-3.5. $L$ and $k$ indicate the structure of ToT (see Sections 3.2 and 6).

GoT vs. ToT GoT improves upon ToT and ToT2 by a large margin over all the considered problem instances. ToT usually comes with somewhat higher quality than ToT2, but simultaneously much higher costs. GoT’s costs are always lower than those of ToT, and comparable (in some cases lower, in others higher) to those of ToT2. For example, in sorting, GoT reduces the median error by 62% in comparison to ToT, thereby achieving a higher quality, while ensuring 31% cost reductions. These advantages are due to GoT’s ability to decompose complex tasks into simpler subtasks, solve these subtasks independently, and then incrementally merge these outcomes into the final result.

GoT vs. IO and CoT GoT consistently delivers much higher quality of outcomes than IO/CoT. For example, for sorting, GoT’s median error is 65% and 83% lower than that of, respectively, CoT and IO. Yet, the costs of GoT and ToT are much higher than those of IO and CoT. This is mostly due to our configuration of CoT, where we do not artificially inflate the lengths of the chains of reasoning if this does not improve the outcomes. The higher costs of GoT and ToT are driven by new thoughts built for each Generate operation; these multiple thoughts are one of the reasons for GoT’s superiority in quality.

Increasing Complexity of Tackled Problems Most importantly, the quality advantages of GoT over all the baselines increase with the growing size of the problem $P$. For example, in sorting, while GoT only negligibly improves upon ToT2 for the smallest considered problem size, its median error count becomes lower by 61% and 69% for the two larger problem sizes. The quartiles also become respectively better. The results for other schemes also follow the intuition; for example, IO becomes consistently worse with the increasing $P$, which is expected as a single thought is unlikely to solve a large problem instance. Overall, this analysis illustrates that GoT is indeed well-suited for elaborate problem cases, as the execution schedules usually become more complex with the growing problem sizes.

Figure 7: Number of errors and cost in keyword counting with ChatGPT-3.5. $L$ and $k$ indicate the structure of ToT (see Sections 3.2 and 6).

Figure 8: Score and cost in document merging with ChatGPT-3.5. $L$ and $k$ indicate the structure of ToT (see Sections 3.2 and 6). Number of samples: 50; context size: 16k tokens.

7.3 Discussion on Task Decomposition

When splitting a task into subtasks and then solving these subtasks, the size of responses and the input (in tokens) are reduced proportionally to the degree of the task decomposition. However, the “static” part of the prompt (i.e., few-shot examples) may become a significant overhead (see GoT4 to GoT8 in Figure 7). Here, we observe that these few-shot examples can usually also be reduced in size (e.g., the passages used to demonstrate keyword counting can also be made smaller and still be indicative of the actual input size), thus actively working towards decreasing the cost (e.g., see the difference between GoT8 and GoTx in Figure 7).

The overall goal when conducting graph decomposition is to break down a task to the point where the LLM can solve it correctly most of the time using a single prompt (or with a few additional improvement steps). This significantly lowers the number of improvement/refinement steps needed during the later stages of the graph exploration. Furthermore, as indicated by our results, combining or concatenating subresults is usually an easier task than solving large task instances from scratch. Hence, the LLM is often successful when aggregating the final solution.

8 Related Work

8.1 Prompting Paradigms & Approaches

We detail different prompting paradigms in Section 1 and Table 1. There are numerous other works related to prompting. We now briefly summarize the most closely related ones; more extensive descriptions can be found in dedicated surveys 24 25 26 27. Wang et al. proposed Plan-and-Solve, an approach to enhance CoT with an explicit planning stage 28. Fu et al. designed a scheme that uses complexity-based criteria to enhance prompting within a CoT 9 29. The self-taught reasoner (STaR) 30 generates several chains of thought and selects the ones that are valid. Similarly, a scheme by Shum et al. 31 generates a pool of CoT candidates and selects the best candidate based on whether the candidates match the ground truth and on a policy gradient-based method. Automatic prompt generation overcomes the issues of scaling in CoT 32 33 34. Zhou et al. propose selecting the best prompt out of a candidate set 35. Skeleton-of-Thought 36 first generates a number of skeleton answers (brief bullet points of 3 to 5 words) and then expands on these points in parallel in a second step.

Finally, in prompt chaining, one cascades different LLMs. This enables prompting different LLMs via different contexts, enabling more powerful reasoning 37 38 39 40 41 42 39. GoT is orthogonal to this class of schemes, as it focuses on single-context capabilities.

8.2 Self-Reflection & Self-Evaluation

Self-reflection and self-evaluation were introduced recently 43 44 45 12 46. They are used to enhance different tasks, for example code generation 47 or computer operation tasks 48. In GoT, we partially rely on self-evaluation when deciding how to expand the graph of thoughts within a prompt.

8.3 LLMs & Planning

Many recent works address how to plan complex tasks with LLMs 49 50 51 52 53 54. GoT could be seen as a generic framework that could potentially be used to enhance such schemes, by offering a paradigm for generating complex graph-based plans.

8.4 Graphs and Graph Computing

Graphs have become an immensely popular and important part of the general computing landscape 55 56 57 58 59. Recently, there has been a growing interest in domains such as graph databases 60 61 62 63 64, graph pattern matching 65 66 67 22 68 19, graph streaming 69 70 71, and graph machine learning as well as graph neural networks 72 73 74 75 76 72 77 78 79 80 81. The graph abstraction has been fruitful for many modern research domains, such as social sciences (e.g., studying human interactions), bioinformatics (e.g., analyzing protein structures), chemistry (e.g., designing chemical compounds), medicine (e.g., drug discovery), cybersecurity (e.g., identifying intruder machines), healthcare (e.g., exposing groups of people who submit fraudulent claims), web graph analysis (e.g., providing accurate search services), entertainment services (e.g., predicting movie popularity), linguistics (e.g., modeling relationships between words), transportation (e.g., finding efficient routes), physics (e.g., understanding phase transitions and critical phenomena), and many others 55 16 18 82 83. In this work, we harness the graph abstraction as a key mechanism that enhances prompting capabilities in LLMs.

9 Conclusion

Prompt engineering is one of the central new domains of large language model (LLM) research. It enables using LLMs efficiently, without any model updates. However, designing effective prompts is a challenging task.

In this work, we propose Graph of Thoughts (GoT), a new paradigm that enables the LLM to solve different tasks effectively without any model updates. The key idea is to model the LLM reasoning as an arbitrary graph, where thoughts are vertices and dependencies between thoughts are edges. This enables novel transformations of thoughts, such as aggregation. Human task solving is often non-linear, and it involves combining intermediate solutions into final ones, or changing the flow of reasoning upon discovering new insights. GoT reflects this with its graph structure.

GoT outperforms other prompting schemes, for example ensuring a 62% increase in the quality of sorting over ToT, while simultaneously reducing costs by 31%. We also propose a novel metric for a prompting scheme, the volume of a thought, to indicate the scope of information that a given LLM output could carry with it, where GoT also excels. This provides a step towards more principled prompt engineering.

The graph abstraction has been the foundation of several successful designs in computing and AI over the last decades, for example AlphaFold for protein predictions. Our work harnesses it within the realm of prompt engineering.

Acknowledgements

We thank Hussein Harake, Colin McMurtrie, Mark Klein, Angelo Mangili, and the whole CSCS team for granting access to the Ault and Daint machines, and for their excellent technical support. We thank Timo Schneider for help with infrastructure at SPCL. This project received funding from the European Research Council (Project PSAP, No. 101002047), and the European High-Performance Computing Joint Undertaking (JU) under grant agreement No. 955513 (MAELSTROM). This project was supported by the ETH Future Computing Laboratory (EFCL), financed by a donation from Huawei Technologies. This project received funding from the European Union’s HE research and innovation programme under the grant agreement No. 101070141 (Project GLACIATION).

References

Appendix A Positive Score Evaluation

The following figures plot the same data as Figures 5 and 6, respectively, but use the “positive score” described in Sections 5.1 and 5.2.

Figure 9: Accuracy and cost in sorting tasks with ChatGPT-3.5. L and k indicate the structure of ToT (see Sections 3.2 and 6).

Figure 10: Accuracy and cost in set intersection with ChatGPT-3.5. L and k indicate the structure of ToT (see Sections 3.2 and 6).

Appendix B Example Prompts - Sorting

We present the prompts only for the sorting of 32-element lists, as those for 64-element and 128-element lists are identical, except for the split_prompt where the number of elements in the one-shot example matches the problem size.

For sorting, we employ three distinct types of operations that interact with the LLM, each with its corresponding prompts. First, there is the Generate operation, utilizing the sort_prompt to guide the LLM in sorting a provided list of values, and the split_prompt to direct the LLM to split a specified list into a designated number of sublists. Next, the Improve operation employs the improve_prompt to instruct the LLM to refine a sorted list if it detects mistakes. Finally, the Aggregate operation leverages the merge_prompt to guide the LLM in merging two pre-sorted lists into a single sorted list.

First, we present the prompt stubs (Table 3), serving as templates to dynamically generate appropriate prompts at runtime. For clarity, we display their corresponding few-shot examples separately in Table 4. Following this, we outline the LLM interactions throughout the process of solving the sorting use case (Table 5 - Table 9).

Table 3: Prompt stubs for the sorting tasks; parameters in single curly brackets will be substituted at runtime.

sort_prompt:

Table 4: Few-shot examples for each prompt used for the sorting tasks; some lists are truncated for brevity.

sort_prompt:

Table 5: Sorting of a 32 element list: Execution plan (GoO)

GoO:
1. Split the input list into two sub-lists of equal size (split_prompt)
2. For each sub-list: Sort the sub-list (sort_prompt) five times; score each sort attempt; keep the best
3. Merge the sorted sub-lists into one fully sorted list (merge_prompt) 10 times; score each merge attempt; keep the best
4. Fix any potential mistakes in the sorted list (improve_prompt) 10 times; score each improvement attempt; keep the best
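The control flow of this execution plan can be sketched in a few lines of Python. This is a minimal illustration, not the framework's implementation: `mock_llm_sort` is a hypothetical stand-in for every LLM call (sort, merge, and improve), and `count_errors` is an assumed scoring function that counts adjacent inversions plus missing or surplus elements.

```python
import random
from collections import Counter
from typing import Callable, List


def count_errors(candidate: List[int], original: List[int]) -> int:
    """Assumed score (lower is better): adjacent inversions plus
    elements that are missing or surplus relative to the original list."""
    inversions = sum(1 for a, b in zip(candidate, candidate[1:]) if a > b)
    diff = Counter(candidate)
    diff.subtract(Counter(original))
    return inversions + sum(abs(v) for v in diff.values())


def mock_llm_sort(values: List[int], rng: random.Random) -> List[int]:
    """Hypothetical stand-in for an LLM call: sorts, but sometimes swaps
    two neighboring elements to imitate a mistake."""
    out = sorted(values)
    if len(out) > 1 and rng.random() < 0.5:
        i = rng.randrange(len(out) - 1)
        out[i], out[i + 1] = out[i + 1], out[i]
    return out


def keep_best(attempts: int, generate: Callable[[], List[int]],
              reference: List[int]) -> List[int]:
    """Run `generate` several times and keep the lowest-error candidate."""
    candidates = [generate() for _ in range(attempts)]
    return min(candidates, key=lambda c: count_errors(c, reference))


def solve_sorting(values: List[int], rng: random.Random) -> List[int]:
    mid = len(values) // 2
    halves = [values[:mid], values[mid:]]            # step 1: split
    sorted_halves = []
    for half in halves:                              # step 2: sort, 5 attempts
        sorted_halves.append(
            keep_best(5, lambda: mock_llm_sort(half, rng), half))
    joined = sorted_halves[0] + sorted_halves[1]
    merged = keep_best(                              # step 3: merge, 10 attempts
        10, lambda: mock_llm_sort(joined, rng), values)
    return keep_best(                                # step 4: improve, 10 attempts
        10, lambda: mock_llm_sort(merged, rng), values)


rng = random.Random(0)
data = [rng.randrange(10) for _ in range(32)]
result = solve_sorting(data, rng)
print(result, count_errors(result, data))
```

The sketch shows why repeated attempts with scoring help: a single noisy call is wrong about half the time in this mock, while keeping the best of several attempts makes a residual error unlikely.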

Table 6: Sorting of a 32 element list: Step 1 (Prompt/Response)

Step 1 – Prompt:

Table 7: Sorting of a 32 element list: Step 2 (Prompts/Responses)

Step 2a – Prompt:

Table 8: Sorting of a 32 element list: Step 3 (Prompt/Responses)

Step 3 – Prompt:

Table 9: Sorting of a 32 element list: Step 4 (Prompt/Responses)

Step 4 – Prompt:

Appendix C Example Prompts - Set Intersection

We present the prompts only for the intersection of two 32-element sets, as those for 64-element and 128-element sets are identical, except for the split_prompt where the size of the split is adjusted proportionally.

For set intersection, we employ two distinct types of operations that interact with the LLM, each with its corresponding prompts. First, there is the Generate operation, utilizing the intersect_prompt to guide the LLM in intersecting two input sets, and the split_prompt to direct the LLM to split a specified set into a designated number of distinct subsets. Second, the Aggregate operation leverages the merge_prompt to guide the LLM in combining two sets into one.

First, we present the prompt stubs (Table 10), serving as templates to dynamically generate appropriate prompts at runtime. For clarity, we display their corresponding few-shot examples separately in Table 11. Following this, we outline the LLM interactions throughout a complete set intersection process (Table 12 - Table 15).

Table 10: Prompt stubs for the set intersection tasks; parameters in single curly brackets will be substituted at runtime.

intersect_prompt:

Table 11: Few-shot examples for each prompt used for the set intersection tasks; some lists are truncated for brevity.

intersect_prompt:

Table 12: Intersection of two 32-element sets: Execution plan (GoO)

GoO:
1. Split the second input set into two sub-sets of equal size (split_prompt)
2. For each sub-set: Intersect the sub-set with the first input set (intersect_prompt) five times; score each intersection attempt; keep the best
3. Merge the resulting intersections into one full intersection set (merge_prompt) 10 times; score each merge attempt; keep the best
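This plan is correct because intersecting the first set with each half of the second set and then uniting the partial results yields the full intersection. The sketch below checks that decomposition with exact Python set operations in place of LLM calls; the function name and the example values are hypothetical.

```python
from typing import List, Set


def intersect_by_parts(first: Set[int], second: List[int]) -> Set[int]:
    """Mirror the three plan steps with exact set operations."""
    mid = len(second) // 2
    parts = [second[:mid], second[mid:]]          # step 1: split the second set
    partial = [first & set(p) for p in parts]     # step 2: intersect each part
    return partial[0] | partial[1]                # step 3: merge the results


first = {1, 2, 3, 4, 5, 6}
second = [4, 5, 6, 7, 8, 9]
print(intersect_by_parts(first, second) == first & set(second))
```

In the actual scheme each step is performed by the LLM and may contain mistakes, which is why steps 2 and 3 are repeated and scored.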

Table 13: Intersection of two 32-element sets: Step 1 (Prompt/Response)

Step 1 – Prompt:

Table 14: Intersection of two 32-element sets: Step 2 (Prompts/Responses)

Step 2a – Prompt:

Table 15: Intersection of two 32-element sets: Step 3 (Prompt/Responses)

Step 3 – Prompt:

Appendix D Example Prompts - Keyword Counting

We present the prompts only for GoT4 of the keyword counting task, as those used for GoT8 and GoTx are identical, except for minor differences in the split_prompt where the size of the split is adjusted.

For keyword counting, we employ three distinct types of operations that interact with the LLM, each with its corresponding prompts. First, there is the Generate operation, utilizing the count_prompt to guide the LLM in counting the keywords in a text, and the split_prompt to direct the LLM to split a given text into a number of passages. Next, the Aggregate operation leverages the merge_prompt to guide the LLM in merging two dictionaries of counted keywords into one. Finally, the ValidateAndImprove operation employs the improve_merge_prompt to instruct the LLM to correct mistakes that were made in a previous Aggregate operation.

We present the prompt stubs (Table 16 - Table 17), serving as templates to dynamically generate appropriate prompts at runtime. For clarity, we display their corresponding few-shot examples separately in Table 18 and Table 19. Following this, we outline the LLM interactions throughout a complete keyword counting process (Table 20 - Table 28).

Table 16: Prompt stubs for the keyword counting task; parameters in single curly brackets will be substituted at runtime.

count_prompt:

Table 17: Prompt stubs for the keyword counting task continued; parameters in single curly brackets will be substituted at runtime.

merge_prompt:

Table 18: Few-shot examples for count prompt used for the keyword counting task; some paragraphs and dictionaries are truncated and formatting is slightly adjusted for brevity.

count_prompt:

Table 19: Few-shot examples for split, merge and improve_merge prompts used for the keyword counting task; some paragraphs and dictionaries are truncated and formatting is slightly adjusted for brevity.

split_prompt:

Table 20: Keyword counting for an example 4-passage split (GoT4): Execution plan (GoO)

GoO:
1. Split the input text into four paragraphs of roughly equal size (split_prompt)
2. For each paragraph: Count the occurrences of individual countries (count_prompt) 10 times; score each counting attempt; keep the best
3. Merge the country counts into one dictionary (merge_prompt) 3 times; validate and improve invalid merge attempts (improve_merge_prompt) up to 3 attempts each; score; keep the best
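The merge in step 3 has a simple exact counterpart: adding the per-paragraph counts key by key. The sketch below shows that reference computation with Python's `collections.Counter`; the function name and the example dictionaries are hypothetical, and in the actual scheme the LLM performs the merge via merge_prompt.

```python
from collections import Counter
from typing import Dict


def merge_counts(a: Dict[str, int], b: Dict[str, int]) -> Dict[str, int]:
    """Add two keyword-count dictionaries key by key."""
    return dict(Counter(a) + Counter(b))


# Hypothetical per-paragraph counts.
left = {"Iran": 2, "China": 1}
right = {"China": 1, "Italy": 2}
print(merge_counts(left, right))
```

Such an exact merge could also serve as a check on an LLM-produced merge before an improvement step is triggered.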

Table 21: Keyword counting for an example 4-passage split (GoT4): Step 1 (Prompt/Response)

Step 1 – Prompt:

Table 22: Keyword counting for an example 4-passage split (GoT4): Step 2a (Prompt/Responses)

Step 2a – Prompt:

Table 23: Keyword counting for an example 4-passage split (GoT4): Step 2b (Prompt/Responses)

Step 2b – Prompt:

(4 Errors - Missing one “Iran”, one “China”, one “Italy” and one “France”)

2. {{ “Iran”: 1, “China”: 1, “Italy”: 1, “France”: 1, “United States”: 1, “Canada”: 1 }} (4 Errors - Missing one “Iran”, one “China”, one “Italy” and one “France”)

3. {{ “Iran”: 3, “China”: 2, “Italy”: 1, “France”: 1, “United States”: 1, “Canada”: 1 }} (3 Errors - Surplus of one “Iran”, missing one “Italy” and one “France”)

4. {{ “Iran”: 1, “China”: 1, “Italy”: 1, “France”: 1, “United States”: 1, “Canada”: 1 }} (4 Errors - Missing one “Iran”, one “China”, one “Italy” and one “France”)

5. {{ “Iran”: 2, “China”: 2, “Italy”: 1, “France”: 1, “United States”: 1, “Canada”: 1 }} (2 Errors - Missing one “Italy” and one “France”)

6. {{ “Iran”: 1, “China”: 1, “Italy”: 1, “France”: 1, “United States”: 1, “Canada”: 1 }} (4 Errors - Missing one “Iran”, one “China”, one “Italy” and one “France”)

7. {{ “Iran”: 1, “China”: 1, “Italy”: 1, “France”: 1, “United States”: 1, “Canada”: 1 }} (4 Errors - Missing one “Iran”, one “China”, one “Italy” and one “France”)

8. {{ “Iran”: 1, “China”: 1, “Italy”: 1, “France”: 1, “United States”: 1, “Canada”: 1 }} (4 Errors - Missing one “Iran”, one “China”, one “Italy” and one “France”)

9. {{ “Iran”: 1, “China”: 1, “Italy”: 1, “France”: 1, “United States”: 1, “Canada”: 1 }} (4 Errors - Missing one “Iran”, one “China”, one “Italy” and one “France”)

10. {{ “Iran”: 2, “China”: 2, “Italy”: 1, “France”: 2, “United States”: 1, “Canada”: 1 }} (1 Error - Missing one “Italy”)

Table 24: Keyword counting for an example 4-passage split (GoT4): Step 2c (Prompt/Responses)

Step 2c – Prompt:

(Fully Correct)

2. {{ “United States”: 1, “Canada”: 1, “Australia”: 2, “New Zealand”: 2, “Ukraine”: 1, “Poland”: 1 }} (Fully Correct)

3. {{ “United States”: 1, “Canada”: 1, “Australia”: 1, “New Zealand”: 1, “Australia and New Zealand”: 1, “Ukraine”: 1, “Poland”: 1 }} (3 Errors - Missing one “Australia”, one “New Zealand” and surplus of one “Australia and New Zealand”)

4. {{ “United States”: 1, “Canada”: 1, “Australia”: 2, “New Zealand”: 2, “Ukraine”: 1, “Poland”: 1 }} (Fully Correct)

5. {{ “United States”: 1, “Canada”: 1, “Australia”: 1, “New Zealand”: 1, “Australia and New Zealand”: 1, “Ukraine”: 1, “Poland”: 1 }} (3 Errors - Missing one “Australia”, one “New Zealand” and surplus of one “Australia and New Zealand”)

6. {{ “United States”: 1, “Canada”: 1, “Australia”: 1, “New Zealand”: 1, “Ukraine”: 1, “Poland”: 1 }} (2 Errors - Missing one “Australia” and one “New Zealand”)

7. {{ “United States”: 1, “Canada”: 1, “Australia”: 2, “New Zealand”: 2, “Ukraine”: 1, “Poland”: 1 }} (Fully Correct)

8. {{ “United States”: 1, “Canada”: 1, “Australia”: 1, “New Zealand”: 1, “Ukraine”: 1, “Poland”: 1 }} (2 Errors - Missing one “Australia” and one “New Zealand”)

9. {{ “United States”: 1, “Canada”: 1, “Australia”: 2, “New Zealand”: 2, “Ukraine”: 1, “Poland”: 1 }} (Fully Correct)

10. {{ “United States”: 1, “Canada”: 1, “Australia”: 2, “New Zealand”: 2, “Ukraine”: 1, “Poland”: 1 }} (Fully Correct)
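The error annotations on these responses are consistent with summing, over all keys, the absolute difference between the predicted and the correct count. The sketch below implements that reading; it is an inference from the annotations rather than a scoring function confirmed by the text, and the function name is hypothetical. The reference dictionary is taken from the responses marked as fully correct.

```python
from typing import Dict


def count_errors(predicted: Dict[str, int], truth: Dict[str, int]) -> int:
    """Sum of absolute count differences over all keys in either dictionary."""
    keys = set(predicted) | set(truth)
    return sum(abs(predicted.get(k, 0) - truth.get(k, 0)) for k in keys)


truth = {"United States": 1, "Canada": 1, "Australia": 2,
         "New Zealand": 2, "Ukraine": 1, "Poland": 1}

# Response 3: merges one mention of each country into a spurious combined key.
response_3 = {"United States": 1, "Canada": 1, "Australia": 1, "New Zealand": 1,
              "Australia and New Zealand": 1, "Ukraine": 1, "Poland": 1}

# Response 6: misses one mention of each of the two countries.
response_6 = {"United States": 1, "Canada": 1, "Australia": 1,
              "New Zealand": 1, "Ukraine": 1, "Poland": 1}

print(count_errors(response_3, truth))  # 3, matching the annotation
print(count_errors(response_6, truth))  # 2, matching the annotation
```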

Table 25: Keyword counting for an example 4-passage split (GoT4): Step 2d (Prompt/Responses)

Step 2d – Prompt:

Table 26: Keyword counting for an example 4-passage split (GoT4): Step 3a (Prompt/Responses)

Step 3a – Prompt:

Table 27: Keyword counting for an example 4-passage split (GoT4): Step 3b (Prompt/Responses)

Step 3b – Prompt:

Table 28: Keyword counting for an example 4-passage split (GoT4): Step 3c (Prompt/Responses)

Step 3c – Prompt:

Appendix E Example Prompts - Document Merging

We present the prompts only for GoT of the document merging task, as GoT2 only differs in the fact that it merges the 4 NDAs in 2 steps rather than 1. For document merging, we employ four distinct types of operations that interact with the LLM, each with its corresponding prompts. First, there is the Generate operation, utilizing the merge_prompt to instruct the LLM to merge the 4 NDAs into 1. Second, the Score operation instructs the LLM to score a given merged NDA using the score_prompt. Next, the Aggregate operation employs the aggregate_prompt to instruct the LLM to aggregate multiple merge attempts into a single, better one. Finally, the Improve operation leverages the improve_prompt to instruct the LLM to improve a merged NDA.

First, we present the prompt stubs (Table 29 - Table 30), serving as templates to dynamically generate appropriate prompts at runtime. Following this, we outline the LLM interactions throughout a complete merging process (Table 31 - Table 49). However, instead of displaying each input/generated NDA in every prompt/response, we present the 4 input NDAs in Table 31 - Table 33 and the final merged NDA in Table 49. Furthermore, as scoring is done using the LLM as well, we will present these interactions for the best performing merged NDAs (Tables 39 - 40 and Tables 47 - 48). Lastly, most responses are limited to a few lines only, as they don’t offer any further insights and would otherwise span multiple pages. However, we refer the interested reader to the results in the corresponding code repository 2 for full logs and further examples.

Table 29: Prompt stubs for the document merging task; parameters in single curly brackets will be substituted at runtime.

merge_prompt: Merge the following 4 NDA documents merges NDAs in terms of how much redundant information is contained, independent of the original NDAs, as well as how much information is retained from the original NDAs. A score of 10 for redundancy implies that absolutely no information is redundant, while a score of 0 implies that at least half of the information is redundant (so everything is at least mentioned twice). A score of 10 for retained information implies that all information from the original NDAs is retained, while a score of 0 implies that no information is retained. You may provide reasoning for your scoring, but the final score for redundancy should be between the tags : {s} aggregate_prompt: The following NDAs

Table 30: Prompt stubs for the document merging task continued; parameters in single curly brackets will be substituted at runtime.

improve_prompt: The following NDA merges initial NDAs by adding more information and removing redundancy. Output only the improved NDA, placed between the tags : {s}

Table 31: Input NDA 1 and 2

Table 32: Input NDA 3

Table 33: Input NDA 4

Table 34: Merging 4 example NDAs: Execution plan (GoO)

GoO:
1. Merge the 4 NDAs into a single one 5 times; Score each attempt and keep the best 3
2. Aggregate the merge attempts into a single one 5 times; Score each aggregation attempt and keep the overall best attempt (including Step 1)
3. Improve the merged NDA 10 times; Score each and keep the best

Table 35: Merging 4 example NDAs: Step 1 (Prompt)

Merge the following 4 NDA documents

Table 36: Merging 4 example NDAs; we only present the highest scoring response fully in Tables 37-38: Step 1 (Responses)

Note that due to the shortening of responses, they appear to be equal, but small differences can be found when observing the fully generated summaries. The only small differences are reflected in the very similar scores (see Tables 39-40 for the scoring of the best response). Response (1/5):

Table 37: Merging 4 example NDAs: Step 1 (Highest Scoring Response Part 1)

NON-DISCLOSURE AGREEMENT (NDA) 1. Agreement between [Your Company Name] and [Recipient Name] on [Date]. 2. Information sharing for the purpose of [specific project or purpose]. 3. ”Confidential Information” includes all potentially commercially valuable information, specifically software development tactics, processes, and in-house research results. 4. Receiving party is obligated to protect the Confidential Information, use it solely for the disclosed purpose, and not disclose it without consent. 5. Breach penalties include injunctive relief, other remedies, and a $200,000 fee per breach. 6. The Agreement applies to the Parties and their successors and assigns. It contains all related agreements and lack of enforcement doesn’t imply waiver. 7. The Agreement is under the laws of [State]. 8. Signed by [Your Company Name] and [Recipient Name] at the above date. Effective from [Effective Date], this NDA involves [Your Company Name] (”Disclosing Party”), and [Recipient Name] (”Receiving Party”). Purpose: The Disclosing Party will disclose confidential information related to [Topic of Research] to the Receiving Party for [Purpose]. Confidential Information: Defined as all non-public reports, data, designs, and other materials provided by the Disclosing Party to the Receiving Party. Receiving Party’s Obligations: a. Use, reproduce, or distribute the confidential information only for the agreed purpose. b. Restrict access to the information to necessary parties, ensuring they abide by strict confidentiality. c. Return or destroy all confidential information upon request or at the end of the agreement. Exclusions: Information will not be classified as confidential if it is already known to the Receiving Party, publicly known, or independently developed by the Receiving Party. Non-Competition: The Receiving Party will not engage in any competing business against the Disclosing Party during the agreement and one year after its termination. 
Term and Termination: The agreement is valid for [e.g., ”two years”], unless terminated earlier with [e.g., ”30 days”] written notice. The Receiving Party’s non-disclosure and non-competition obligations persist post-termination. General Provisions: a. Governing Law: [Your State]’s laws apply. b. Amendments: Only valid if written and signed by both parties. c. Entire Agreement: This contract overrules previous related agreements. Signed as of the Effective Date by [Your Company Name] - Disclosing Party [Recipient Name] - Receiving Party. Entities Involved: Effective [Date], between [AquaBlue Innovations], established in [State], and [PineTree Solutions], a registered entity. Objective: To safeguard classified data during talks of a potential technological alliance.

Table 38: Merging 4 example NDAs: Step 1 (Highest Scoring Response Part 2)

Specification of Protected Information: Particularly: a. System designs and architectural schematics. b. Proprietary computational algorithms. Receiver’s Obligations: a. Maintain strict non-disclosure using best practices. b. Employ solely for the aforementioned aim. c. No unveiling without explicit authorization. Violation Ramifications: A charge of $280,000 for every infringement, plus possible legal proceedings. General Terms: Binding for both parties and any successors. This encapsulates the entire accord. Legal Reference: Governed as per [State]’s legal framework. Attestation: Duly signed on [Date]. Contracting Parties: Dated [Date], drawn between [AquaBlue Innovations], a [State]-based corporation, and [PineTree Solutions], a licensed organization. Aim: To protect exclusive insights amidst dialogues for a technological partnership. Categorization of Sensitive Data: Includes: a. Internal software blueprints. b. Intellectual property awaiting patents. Commitments of Recipient: a. Uphold confidentiality, ensuring data integrity. b. Utilize strictly for collaborative ventures. c. No exposure without prior consensus. Repercussions for Non-Compliance: $295,000 fine for each transgression, and the option for legal recourse. Overall Provisions: Legally enforceable for signatories and successors. Complete and sole agreement. Juridical Standpoint: Under the auspices of [State] laws. Ratification: Confirmed and endorsed on [Date].

Table 39: Merging 4 example NDAs: Step 1 (Scoring Example – Prompt)

Please score the merged NDA in terms of how much redundant information is contained, independent of the original NDAs, as well as how much information is retained from the original NDAs. A score of 10 for redundancy implies that absolutely no information is redundant, while a score of 0 implies that at least half of the information is redundant (so everything is at least mentioned twice). A score of 10 for retained information implies that all information from the original NDAs is retained, while a score of 0 implies that no information is retained. You may provide reasoning for your scoring, but the final score for redundancy should be between the tags : NON-DISCLOSURE AGREEMENT (NDA) 1. Agreement between [Your Company Name] and [Recipient Name] on [Date]. 2. Information sharing for the purpose of [specific project or purpose]. (Omitted, see Tables 37-38)

Table 40: Merging 4 example NDAs: Step 1 (Scoring Example – Responses)

Response (1/3): contains multiple redundant sections from the original NDAs. For example, the introductory sections, such as the agreement between the parties and the purpose of information sharing, are repeated multiple times. Additionally, sections related to the definition of confidential information, the recipient’s obligations, exclusions, non-competition, term and termination, and general provisions are also redundantly included. However, in terms of retained information, the merged NDA retains all the key elements from the original NDAs, including the parties involved, the objective, specification of protected information, recipient’s obligations, violation ramifications, general terms, legal reference, and attestation. Overall, while there is redundancy in the merged NDA, it successfully retains all the necessary information from the original NDAs. Response (3/3):

Table 41: Merging 4 example NDAs; Note that S1-S3 are the three best scoring merge attempts from the previous step: Step 2 (Prompt)

The following NDAs

Table 42: Merging 4 example NDAs: Step 2 (Responses)

For this run, none of the responses improve upon the previous best and thus will all be discarded by the next step. For that reason and due to their long length, we do not present them fully and exclude their scoring. Response (1/5):

Table 43: Merging 4 example NDAs: Step 3 (Prompt)

The following NDA merges initial NDAs by adding more information and removing redundancy. Output only the improved NDA, placed between the tags : NON-DISCLOSURE AGREEMENT (NDA) 1. Agreement between [Your Company Name] and [Recipient Name] on [Date]. 2. Information sharing for the purpose of [specific project or purpose]. (Omitted, see Tables 37-38)

Table 44: Merging 4 example NDAs; we only present the highest scoring response fully in Table 46: Step 3 (Responses Part 1)

Response (1/10):

Table 45: Merging 4 example NDAs; we only present the highest scoring response fully in Table 46: Step 3 (Responses Part 2)

Response (6/10):

Table 46: Merging 4 example NDAs: Step 3 (Highest Scoring Response)

NON-DISCLOSURE AGREEMENT (NDA) 1. Agreement between [Your Company Name] and [Recipient Name] on [Date] for the purpose of [specific project or purpose]. 2. ”Confidential Information” includes all potentially commercially valuable information, such as software development tactics, processes, in-house research results, system designs, architectural schematics, proprietary computational algorithms, internal software blueprints, and intellectual property awaiting patents. 3. Receiving party is obligated to protect the Confidential Information, use it solely for the disclosed purpose, and not disclose it without consent. 4. Breach penalties include injunctive relief, other remedies, and a fine per breach (e.g., 280,000 for each infringement, depending on the agreement). 5. The Agreement applies to the Parties and their successors and assigns. It contains all related agreements and lack of enforcement doesn’t imply waiver. 6. The Agreement is under the laws of [State] and is valid for a specified period (e.g., two years) unless terminated earlier with written notice (e.g., 30 days). 7. The Receiving Party agrees not to engage in any competing business against the Disclosing Party during the agreement and a specified period after its termination (e.g., one year). 8. The Agreement can only be amended if written and signed by both parties, and overrules previous related agreements. 9. The Agreement is legally enforceable and binding for signatories and successors. 10. The Agreement is governed by the laws of [State]. Signed as of the Effective Date by [Your Company Name] - Disclosing Party [Recipient Name] - Receiving Party, along with the other parties involved.

Table 47: Merging 4 example NDAs: Step 3 (Scoring Example – Prompt)

Please score the merged NDA in terms of how much redundant information is contained, independent of the original NDAs, as well as how much information is retained from the original NDAs. A score of 10 for redundancy implies that absolutely no information is redundant, while a score of 0 implies that at least half of the information is redundant (so everything is at least mentioned twice). A score of 10 for retained information implies that all information from the original NDAs is retained, while a score of 0 implies that no information is retained. You may provide reasoning for your scoring, but the final score for redundancy should be between the tags : NON-DISCLOSURE AGREEMENT (NDA) 1. Agreement between [Your Company Name] and [Recipient Name] on [Date] for the purpose of [specific project or purpose]. 2. ”Confidential Information” includes all potentially commercially valuable information, such as software development tactics, processes, in-house research results, system designs, architectural schematics, proprietary computational algorithms, internal software blueprints, and intellectual property awaiting patents. (Omitted, see Table 46)

Table 48: Merging 4 example NDAs: Step 3 (Scoring Example – Responses)

Response (1/3): retains most of the information from the original NDAs, combining relevant clauses and terms into a single comprehensive agreement. However, some redundancy is present as similar information is mentioned multiple times, such as the definition of “Confidential Information” and the obligations of the receiving party. Additionally, some specific details from the original NDAs are not included in the merged NDA, such as the exclusion criteria in Doc2 and the provisions for non-competition in Doc3 and Doc4. Nonetheless, the merged NDA captures the essence of the original NDAs and includes all key information necessary for a non-disclosure agreement.

Response (2/3): retains all the key information from the original NDAs, including the purpose of the agreement, the definition of confidential information, the obligations of the receiving party, breach penalties, applicability to parties and successors, governing law, and signature requirements. There is some redundant information, such as the inclusion of system designs, architectural schematics, proprietary computational algorithms, internal software blueprints, and intellectual property awaiting patents from both Doc3 and Doc4. However, this redundancy is minor and does not significantly impact the overall usefulness of the NDA. Overall, the merged NDA retains all important information while minimizing redundancy, making it highly effective in conveying the necessary terms and obligations of the agreement. Therefore, it receives a score of 8 for redundancy and a score of 10 for retained information.

Response (3/3): contains some redundant information, such as the definition of “Confidential Information” and the obligations of the receiving party. However, it retains all the important information from the original NDAs, including the purpose of the agreement, the specific types of confidential information, the breach penalties, the applicability to parties and successors, the governing law, and the provisions regarding non-competition, amendment, and termination. Additionally, it incorporates the additional information about the agreement’s validity period and the option for legal recourse in case of non-compliance. Therefore, the merged NDA scores a 7 for redundancy and a 10 for retained information.

Final Overall Score (Harmonic Mean of Averages): 7.78
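The final score combines the per-response scores in two stages: each criterion is averaged over the responses, and the two averages are then combined by a harmonic mean. A minimal sketch of that calculation follows. The function name is hypothetical, and the two-value harmonic mean formula 2ab/(a+b) is an assumption about how the averages are combined. The example uses only the scores stated explicitly in Responses 2/3 and 3/3; the scores of Response 1/3 are not shown in the table, so the printed value is not expected to match 7.78.

```python
from statistics import mean


def harmonic_mean_of_averages(redundancy_scores, retained_scores):
    """Average each criterion over all responses, then combine both averages."""
    avg_redundancy = mean(redundancy_scores)
    avg_retained = mean(retained_scores)
    if avg_redundancy + avg_retained == 0:
        return 0.0  # both averages are zero; avoid dividing by zero
    # Assumed combination rule: harmonic mean of two numbers.
    return 2 * avg_redundancy * avg_retained / (avg_redundancy + avg_retained)


# Only the explicitly stated scores (Responses 2/3 and 3/3) are used here.
partial_score = harmonic_mean_of_averages([8, 7], [10, 10])
print(round(partial_score, 2))
```

The harmonic mean penalizes an imbalance between the two criteria, so a merged document cannot reach a high overall score by retaining everything while being highly redundant.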

Table 49: Merging 4 example NDAs: Final Merged NDA

NON-DISCLOSURE AGREEMENT (NDA)

1. Agreement between [Your Company Name] and [Recipient Name] on [Date] for the purpose of [specific project or purpose].

2. “Confidential Information” includes all potentially commercially valuable information, such as software development tactics, processes, in-house research results, system designs, architectural schematics, proprietary computational algorithms, internal software blueprints, and intellectual property awaiting patents.

3. Receiving party is obligated to protect the Confidential Information, use it solely for the disclosed purpose, and not disclose it without consent.

4. Breach penalties include injunctive relief, other remedies, and a fine per breach (e.g., 280,000 for each infringement, depending on the agreement).

5. The Agreement applies to the Parties and their successors and assigns. It contains all related agreements and lack of enforcement doesn’t imply waiver.

6. The Agreement is under the laws of [State] and is valid for a specified period (e.g., two years) unless terminated earlier with written notice (e.g., 30 days).

7. The Receiving Party agrees not to engage in any competing business against the Disclosing Party during the agreement and a specified period after its termination (e.g., one year).

8. The Agreement can only be amended if written and signed by both parties, and overrules previous related agreements.

9. The Agreement is legally enforceable and binding for signatories and successors.

10. The Agreement is governed by the laws of [State].

Signed as of the Effective Date by [Your Company Name] - Disclosing Party [Recipient Name] - Receiving Party, along with the other parties involved.

Appendix F Evaluation - GoT Configurations

We detail the concrete operations that GoT was configured with to solve the set intersection and sorting use cases.

Listing 1 GoT configuration for the set intersection use case with 32 elements

Generate(k=1) # Split second set into two halves of 16 elements

foreach subset:

Generate(k=5) # Determine intersected subset of subset and first input set

Score(k=1) # Score locally the intersected subsets

KeepBestN(1) # Keep the best intersected subset

Aggregate(10) # Merge both intersected subsets

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

GroundTruth() # Compare to precomputed result
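To make the control flow of Listing 1 concrete, the following is a minimal, self-contained sketch that replays the same sequence of operations with stubbed model calls. It is not the framework's implementation: `llm_intersect` and `llm_merge` are hypothetical stand-ins that randomly drop elements to imitate imperfect LLM output, and `num_errors` is an assumed local scoring function (missing plus spurious elements, lower is better).

```python
import random


def llm_intersect(first, subset, rng, error_rate):
    """Hypothetical stand-in for an LLM call: may drop correct elements."""
    return [x for x in subset if x in first and rng.random() >= error_rate]


def llm_merge(left, right, rng, error_rate):
    """Hypothetical stand-in for an LLM merge: concatenates, may drop elements."""
    return [x for x in left + right if rng.random() >= error_rate]


def num_errors(candidate, first, subset):
    """Assumed local score: missing plus spurious elements (lower is better)."""
    exact = set(first) & set(subset)
    found = set(candidate)
    return len(exact - found) + len(found - exact)


def run_listing_1(first, second, seed=0, error_rate=0.2):
    rng = random.Random(seed)
    half = len(second) // 2
    subsets = [second[:half], second[half:]]  # Generate(k=1): split into halves

    best_parts = []
    for subset in subsets:  # foreach subset
        # Generate(k=5): five candidate intersections per subset
        candidates = [llm_intersect(first, subset, rng, error_rate) for _ in range(5)]
        # Score(k=1) followed by KeepBestN(1)
        best_parts.append(min(candidates, key=lambda c: num_errors(c, first, subset)))

    # Aggregate(10): ten merge attempts of the two best partial results
    merged = [llm_merge(best_parts[0], best_parts[1], rng, error_rate) for _ in range(10)]
    # Score(k=1) followed by KeepBestN(1)
    result = min(merged, key=lambda c: num_errors(c, first, second))

    solved = set(result) == (set(first) & set(second))  # GroundTruth()
    return result, solved


first = random.Random(1).sample(range(64), 32)
second = random.Random(2).sample(range(64), 32)
result, solved = run_listing_1(first, second)
print(len(result), solved)
```

Generating several candidates per step and keeping only the best one is what lets the pipeline tolerate individual faulty responses; with `error_rate=0.0` the stubs are exact and the ground-truth check always passes.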

Listing 2 GoT configuration for the set intersection use case with 64 elements

Generate(k=1) # Split second set into four parts of 16 elements

foreach subset:

Generate(k=5) # Determine intersected subset of subset and first input set

Score(k=1) # Score locally the intersected subsets

KeepBestN(1) # Keep the best intersected subset

merge step 1:

Aggregate(10) # Merge intersected subsets 1 and 2

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

merge step 2:

Aggregate(10) # Merge intersected subsets 3 and 4

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

final merge:

Aggregate(10) # Merge intermediate intersected subsets from merge step 1 and 2

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

GroundTruth() # Compare to precomputed result

Listing 3 GoT configuration for the set intersection use case with 128 elements

Generate(k=1) # Split second set into eight parts of 16 elements

foreach subset:

Generate(k=5) # Determine intersected subset of subset and first input set

Score(k=1) # Score locally the intersected subsets

KeepBestN(1) # Keep the best intersected subset

merge step 1:

Aggregate(5) # Merge intersected subsets 1 and 2

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

merge step 2:

Aggregate(5) # Merge intersected subsets 3 and 4

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

merge step 3:

Aggregate(5) # Merge intersected subsets 5 and 6

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

merge step 4:

Aggregate(5) # Merge intersected subsets 7 and 8

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

Listing 4 GoT configuration for the set intersection use case with 128 elements (cont.)

merge step 5:

Aggregate(5) # Merge intermediate intersected subsets from merge step 1 and 2

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

merge step 6:

Aggregate(5) # Merge intermediate intersected subsets from merge step 3 and 4

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

final merge:

Aggregate(5) # Merge intermediate intersected subsets from merge step 5 and 6

Score(k=1) # Score locally the intersected result sets

KeepBestN(1) # Keep the best result

GroundTruth() # Compare to precomputed result
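The merge steps in Listings 2 to 4 follow a balanced binary tree: neighboring subsets are merged pairwise, then the intermediate results are merged pairwise, until one result remains. The sketch below generates that schedule for any power-of-two number of parts. The function name and the label strings are hypothetical and chosen only to mirror the step names used in the listings.

```python
def merge_schedule(num_parts):
    """Return pairwise merge steps as (step_label, left_input, right_input) tuples."""
    if num_parts < 2 or num_parts & (num_parts - 1):
        raise ValueError("num_parts must be a power of two and at least 2")

    current = [f"subset {i}" for i in range(1, num_parts + 1)]
    steps = []
    while len(current) > 1:
        is_final = len(current) == 2  # only one merge is left at the root
        next_level = []
        for left, right in zip(current[0::2], current[1::2]):
            label = "final merge" if is_final else f"merge step {len(steps) + 1}"
            steps.append((label, left, right))
            next_level.append(label)
        current = next_level
    return steps


for label, left, right in merge_schedule(8):
    print(f"{label}: {left} + {right}")
```

For eight parts this yields seven merges (merge steps 1 to 6 plus the final merge), matching the structure of the 128-element configuration; in general, n parts require n - 1 merges.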

Listing 5 GoT configuration for the sorting use case with 32 elements

Generate(k=1) # Split list into two halves of 16 elements

foreach list part:

Generate(k=5) # Sort list part

Score(k=1) # Score partially sorted list

KeepBestN(1) # Keep the best partially sorted list

Aggregate(10) # Merge both partially sorted lists

Score(k=1) # Score locally the sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=10) # Try to improve solution

Score(k=1) # Score locally the sorted result lists

KeepBestN(1) # Keep the best result

GroundTruth() # Compare to precomputed result
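Compared with the set intersection configurations, Listing 5 adds a refinement step after the merge. The sketch below replays the listing with stubbed model calls to show where that step sits. All helper names are hypothetical: `llm_sort` and `llm_improve` imitate imperfect LLM output by randomly dropping elements, and `sort_errors` is an assumed scoring function (adjacent out-of-order pairs plus element-count mismatches), not necessarily the one used in the evaluation.

```python
import random
from collections import Counter


def sort_errors(candidate, original):
    """Assumed local score: out-of-order neighbors plus element-count mismatches."""
    inversions = sum(1 for a, b in zip(candidate, candidate[1:]) if a > b)
    want, have = Counter(original), Counter(candidate)
    mismatches = sum(abs(want[v] - have[v]) for v in set(want) | set(have))
    return inversions + mismatches


def llm_sort(values, rng, error_rate):
    """Hypothetical stand-in for an LLM sort or merge: may drop elements."""
    return [v for v in sorted(values) if rng.random() >= error_rate]


def llm_improve(candidate, original, rng, error_rate):
    """Hypothetical refinement call: restores missing elements, may drop others."""
    missing = list((Counter(original) - Counter(candidate)).elements())
    return [v for v in sorted(candidate + missing) if rng.random() >= error_rate]


def best_of(candidates, original):
    """Score(k=1) followed by KeepBestN(1): keep the candidate with fewest errors."""
    return min(candidates, key=lambda c: sort_errors(c, original))


def run_listing_5(values, seed=0, error_rate=0.05):
    rng = random.Random(seed)
    half = len(values) // 2
    parts = [values[:half], values[half:]]  # Generate(k=1): split into halves

    # Generate(k=5), Score, KeepBestN for each list part
    sorted_parts = [
        best_of([llm_sort(p, rng, error_rate) for _ in range(5)], p) for p in parts
    ]
    # Aggregate(10), Score, KeepBestN
    both = sorted_parts[0] + sorted_parts[1]
    merged = best_of([llm_sort(both, rng, error_rate) for _ in range(10)], values)
    # Generate(k=10) to improve the solution, Score, KeepBestN
    improved = best_of(
        [llm_improve(merged, values, rng, error_rate) for _ in range(10)], values
    )
    return improved, improved == sorted(values)  # GroundTruth()


data = [random.Random(3).randrange(10) for _ in range(32)]
final, correct = run_listing_5(data)
print(sort_errors(final, data), correct)
```

The refinement step matters because errors introduced while sorting the parts or merging them (here, dropped elements) can still be repaired before the ground-truth comparison.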

Listing 6 GoT configuration for the sorting use case with 64 elements

Generate(k=1) # Split list into four parts of 16 elements

foreach list part:

Generate(k=5) # Sort list part

Score(k=1) # Score partially sorted list

KeepBestN(1) # Keep the best partially sorted list

merge step 1:

Aggregate(10) # Merge partially sorted lists 1 and 2

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=5) # Try to improve the partial solution

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

merge step 2:

Aggregate(10) # Merge partially sorted lists 3 and 4

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=5) # Try to improve the partial solution

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

final merge:

Aggregate(10) # Merge partially sorted lists from merge step 1 and 2

Score(k=1) # Score locally the sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=10) # Try to improve solution

Score(k=1) # Score locally the sorted result lists

KeepBestN(1) # Keep the best result

GroundTruth() # Compare to precomputed result

Listing 7 GoT configuration for the sorting use case with 128 elements

Generate(k=1) # Split list into eight parts of 16 elements

foreach list part:

Generate(k=5) # Sort list part

Score(k=1) # Score partially sorted list

KeepBestN(1) # Keep the best partially sorted list

merge step 1:

Aggregate(10) # Merge partially sorted lists 1 and 2

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=5) # Try to improve the partial solution

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

merge step 2:

Aggregate(10) # Merge partially sorted lists 3 and 4

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=5) # Try to improve the partial solution

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

merge step 3:

Aggregate(10) # Merge partially sorted lists 5 and 6

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=5) # Try to improve the partial solution

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

merge step 4:

Aggregate(10) # Merge partially sorted lists 7 and 8

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=5) # Try to improve the partial solution

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

merge step 5:

Aggregate(10) # Merge partially sorted lists from merge step 1 and 2

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=5) # Try to improve the partial solution

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

merge step 6:

Aggregate(10) # Merge partially sorted lists from merge step 3 and 4

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=5) # Try to improve the partial solution

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

final merge:

Aggregate(10) # Merge partially sorted lists from merge step 5 and 6

Score(k=1) # Score locally the partially sorted result lists

KeepBestN(1) # Keep the best result

Generate(k=10) # Try to improve solution

Score(k=1) # Score locally the sorted result lists

KeepBestN(1) # Keep the best result

GroundTruth() # Compare to precomputed result
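The sorting configurations in Listings 5 to 7 differ only in the number of 16-element parts, so the number of candidate thoughts each one requests can be tallied directly from the listings. The sketch below does so under the assumption that the argument of `Generate` and `Aggregate` is the number of candidates requested at that step; the function name and the returned keys are hypothetical, and the initial split (`Generate(k=1)`) is not counted.

```python
def sorting_candidate_budget(num_parts, sort_k=5, merge_k=10, refine_k=5, final_refine_k=10):
    """Count candidates requested by a sorting configuration with num_parts parts."""
    if num_parts < 2 or num_parts & (num_parts - 1):
        raise ValueError("num_parts must be a power of two and at least 2")

    merges = num_parts - 1            # a binary merge tree has n - 1 merges
    intermediate_merges = merges - 1  # every merge except the final one
    return {
        "sort": num_parts * sort_k,                                  # Generate(k=5) per part
        "aggregate": merges * merge_k,                               # Aggregate(10) per merge
        "refine": intermediate_merges * refine_k + final_refine_k,   # Generate(k=5) / Generate(k=10)
    }


for elements in (32, 64, 128):
    budget = sorting_candidate_budget(elements // 16)
    print(elements, budget, sum(budget.values()))
```

Under this assumption the tally grows linearly with the number of parts, since each additional part adds one sort step and one merge with its refinement.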

Footnotes

-

Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A. N.; Kaiser, Ł.; and Polosukhin, I. 2017. Attention is All you Need. In Advances in Neural Information Processing Systems (NIPS ’17), volume 30. Curran Associates.

-

Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; and Sutskever, I. 2019. Language Models are Unsupervised Multitask Learners. https://openai.com/research/better-language-models. Accessed: 2023-09-06.

-

Radford, A.; Narasimhan, K.; Salimans, T.; and Sutskever, I. 2018. Improving Language Understanding by Generative Pre-Training. https://openai.com/research/language-unsupervised. Accessed: 2023-09-06.

-

Bubeck, S.; Chandrasekaran, V.; Eldan, R.; Gehrke, J.; Horvitz, E.; Kamar, E.; Lee, P.; Lee, Y. T.; Li, Y.; Lundberg, S.; Nori, H.; Palangi, H.; Ribeiro, M. T.; and Zhang, Y. 2023. Sparks of Artificial General Intelligence: Early experiments with GPT-4. arXiv:2303.12712.

-

Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J. D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; Agarwal, S.; Herbert-Voss, A.; Krueger, G.; Henighan, T.; Child, R.; Ramesh, A.; Ziegler, D.; Wu, J.; Winter, C.; Hesse, C.; Chen, M.; Sigler, E.; Litwin, M.; Gray, S.; Chess, B.; Clark, J.; Berner, C.; McCandlish, S.; Radford, A.; Sutskever, I.; and Amodei, D. 2020. Language Models are Few-Shot Learners. In Advances in Neural Information Processing Systems (NeurIPS ’20), volume 33, 1877–1901. Curran Associates.

-

Chowdhery, A.; Narang, S.; Devlin, J.; Bosma, M.; Mishra, G.; Roberts, A.; Barham, P.; Chung, H. W.; Sutton, C.; Gehrmann, S.; Schuh, P.; Shi, K.; Tsvyashchenko, S.; Maynez, J.; Rao, A.; Barnes, P.; Tay, Y.; Shazeer, N.; Prabhakaran, V.; Reif, E.; Du, N.; Hutchinson, B.; Pope, R.; Bradbury, J.; Austin, J.; Isard, M.; Gur-Ari, G.; Yin, P.; Duke, T.; Levskaya, A.; Ghemawat, S.; Dev, S.; Michalewski, H.; Garcia, X.; Misra, V.; Robinson, K.; Fedus, L.; Zhou, D.; Ippolito, D.; Luan, D.; Lim, H.; Zoph, B.; Spiridonov, A.; Sepassi, R.; Dohan, D.; Agrawal, S.; Omernick, M.; Dai, A. M.; Pillai, T. S.; Pellat, M.; Lewkowycz, A.; Moreira, E.; Child, R.; Polozov, O.; Lee, K.; Zhou, Z.; Wang, X.; Saeta, B.; Diaz, M.; Firat, O.; Catasta, M.; Wei, J.; Meier-Hellstern, K.; Eck, D.; Dean, J.; Petrov, S.; and Fiedel, N. 2022. PaLM: Scaling Language Modeling with Pathways. arXiv:2204.02311.

-

Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.-A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; Rodriguez, A.; Joulin, A.; Grave, E.; and Lample, G. 2023a. LLaMA: Open and Efficient Foundation Language Models. arXiv:2302.13971.

-

Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Chi, E.; Le, Q.; and Zhou, D. 2022. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models. arXiv:2201.11903.

-

Wang, X.; Wei, J.; Schuurmans, D.; Le, Q. V.; Chi, E. H.; Narang, S.; Chowdhery, A.; and Zhou, D. 2023b. Self-Consistency Improves Chain of Thought Reasoning in Language Models. In Proceedings of the Eleventh International Conference on Learning Representations, ICLR ’23.

-

Long, J. 2023. Large Language Model Guided Tree-of-Thought. arXiv:2305.08291.

-

Yao, S.; Yu, D.; Zhao, J.; Shafran, I.; Griffiths, T. L.; Cao, Y.; and Narasimhan, K. R. 2023a. Tree of Thoughts: Deliberate Problem Solving with Large Language Models. In Advances in Neural Information Processing Systems (NeurIPS ’23), volume 36. Curran Associates.

-