Yaron Lipman 1,2 Ricky T. Q. Chen 1 Heli Ben-Hamu 2 Maximilian Nickel 1 Matt Le 1

1 Meta AI (FAIR) 2 Weizmann Institute of Science

Abstract

We introduce a new paradigm for generative modeling built on Continuous Normalizing Flows (CNFs), allowing us to train CNFs at unprecedented scale. Specifically, we present the notion of Flow Matching (FM), a simulation-free approach for training CNFs based on regressing vector fields of fixed conditional probability paths. Flow Matching is compatible with a general family of Gaussian probability paths for transforming between noise and data samples—which subsumes existing diffusion paths as specific instances. Interestingly, we find that employing FM with diffusion paths results in a more robust and stable alternative for training diffusion models. Furthermore, Flow Matching opens the door to training CNFs with other, non-diffusion probability paths. An instance of particular interest is using Optimal Transport (OT) displacement interpolation to define the conditional probability paths. These paths are more efficient than diffusion paths, provide faster training and sampling, and result in better generalization. Training CNFs using Flow Matching on ImageNet leads to consistently better performance than alternative diffusion-based methods in terms of both likelihood and sample quality, and allows fast and reliable sample generation using off-the-shelf numerical ODE solvers.

1 Introduction

Deep generative models are a class of deep learning algorithms aimed at estimating and sampling from an unknown data distribution. The recent influx of amazing advances in generative modeling, e.g., for image generation 1 2, is mostly facilitated by the scalable and relatively stable training of diffusion-based models 3 4. However, the restriction to simple diffusion processes leads to a rather confined space of sampling probability paths, resulting in very long training times and the need to adopt specialized methods (e.g., 5 6) for efficient sampling.

In this work we consider the general and deterministic framework of Continuous Normalizing Flows (CNFs; 7). CNFs are capable of modeling arbitrary probability paths

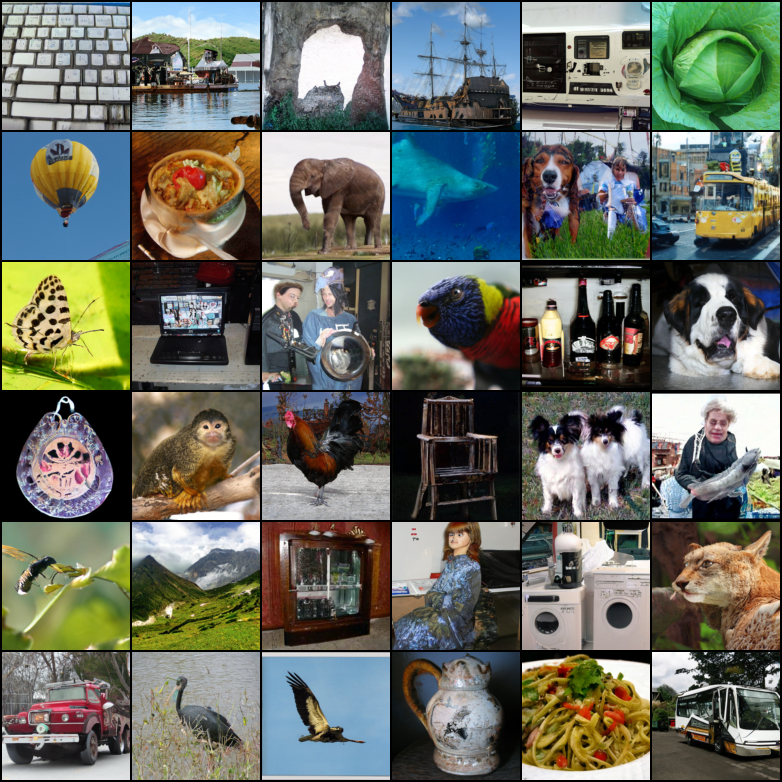

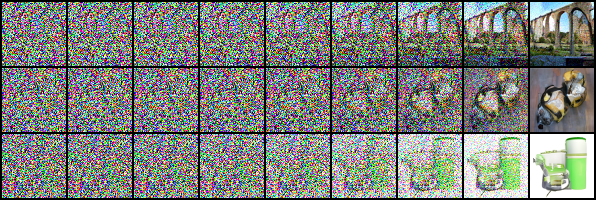

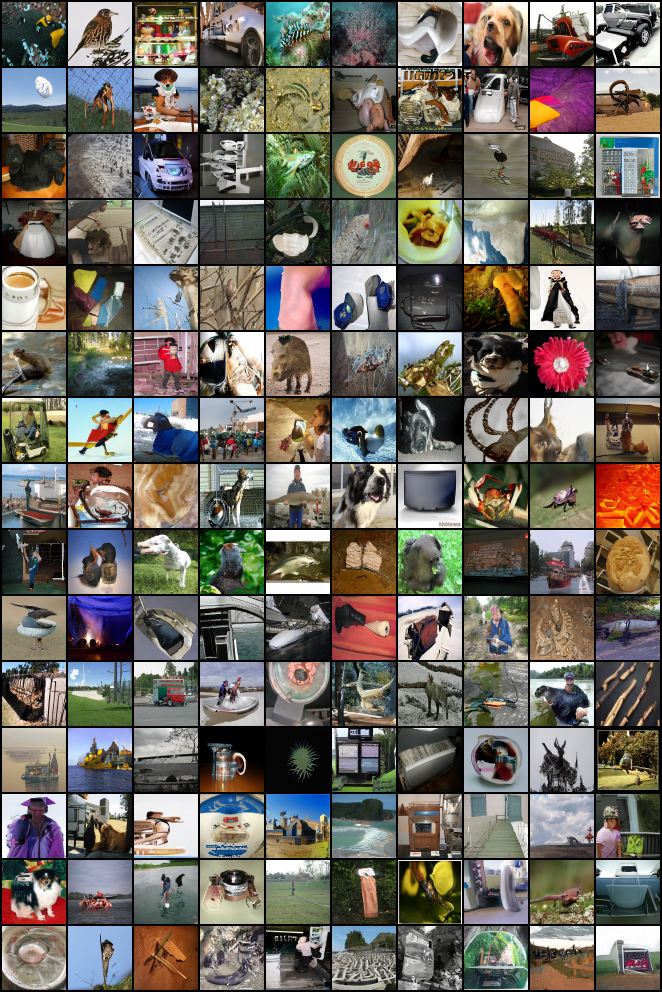

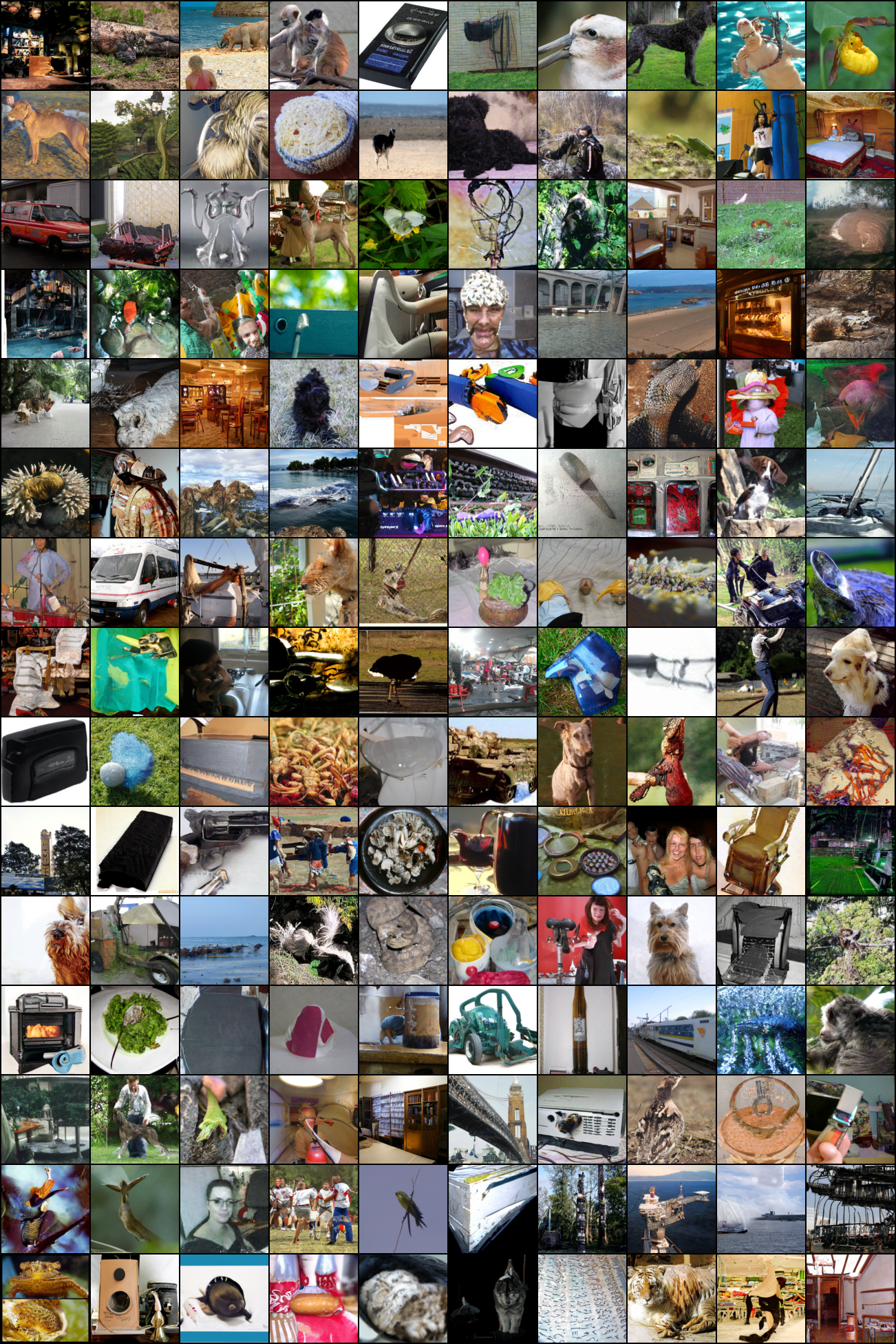

Figure 1: Unconditional ImageNet-128 samples of a CNF trained using Flow Matching with Optimal Transport probability paths.

and are in particular known to encompass the probability paths modeled by diffusion processes 8. However, aside from diffusion models, which can be trained efficiently via, e.g., denoising score matching 9, no scalable CNF training algorithms are known. Indeed, maximum likelihood training (e.g., 10) requires expensive numerical ODE simulations, while existing simulation-free methods either involve intractable integrals 11 or biased gradients 12.

The goal of this work is to propose Flow Matching (FM), an efficient simulation-free approach to training CNF models, allowing the adoption of general probability paths to supervise CNF training. Importantly, FM breaks the barriers for scalable CNF training beyond diffusion, and sidesteps the need to reason about diffusion processes to directly work with probability paths.

In particular, we propose the Flow Matching objective (Section 3), a simple and intuitive training objective to regress onto a target vector field that generates a desired probability path. We first show that we can construct such target vector fields through per-example (i.e., conditional) formulations. Then, inspired by denoising score matching, we show that a per-example training objective, termed Conditional Flow Matching (CFM), provides equivalent gradients and does not require explicit knowledge of the intractable target vector field. Furthermore, we discuss a general family of per-example probability paths (Section 4) that can be used for Flow Matching, which subsumes existing diffusion paths as special instances. Even on diffusion paths, we find that using FM provides more robust and stable training, and achieves superior performance compared to score matching. Furthermore, this family of probability paths also includes a particularly interesting case: the vector field that corresponds to an Optimal Transport (OT) displacement interpolant 13. We find that conditional OT paths are simpler than diffusion paths, forming straight line trajectories whereas diffusion paths result in curved paths. These properties seem to empirically translate to faster training, faster generation, and better performance.

We empirically validate Flow Matching and the construction via Optimal Transport paths on ImageNet, a large and highly diverse image dataset. We find that we can easily train models to achieve favorable performance in both likelihood estimation and sample quality amongst competing diffusion-based methods. Furthermore, we find that our models produce better trade-offs between computational cost and sample quality compared to prior methods. Figure 1 depicts selected unconditional ImageNet 128×128 samples from our model.

2 Preliminaries: Continuous Normalizing Flows

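Let $\mathbb{R}^d$ denote the data space with data points $x = (x^1, \ldots, x^d) \in \mathbb{R}^d$. Two important objects we use in this paper are: the probability density path $p : [0,1] \times \mathbb{R}^d \to \mathbb{R}_{>0}$, which is a time-dependent probability density function, i.e., $\int p_t(x)\,dx = 1$, and a time-dependent vector field, $v : [0,1] \times \mathbb{R}^d \to \mathbb{R}^d$. A vector field $v_t$ can be used to construct a time-dependent diffeomorphic map, called a flow, $\phi : [0,1] \times \mathbb{R}^d \to \mathbb{R}^d$, defined via the ordinary differential equation (ODE):

$$\frac{d}{dt}\phi_t(x) = v_t(\phi_t(x)), \tag{1}$$

$$\phi_0(x) = x. \tag{2}$$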

Previously, 7 suggested modeling the vector field $v_t$ with a neural network, $v_t(x;\theta)$, where $\theta \in \mathbb{R}^p$ are its learnable parameters, which in turn leads to a deep parametric model of the flow $\phi_t$, called a Continuous Normalizing Flow (CNF). A CNF is used to reshape a simple prior density $p_0$ (e.g., pure noise) to a more complicated one, $p_1$, via the push-forward equation

$$p_t = [\phi_t]_* p_0, \tag{3}$$

where the push-forward (or change of variables) operator $*$ is defined by

$$[\phi_t]_* p_0(x) = p_0\big(\phi_t^{-1}(x)\big)\,\det\left[\frac{\partial \phi_t^{-1}}{\partial x}(x)\right]. \tag{4}$$

A vector field $v_t$ is said to generate a probability density path $p_t$ if its flow $\phi_t$ satisfies equation 3. One practical way to test if a vector field generates a probability path is using the continuity equation, which is a key component in our proofs, see Appendix B. We recap more information on CNFs, in particular how to compute the probability $p_1(x)$ at an arbitrary point $x \in \mathbb{R}^d$, in Appendix C.
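To make the flow ODE of equation 1 concrete, the following is a minimal sketch, not the paper's implementation. It assumes a hand-written one-dimensional vector field $v_t(x) = x$ in place of a neural network, for which the flow is known in closed form, $\phi_t(x) = x e^{t}$. The names `integrate_flow` and `linear_field` are hypothetical.

```python
import math

def integrate_flow(v, x0, n_steps=1000, t0=0.0, t1=1.0):
    """Approximate phi_{t1}(x0) with explicit Euler steps on d/dt phi = v(t, phi)."""
    x = x0
    dt = (t1 - t0) / n_steps
    for k in range(n_steps):
        t = t0 + k * dt
        x = x + dt * v(t, x)  # one Euler step along the vector field
    return x

def linear_field(t, x):
    """Toy vector field v_t(x) = x (an assumption, chosen for its closed-form flow)."""
    return x

approx = integrate_flow(linear_field, 2.0)
exact = 2.0 * math.e  # phi_1(2) = 2 * exp(1) for this toy field
print(approx, exact)
```

In a CNF the same loop would call the trained network $v_t(x;\theta)$, usually with a higher-order or adaptive solver instead of Euler.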

3 Flow Matching

Let $x_1$ denote a random variable distributed according to some unknown data distribution $q(x_1)$. We assume we only have access to data samples from $q(x_1)$ but have no access to the density function itself. Furthermore, we let $p_t$ be a probability path such that $p_0 = p$ is a simple distribution, e.g., the standard normal distribution $p(x) = \mathcal{N}(x|0, I)$, and let $p_1$ be approximately equal in distribution to $q$. We will later discuss how to construct such a path. The Flow Matching objective is then designed to match this target probability path, which will allow us to flow from $p_0$ to $p_1$.

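Given a target probability density path $p_t(x)$ and a corresponding vector field $u_t(x)$, which generates $p_t(x)$, we define the Flow Matching (FM) objective as

$$\mathcal{L}_{\text{FM}}(\theta) = \mathbb{E}_{t,\,p_t(x)} \big\| v_t(x) - u_t(x) \big\|^2, \tag{5}$$

where $\theta$ denotes the learnable parameters of the CNF vector field $v_t$ (as defined in Section 2), $t \sim \mathcal{U}[0,1]$ (uniform distribution), and $x \sim p_t(x)$. Simply put, the FM loss regresses the vector field $u_t$ with a neural network $v_t$. Upon reaching zero loss, the learned CNF model will generate $p_t(x)$.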

Flow Matching is a simple and attractive objective, but naïvely on its own, it is intractable to use in practice since we have no prior knowledge for what an appropriate $p_t$ and $u_t$ are. There are many choices of probability paths that can satisfy $p_1(x) \approx q(x)$, and more importantly, we generally don't have access to a closed form $u_t$ that generates the desired $p_t$. In this section, we show that we can construct both $p_t$ and $u_t$ using probability paths and vector fields that are only defined per sample, and an appropriate method of aggregation provides the desired $p_t$ and $u_t$. Furthermore, this construction allows us to create a much more tractable objective for Flow Matching.

3.1 Constructing $p_t, u_t$ from conditional probability paths and vector fields

A simple way to construct a target probability path is via a mixture of simpler probability paths: Given a particular data sample $x_1$ we denote by $p_t(x|x_1)$ a conditional probability path such that it satisfies $p_0(x|x_1) = p(x)$ at time $t = 0$, and we design $p_1(x|x_1)$ at $t = 1$ to be a distribution concentrated around $x = x_1$, e.g., $p_1(x|x_1) = \mathcal{N}(x|x_1, \sigma^2 I)$, a normal distribution with mean $x_1$ and a sufficiently small standard deviation $\sigma > 0$. Marginalizing the conditional probability paths over $q(x_1)$ gives rise to the marginal probability path

$$p_t(x) = \int p_t(x|x_1)\, q(x_1)\, dx_1, \tag{6}$$

where in particular at time $t = 1$, the marginal probability $p_1$ is a mixture distribution that closely approximates the data distribution $q$,

$$p_1(x) = \int p_1(x|x_1)\, q(x_1)\, dx_1 \approx q(x). \tag{7}$$

Interestingly, we can also define a marginal vector field, by “marginalizing” over the conditional vector fields in the following sense (we assume $p_t(x) > 0$ for all $t$ and $x$):

$$u_t(x) = \int u_t(x|x_1)\, \frac{p_t(x|x_1)\, q(x_1)}{p_t(x)}\, dx_1, \tag{8}$$

where $u_t(\cdot|x_1) : \mathbb{R}^d \to \mathbb{R}^d$ is a conditional vector field that generates $p_t(\cdot|x_1)$. It may not seem apparent, but this way of aggregating the conditional vector fields actually results in the correct vector field for modeling the marginal probability path.

Our first key observation is this:

The marginal vector field (equation 8) generates the marginal probability path (equation 6).

This provides a surprising connection between the conditional VFs (those that generate conditional probability paths) and the marginal VF (those that generate the marginal probability path). This connection allows us to break down the unknown and intractable marginal VF into simpler conditional VFs, which are much simpler to define as these only depend on a single data sample. We formalize this in the following theorem.

Theorem 1.

Given vector fields $u_t(x|x_1)$ that generate conditional probability paths $p_t(x|x_1)$, for any distribution $q(x_1)$, the marginal vector field $u_t$ in equation 8 generates the marginal probability path $p_t$ in equation 6, i.e., $u_t$ and $p_t$ satisfy the continuity equation (equation 26).

The full proofs for our theorems are all provided in Appendix A. Theorem 1 can also be derived from the Diffusion Mixture Representation Theorem in 14 that provides a formula for the marginal drift and diffusion coefficients in diffusion SDEs.
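The aggregation in equations 6 and 8 can be illustrated on a toy problem. The sketch below is an illustration under stated assumptions, not the paper's code: $q$ is taken to be a discrete distribution on two one-dimensional points, and the conditional path and vector field are borrowed from the linear-in-time Gaussian path of Section 4.1 (equations 20 and 21). The value of `SIGMA_MIN` and all function names are hypothetical.

```python
import math

SIGMA_MIN = 0.1  # assumed value, chosen only for this illustration

def gaussian_pdf(x, mean, std):
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

def cond_path(t, x, x1):
    """Conditional density p_t(x | x1) with mean t*x1 and std 1 - (1 - sigma_min)*t."""
    return gaussian_pdf(x, t * x1, 1 - (1 - SIGMA_MIN) * t)

def cond_field(t, x, x1):
    """Conditional vector field u_t(x | x1) of equation 21."""
    return (x1 - (1 - SIGMA_MIN) * x) / (1 - (1 - SIGMA_MIN) * t)

def marginal_field(t, x, data, weights):
    """Equation 8 for a discrete q: posterior-weighted average of conditional fields."""
    dens = [w * cond_path(t, x, x1) for x1, w in zip(data, weights)]
    p_t = sum(dens)  # equation 6 for a discrete q
    return sum(cond_field(t, x, x1) * d for x1, d in zip(data, dens)) / p_t

data, weights = [-2.0, 2.0], [0.5, 0.5]
u_mid = marginal_field(0.5, 0.0, data, weights)    # symmetric point: pulls cancel
u_right = marginal_field(0.9, 1.8, data, weights)  # late time, near x1 = 2
print(u_mid, u_right)
```

At the symmetric point the two conditional fields cancel, while late in time and close to one data point the marginal field is dominated by that point's conditional field. For a continuous $q$ the sums become the intractable integrals that motivate Section 3.2.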

3.2 Conditional Flow Matching

Unfortunately, due to the intractable integrals in the definitions of the marginal probability path and VF (equations 6 and 8), it is still intractable to compute $u_t$, and consequently, intractable to naïvely compute an unbiased estimator of the original Flow Matching objective. Instead, we propose a simpler objective, which surprisingly will result in the same optima as the original objective. Specifically, we consider the Conditional Flow Matching (CFM) objective,

$$\mathcal{L}_{\text{CFM}}(\theta) = \mathbb{E}_{t,\,q(x_1),\,p_t(x|x_1)} \big\| v_t(x) - u_t(x|x_1) \big\|^2, \tag{9}$$

where $t \sim \mathcal{U}[0,1]$, $x_1 \sim q(x_1)$, and now $x \sim p_t(x|x_1)$. Unlike the FM objective, the CFM objective allows us to easily sample unbiased estimates as long as we can efficiently sample from $p_t(x|x_1)$ and compute $u_t(x|x_1)$, both of which can be easily done as they are defined on a per-sample basis.

Our second key observation is therefore:

The FM (equation 5) and CFM (equation 9) objectives have identical gradients w.r.t. $\theta$.

That is, optimizing the CFM objective is equivalent (in expectation) to optimizing the FM objective. Consequently, this allows us to train a CNF to generate the marginal probability path $p_t$, which in particular approximates the unknown data distribution $q$ at $t = 1$, without ever needing access to either the marginal probability path or the marginal vector field. We simply need to design suitable conditional probability paths and vector fields. We formalize this property in the following theorem.

Theorem 2.

Assuming that $p_t(x) > 0$ for all $x \in \mathbb{R}^d$ and $t \in [0,1]$, then, up to a constant independent of $\theta$, $\mathcal{L}_{\text{CFM}}$ and $\mathcal{L}_{\text{FM}}$ are equal. Hence, $\nabla_\theta \mathcal{L}_{\text{FM}}(\theta) = \nabla_\theta \mathcal{L}_{\text{CFM}}(\theta)$.

4 Conditional Probability Paths and Vector Fields

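The Conditional Flow Matching objective works with any choice of conditional probability path and conditional vector fields. In this section, we discuss the construction of $p_t(x|x_1)$ and $u_t(x|x_1)$ for a general family of Gaussian conditional probability paths. Namely, we consider conditional probability paths of the form

$$p_t(x|x_1) = \mathcal{N}\big(x \,\big|\, \mu_t(x_1),\, \sigma_t(x_1)^2 I\big), \tag{10}$$

where $\mu : [0,1] \times \mathbb{R}^d \to \mathbb{R}^d$ is the time-dependent mean of the Gaussian distribution, while $\sigma : [0,1] \times \mathbb{R}^d \to \mathbb{R}_{>0}$ describes a time-dependent scalar standard deviation (std). We set $\mu_0(x_1) = 0$ and $\sigma_0(x_1) = 1$, so that all conditional probability paths converge to the same standard Gaussian noise distribution at $t = 0$, $p(x) = \mathcal{N}(x|0, I)$. We then set $\mu_1(x_1) = x_1$ and $\sigma_1(x_1) = \sigma_{\min}$, which is set sufficiently small so that $p_1(x|x_1)$ is a concentrated Gaussian distribution centered at $x_1$.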

There is an infinite number of vector fields that generate any particular probability path (e.g., by adding a divergence-free component to the continuity equation, see equation 26), but the vast majority of these are due to the presence of components that leave the underlying distribution invariant (for instance, rotational components when the distribution is rotation-invariant), leading to unnecessary extra compute. We decide to use the simplest vector field corresponding to a canonical transformation for Gaussian distributions. Specifically, consider the flow (conditioned on $x_1$)

$$\psi_t(x) = \sigma_t(x_1)\, x + \mu_t(x_1). \tag{11}$$

When $x$ is distributed as a standard Gaussian, $\psi_t(x)$ is the affine transformation that maps to a normally-distributed random variable with mean $\mu_t(x_1)$ and std $\sigma_t(x_1)$. That is to say, according to equation 4, $\psi_t$ pushes the noise distribution $p_0(x|x_1) = p(x)$ to $p_t(x|x_1)$, i.e.,

$$[\psi_t]_* p(x) = p_t(x|x_1). \tag{12}$$

This flow then provides a vector field that generates the conditional probability path:

$$\frac{d}{dt}\psi_t(x) = u_t(\psi_t(x)|x_1). \tag{13}$$

Reparameterizing $p_t(x|x_1)$ in terms of just $x_0$ and plugging equation 13 in the CFM loss we get

$$\mathcal{L}_{\text{CFM}}(\theta) = \mathbb{E}_{t,\,q(x_1),\,p(x_0)} \Big\| v_t(\psi_t(x_0)) - \frac{d}{dt}\psi_t(x_0) \Big\|^2. \tag{14}$$

Since $\psi_t$ is a simple (invertible) affine map we can use equation 13 to solve for $u_t$ in a closed form. Let $f'$ denote the derivative with respect to time, i.e., $f' = \frac{d}{dt} f$, for a time-dependent function $f$.

Theorem 3.

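Let $p_t(x|x_1)$ be a Gaussian probability path as in equation 10, and $\psi_t$ its corresponding flow map as in equation 11. Then, the unique vector field that defines $\psi_t$ has the form:

$$u_t(x|x_1) = \frac{\sigma_t'(x_1)}{\sigma_t(x_1)}\big(x - \mu_t(x_1)\big) + \mu_t'(x_1). \tag{15}$$

Consequently, $u_t(x|x_1)$ generates the Gaussian path $p_t(x|x_1)$.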
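Theorem 3 can be sanity-checked numerically. The sketch below assumes a hypothetical one-dimensional schedule, $\mu_t(x_1) = t^2 x_1$ and $\sigma_t = 1 - 0.9t$, chosen only so that the derivatives are easy to write by hand. It compares the closed-form vector field of equation 15, evaluated along the flow $\psi_t(x_0) = \sigma_t x_0 + \mu_t(x_1)$, with a finite-difference estimate of $\frac{d}{dt}\psi_t(x_0)$, as equation 13 requires.

```python
def mu(t, x1):      # assumed mean schedule, not one used in the paper
    return t * t * x1

def dmu(t, x1):     # time derivative of mu
    return 2 * t * x1

def sigma(t):       # assumed std schedule with sigma_0 = 1, sigma_1 = 0.1
    return 1.0 - 0.9 * t

def dsigma(t):      # time derivative of sigma
    return -0.9

def psi(t, x0, x1):
    """Conditional flow of equation 11."""
    return sigma(t) * x0 + mu(t, x1)

def u(t, x, x1):
    """Closed-form conditional vector field of equation 15."""
    return dsigma(t) / sigma(t) * (x - mu(t, x1)) + dmu(t, x1)

t, x0, x1, h = 0.3, 0.7, -1.5, 1e-6
finite_diff = (psi(t + h, x0, x1) - psi(t - h, x0, x1)) / (2 * h)
closed_form = u(t, psi(t, x0, x1), x1)
print(finite_diff, closed_form)
```

The two values agree up to finite-difference error, which is what equation 13 states for this choice of schedule.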

4.1 Special instances of Gaussian conditional probability paths

Our formulation is fully general for arbitrary functions $\mu_t(x_1)$ and $\sigma_t(x_1)$, and we can set them to any differentiable function satisfying the desired boundary conditions. We first discuss the special cases that recover probability paths corresponding to previously-used diffusion processes. Since we directly work with probability paths, we can simply depart from reasoning about diffusion processes altogether. Therefore, in the second example below, we directly formulate a probability path based on the Wasserstein-2 optimal transport solution as an interesting instance.

Example I: Diffusion conditional VFs.

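Diffusion models start with data points and gradually add noise until it approximates pure noise. These can be formulated as stochastic processes, which have strict requirements in order to obtain closed form representation at arbitrary times $t$, resulting in Gaussian conditional probability paths $p_t(x|x_1)$ with specific choices of mean $\mu_t(x_1)$ and std $\sigma_t(x_1)$ 15 3 4. For example, the reversed (noise $\to$ data) Variance Exploding (VE) path has the form

$$p_t(x) = \mathcal{N}\big(x \,\big|\, x_1,\, \sigma_{1-t}^2 I\big), \tag{16}$$

where $\sigma_t$ is an increasing function, $\sigma_0 = 0$, and $\sigma_1 \gg 1$. Next, equation 16 provides the choices of $\mu_t(x_1) = x_1$ and $\sigma_t(x_1) = \sigma_{1-t}$. Plugging these into equation 15 of Theorem 3 we get

$$u_t(x|x_1) = -\frac{\sigma_{1-t}'}{\sigma_{1-t}}\,(x - x_1). \tag{17}$$

The reversed (noise $\to$ data) Variance Preserving (VP) diffusion path has the form

$$p_t(x|x_1) = \mathcal{N}\big(x \,\big|\, \alpha_{1-t}\, x_1,\, (1 - \alpha_{1-t}^2) I\big), \quad \text{where } \alpha_t = e^{-\frac{1}{2}T(t)},\; T(t) = \int_0^t \beta(s)\, ds, \tag{18}$$

and $\beta$ is the noise scale function. Equation 18 provides the choices of $\mu_t(x_1) = \alpha_{1-t}\, x_1$ and $\sigma_t(x_1) = \sqrt{1 - \alpha_{1-t}^2}$. Plugging these into equation 15 of Theorem 3 we get

$$u_t(x|x_1) = \frac{\alpha_{1-t}'}{1 - \alpha_{1-t}^2}\big(\alpha_{1-t}\, x - x_1\big) = -\frac{T'(1-t)}{2}\left[\frac{e^{-T(1-t)}\, x - e^{-\frac{1}{2}T(1-t)}\, x_1}{1 - e^{-T(1-t)}}\right]. \tag{19}$$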

Our construction of the conditional VF does in fact coincide with the vector field previously used in the deterministic probability flow (4, equation 13) when restricted to these conditional diffusion processes; see details in Appendix D. Nevertheless, combining the diffusion conditional VF with the Flow Matching objective offers an attractive training alternative—which we find to be more stable and robust in our experiments—to existing score matching approaches.

Another important observation is that, as these probability paths were previously derived as solutions of diffusion processes, they do not actually reach a true noise distribution in finite time. In practice, $p_0(x)$ is simply approximated by a suitable Gaussian distribution for sampling and likelihood evaluation. Instead, our construction provides full control over the probability path, and we can just directly set $\mu_t$ and $\sigma_t$, as we will do next.


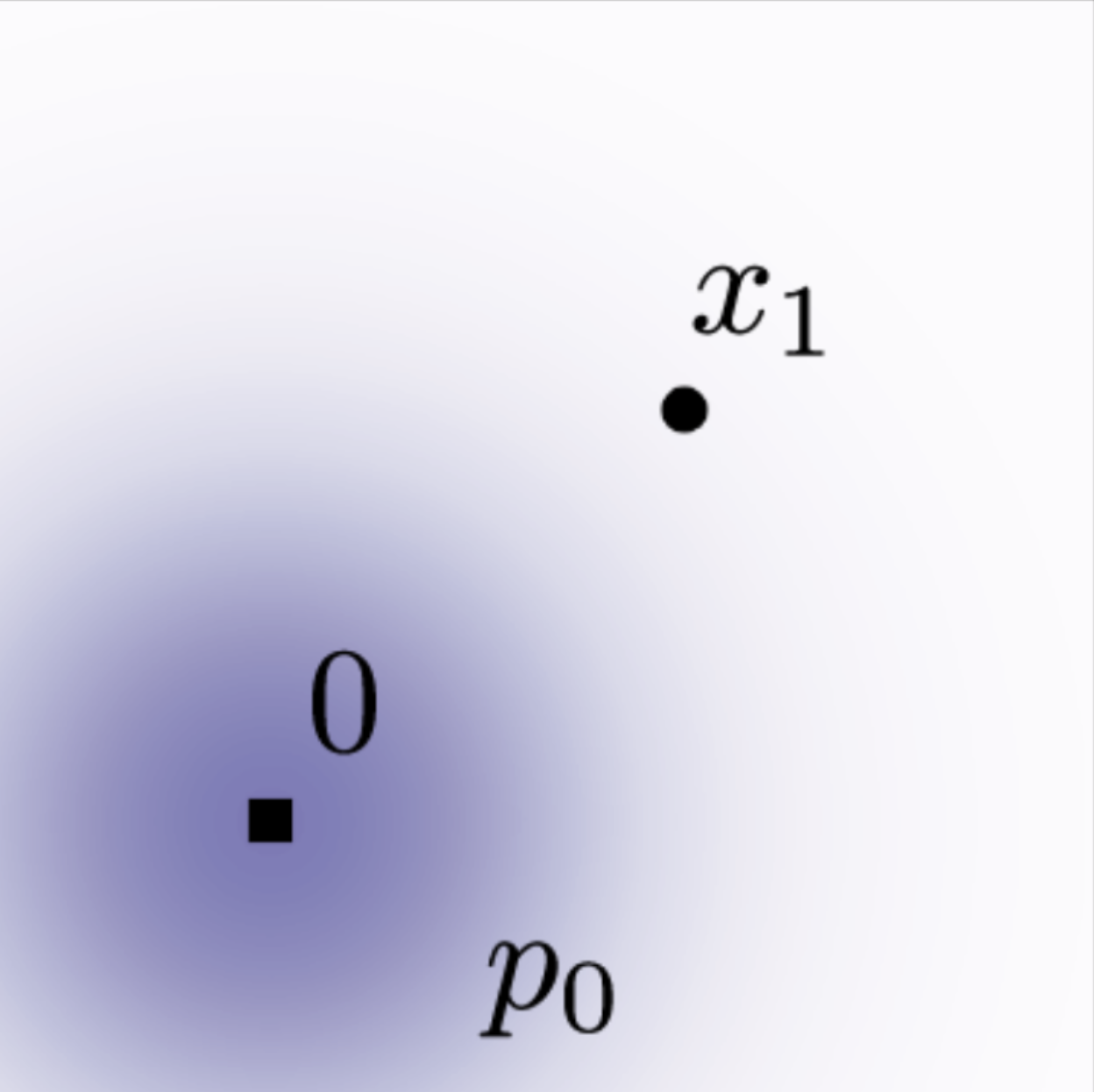

Example II: Optimal Transport conditional VFs.

An arguably more natural choice for conditional probability paths is to define the mean and the std to simply change linearly in time, i.e.,

$$\mu_t(x) = t\, x_1, \quad \text{and} \quad \sigma_t(x) = 1 - (1 - \sigma_{\min})\, t. \tag{20}$$

According to Theorem 3 this path is generated by the VF

$$u_t(x|x_1) = \frac{x_1 - (1 - \sigma_{\min})\, x}{1 - (1 - \sigma_{\min})\, t}, \tag{21}$$

which, in contrast to the diffusion conditional VF (equation 19), is defined for all $t \in [0,1]$. The conditional flow that corresponds to $u_t(x|x_1)$ is

$$\psi_t(x) = \big(1 - (1 - \sigma_{\min})\, t\big)\, x + t\, x_1, \tag{22}$$

and in this case, the CFM loss (see equations 9, 14) takes the form:

$$\mathcal{L}_{\text{CFM}}(\theta) = \mathbb{E}_{t,\,q(x_1),\,p(x_0)} \Big\| v_t(\psi_t(x_0)) - \Big(x_1 - (1 - \sigma_{\min})\, x_0\Big) \Big\|^2. \tag{23}$$

Allowing the mean and std to change linearly not only leads to simple and intuitive paths, but it is actually also optimal in the following sense. The conditional flow $\psi_t(x)$ is in fact the Optimal Transport (OT) displacement map between the two Gaussians $p_0(x|x_1)$ and $p_1(x|x_1)$. The OT interpolant, which is a probability path, is defined to be (see Definition 1.1 in 13):

$$p_t = [(1 - t)\,\mathrm{id} + t\,\psi]_* p_0, \tag{24}$$

where $\psi : \mathbb{R}^d \to \mathbb{R}^d$ is the OT map pushing $p_0$ to $p_1$, $\mathrm{id}$ denotes the identity map, i.e., $\mathrm{id}(x) = x$, and $(1 - t)\,\mathrm{id} + t\,\psi$ is called the OT displacement map. Example 1.7 in 13 shows that in our case of two Gaussians where the first is a standard one, the OT displacement map takes the form of equation 22.
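The OT instance of the CFM loss (equations 22 and 23) reduces to drawing $t$, $x_0$ and $x_1$, forming a point on the straight path, and regressing onto a target that does not depend on $t$. The sketch below is a plain-Python illustration of a single-sample loss evaluation, not the paper's training code. The value of `SIGMA_MIN`, the list-based vectors and all function names are assumptions, and `v` stands for any callable model.

```python
import random

SIGMA_MIN = 1e-4  # assumed value; the text only asks for "sufficiently small"

def ot_conditional_field(t, x, x1):
    """Equation 21 applied per coordinate: u_t(x | x1)."""
    denom = 1.0 - (1.0 - SIGMA_MIN) * t
    return [(b - (1.0 - SIGMA_MIN) * a) / denom for a, b in zip(x, x1)]

def sample_cfm_ot_pair(x1, rng):
    """Draw t ~ U[0,1), x0 ~ N(0, I); return t, psi_t(x0) and the regression target."""
    t = rng.random()
    x0 = [rng.gauss(0.0, 1.0) for _ in x1]
    scale = 1.0 - (1.0 - SIGMA_MIN) * t
    x_t = [scale * a + t * b for a, b in zip(x0, x1)]             # equation 22
    target = [b - (1.0 - SIGMA_MIN) * a for a, b in zip(x0, x1)]  # target in equation 23
    return t, x_t, target

def cfm_ot_loss(v, x1, rng):
    """Single-sample Monte Carlo estimate of equation 23 for a model v(t, x)."""
    t, x_t, target = sample_cfm_ot_pair(x1, rng)
    pred = v(t, x_t)
    return sum((p - u) ** 2 for p, u in zip(pred, target))

rng = random.Random(0)
x1 = [0.5, -1.0, 2.0]
# With a single data point, the conditional field itself attains (numerically) zero loss.
loss_at_optimum = cfm_ot_loss(lambda t, x: ot_conditional_field(t, x, x1), x1, rng)
print(loss_at_optimum)
```

In practice the expectation would be estimated over minibatches of data and optimized with a deep learning framework; the structure of the sampled triple $(t, \psi_t(x_0), x_1 - (1-\sigma_{\min})x_0)$ stays the same.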

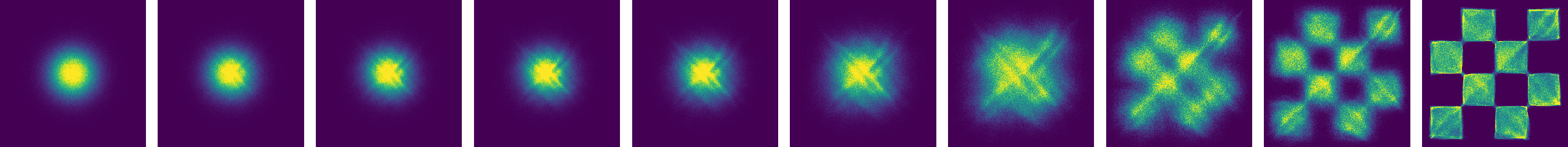

Figure 3: Diffusion and OT trajectories.

Intuitively, particles under the OT displacement map always move in straight line trajectories and with constant speed. Figure 3 depicts sampling paths for the diffusion and OT conditional VFs. Interestingly, we find that sampling trajectories from diffusion paths can “overshoot” the final sample, resulting in unnecessary backtracking, whilst the OT paths are guaranteed to stay straight.

Figure 2 compares the diffusion conditional score function (the regression target in typical diffusion methods), i.e., $\nabla \log p_t(x|x_1)$ with $p_t$ defined as in equation 18, with the OT conditional VF (equation 21). The start ($p_0$) and end ($p_1$) Gaussians are identical in both examples. An interesting observation is that the OT VF has a constant direction in time, which arguably leads to a simpler regression task. This property can also be verified directly from equation 21 as the VF can be written in the form $u_t(x|x_1) = g(t)\,h(x|x_1)$. Figure 8 in the Appendix shows a visualization of the Diffusion VF. Lastly, we note that although the conditional flow is optimal, this by no means implies that the marginal VF is an optimal transport solution. Nevertheless, we expect the marginal vector field to remain relatively simple.

5 Related Work

Continuous Normalizing Flows were introduced in 7 as a continuous-time version of Normalizing Flows (see e.g., 16 17 for an overview). Originally, CNFs were trained with the maximum likelihood objective, but this involves expensive ODE simulations for the forward and backward propagation, resulting in high time complexity due to the sequential nature of ODE simulations. Although some works demonstrated the capability of CNF generative models for image synthesis 10, scaling up to very high dimensional images is inherently difficult. A number of works attempted to regularize the ODE to be easier to solve, e.g., using augmentation 18, adding regularization terms 19 20 21 22 23, or stochastically sampling the integration interval 24. These works merely aim to regularize the ODE but do not change the fundamental training algorithm.

In order to speed up CNF training, some works have developed simulation-free CNF training frameworks by explicitly designing the target probability path and the dynamics. For instance, 11 considers a linear interpolation between the prior and the target density but involves integrals that are difficult to estimate in high dimensions, while 12 considers general probability paths similar to this work but suffers from biased gradients in the stochastic minibatch regime. In contrast, the Flow Matching framework allows simulation-free training with unbiased gradients and readily scales to very high dimensions.

Another approach to simulation-free training relies on the construction of a diffusion process to indirectly define the target probability path 15 3 25. 4 shows that diffusion models are trained using denoising score matching 9, a conditional objective that provides unbiased gradients with respect to the score matching objective. Conditional Flow Matching draws inspiration from this result, but generalizes to matching vector fields directly. Due to the ease of scalability, diffusion models have received increased attention, producing a variety of improvements such as loss-rescaling 8, adding classifier guidance along with architectural improvements 26, and learning the noise schedule 27 28. However, 27 and 28 only consider a restricted setting of Gaussian conditional paths defined by simple diffusion processes with a single parameter; in particular, they do not include our conditional OT path. In another line of work, 29 30 14 proposed finite time diffusion constructions via diffusion bridges theory, resolving the approximation error incurred by infinite time denoising constructions. While existing works make use of a connection between diffusion processes and continuous normalizing flows with the same probability path 31 4 8, our work allows us to generalize beyond the class of probability paths modeled by simple diffusion. With our work, it is possible to completely sidestep the diffusion process construction and reason directly with probability paths, while still retaining efficient training and log-likelihood evaluations. Lastly, concurrently to our work, 32 33 arrived at similar conditional objectives for simulation-free training of CNFs, while 34 derived an implicit objective when $u_t$ is assumed to be a gradient field.

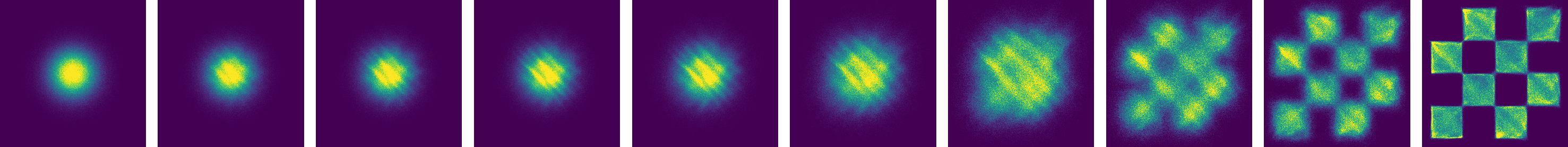

Figure 4: ( left ) Trajectories of CNFs trained with different objectives on 2D checkerboard data. The OT path introduces the checkerboard pattern much earlier, while FM results in more stable training. ( right ) FM with OT results in more efficient sampling, solved using the midpoint scheme.

6 Experiments

We explore the empirical benefits of using Flow Matching on the image datasets of CIFAR-10 35 and ImageNet at resolutions 32, 64, and 128 36 37. We also ablate the choice of diffusion path in Flow Matching, particularly between the standard variance preserving diffusion path and the optimal transport path. We discuss how sample generation is improved by directly parameterizing the generating vector field and using the Flow Matching objective. Lastly we show Flow Matching can also be used in the conditional generation setting. Unless otherwise specified, we evaluate likelihood and samples from the model using dopri5 38 at absolute and relative tolerances of 1e-5. Generated samples can be found in the Appendix, and all implementation details are in Appendix E.

| Model | CIFAR-10 NLL | CIFAR-10 FID | CIFAR-10 NFE | ImageNet 32×32 NLL | ImageNet 32×32 FID | ImageNet 32×32 NFE | ImageNet 64×64 NLL | ImageNet 64×64 FID | ImageNet 64×64 NFE |
|---|---|---|---|---|---|---|---|---|---|
| Ablations | | | | | | | | | |
| DDPM | 3.12 | 7.48 | 274 | 3.54 | 6.99 | 262 | 3.32 | 17.36 | 264 |
| Score Matching | 3.16 | 19.94 | 242 | 3.56 | 5.68 | 178 | 3.40 | 19.74 | 441 |
| ScoreFlow | 3.09 | 20.78 | 428 | 3.55 | 14.14 | 195 | 3.36 | 24.95 | 601 |
| Ours | | | | | | | | | |
| FM w/ Diffusion | 3.10 | 8.06 | 183 | 3.54 | 6.37 | 193 | 3.33 | 16.88 | 187 |
| FM w/ OT | 2.99 | 6.35 | 142 | 3.53 | 5.02 | 122 | 3.31 | 14.45 | 138 |

ImageNet 128×128

| Model | NLL | FID |
|---|---|---|
| MGAN 19 | – | 58.9 |
| PacGAN2 25 | – | 57.5 |
| Logo-GAN-AE 39 | – | 50.9 |
| Self-cond. GAN 27 | – | 41.7 |
| Uncond. BigGAN 27 | – | 25.3 |
| PGMGAN 3 | – | 21.7 |
| FM w/ OT | 2.90 | 20.9 |

Table 1: Likelihood (BPD), quality of generated samples (FID), and evaluation time (NFE) for the same model trained with different methods.

6.1 Density Modeling and Sample Quality on ImageNet

We start by comparing the same model architecture, i.e., the U-Net architecture from 26 with minimal changes, trained on CIFAR-10 and ImageNet 32/64 with different popular diffusion-based losses: DDPM from 3, Score Matching (SM) 4, and Score Flow (SF) 8; see Appendix E.1 for exact details. Table 1 (left) summarizes our results alongside these baselines, reporting negative log-likelihood (NLL) in units of bits per dimension (BPD), sample quality as measured by the Frechet Inception Distance (FID; 39), and the number of function evaluations (NFE) required for the adaptive solver to reach its prespecified numerical tolerance, averaged over 50k samples. All models are trained using the same architecture, hyperparameter values and number of training iterations, where baselines are allowed more iterations for better convergence. Note that these are unconditional models. On both CIFAR-10 and ImageNet, FM-OT consistently obtains best results across all our quantitative measures compared to competing methods. We observe a higher than usual FID on CIFAR-10 compared to previous works 3 4 8, which can possibly be explained by the fact that the architecture we used was not optimized for CIFAR-10.

Secondly, Table 1 (right) compares a model trained using Flow Matching with the OT path on ImageNet at resolution 128×128. Our FID is state-of-the-art with the exception of IC-GAN 40 which uses conditioning with a self-supervised ResNet50 model, and therefore is left out of this table. Figures 11, 12, 13 in the Appendix show non-curated samples from these models.

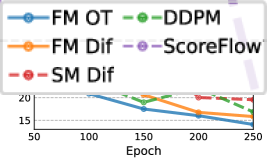

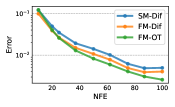

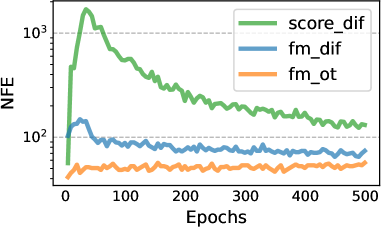

Figure 5: Image quality during training, ImageNet 64×64.

Faster training. While existing works train diffusion models with a very high number of iterations (e.g., 1.3m and 10m iterations are reported by Score Flow and VDM, respectively), we find that Flow Matching generally converges much faster. Figure 5 shows FID curves during training of Flow Matching and all baselines for ImageNet 64×64; FM-OT is able to lower the FID faster and to a greater extent than the alternatives. For ImageNet-128, 26 train for 4.36m iterations with batch size 256, while FM (with a 25% larger model) used 500k iterations with batch size 1.5k, i.e., 33% less image throughput; see Table 3 for exact details. Furthermore, the cost of sampling from a model can drastically change during training for score matching, whereas the sampling cost stays constant when training with Flow Matching (Figure 10 in Appendix).
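The "33% less image throughput" figure follows from multiplying iterations by batch size for each run. The short calculation below reproduces it, assuming "m" means million and "1.5k" means exactly 1500 images per batch (the source gives only the rounded figures); the variable names are hypothetical.

```python
# Images processed during training = iterations * batch size.
baseline_images = 4.36e6 * 256   # 4.36m iterations at batch size 256
fm_images = 500e3 * 1.5e3        # 500k iterations at batch size 1.5k (assumed 1500)

reduction = 1 - fm_images / baseline_images
print(f"{baseline_images:.4g} vs {fm_images:.4g} images, reduction {reduction:.1%}")
```

Under these assumptions the baseline processes about 1.12 billion images and the FM run 0.75 billion, a reduction of roughly one third.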

6.2 Sampling Efficiency


For sampling, we first draw a random noise sample $x_0 \sim \mathcal{N}(0, I)$ then compute $\phi_1(x_0)$ by solving equation 1 with the trained VF, $v_t$, on the interval $t \in [0,1]$ using an ODE solver. While diffusion models can also be sampled through an SDE formulation, this can be highly inefficient and many methods that propose fast samplers (e.g., 5 6) directly make use of the ODE perspective (see Appendix D). In part, this is due to ODE solvers being much more efficient, yielding lower error at similar computational costs 41, and the multitude of available ODE solver schemes. When compared to our ablation models, we find that models trained using Flow Matching with the OT path always result in the most efficient sampler, regardless of ODE solver, as demonstrated next.
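The sampling procedure above can be sketched with a fixed-step midpoint solver, the scheme named in the captions of Figures 4 and 7. This is a toy illustration and not the paper's sampler: the conditional OT field of equation 21 for a single one-dimensional target stands in for a trained network, and `SIGMA_MIN`, `X1` and the function names are assumptions. Each step costs two function evaluations, so `n_steps` steps use `2 * n_steps` NFE.

```python
SIGMA_MIN = 0.01  # assumed value for this toy example
X1 = 3.0          # single hypothetical data point

def toy_field(t, x):
    """Conditional OT field of equation 21, used here in place of a trained v_t."""
    return (X1 - (1.0 - SIGMA_MIN) * x) / (1.0 - (1.0 - SIGMA_MIN) * t)

def midpoint_sample(v, x0, n_steps):
    """Integrate d/dt x = v(t, x) from t = 0 to t = 1 with the explicit midpoint rule."""
    x, dt = x0, 1.0 / n_steps
    for k in range(n_steps):
        t = k * dt
        x_mid = x + 0.5 * dt * v(t, x)        # half step to the interval midpoint
        x = x + dt * v(t + 0.5 * dt, x_mid)   # full step using the midpoint slope
    return x

x0 = -0.5
sample = midpoint_sample(toy_field, x0, n_steps=4)  # 8 function evaluations
expected = SIGMA_MIN * x0 + X1                      # psi_1(x0) from equation 22
print(sample, expected)
```

Because the conditional OT trajectory is a straight line traversed at constant speed, a handful of steps already lands on $\psi_1(x_0)$ up to rounding in this toy case. A trained marginal field is not guaranteed to be straight, so the number of steps needed in practice is an empirical question.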

Sample paths. We first qualitatively visualize the difference in sampling paths between diffusion and OT. Figure 6 shows samples from ImageNet-64 models using identical random seeds, where we find that the OT path model starts generating images sooner than the diffusion path models, where noise dominates the image until the very last time point. We additionally depict the probability density paths in 2D generation of a checkerboard pattern, Figure 4 (left), noticing a similar trend.

Low-cost samples. We next switch to fixed-step solvers and compare low ($\leq 100$) NFE samples computed with the ImageNet-32 models from Table 1. In Figure 7 (left), we measure the per-pixel MSE of low NFE solutions against 1000 NFE solutions (we use 256 random noise seeds), and notice that the FM with OT model produces the lowest numerical error for a given computational cost, requiring roughly only 60% of the NFEs to reach the same error threshold as diffusion models. Secondly, Figure 7 (right) shows how FID changes as a result of the computational cost, where we find FM with OT is able to achieve decent FID even at very low NFE values, producing a better trade-off between sample quality and cost compared to ablated models. Figure 4 (right) shows low-cost sampling effects for the 2D checkerboard experiment.

Figure 7: Flow Matching, especially when using OT paths, allows us to use fewer evaluations for sampling while retaining similar numerical error (left) and sample quality (right). Results are shown for models trained on ImageNet 32×32, and numerical errors are for the midpoint scheme.

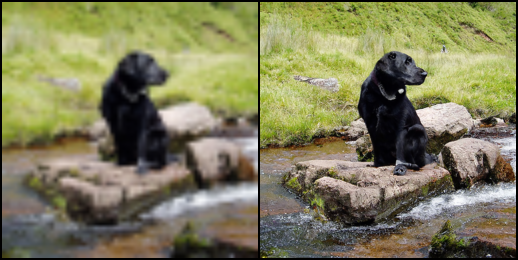

6.3 Conditional sampling from low-resolution images

| Model | FID | IS | PSNR | SSIM |
|---|---|---|---|---|
| Reference | 1.9 | 240.8 | – | – |
| Regression | 15.2 | 121.1 | 27.9 | 0.801 |
| SR3 42 | 5.2 | 180.1 | 26.4 | 0.762 |
| FM w/ OT | 3.4 | 200.8 | 24.7 | 0.747 |

Table 2: Image super-resolution on the ImageNet validation set.

Lastly, we experimented with Flow Matching for conditional image generation, in particular, upsampling images from 64×64 to 256×256. We follow the evaluation procedure in 42 and compute the FID of the upsampled validation images; baselines include reference (FID of original validation set), and regression. Results are in Table 2. Upsampled image samples are shown in Figures 14, 15 in the Appendix. FM-OT achieves similar PSNR and SSIM values to 42 while considerably improving on FID and IS, which as argued by 42 is a better indication of generation quality.

7 Conclusion

We introduced Flow Matching, a new simulation-free framework for training Continuous Normalizing Flow models, relying on conditional constructions to effortlessly scale to very high dimensions. Furthermore, the FM framework provides an alternative view on diffusion models, and suggests forsaking the stochastic/diffusion construction in favor of more directly specifying the probability path, allowing us to, e.g., construct paths that allow faster sampling and/or improve generation. We experimentally showed the ease of training and sampling when using the Flow Matching framework, and in the future, we expect FM to open the door to allowing a multitude of probability paths (e.g., non-isotropic Gaussians or more general kernels altogether).

References

Appendix A Theorem Proofs

See Theorem 1.

Proof.

To verify this, we check that $p_t$ and $u_t$ satisfy the continuity equation (equation 26):

$$\begin{aligned}
\frac{d}{dt}p_t(x) &= \int \Big(\frac{d}{dt}p_t(x|x_1)\Big) q(x_1)\,dx_1 = -\int \mathrm{div}\Big(u_t(x|x_1)\,p_t(x|x_1)\Big) q(x_1)\,dx_1 \\
&= -\mathrm{div}\Big(\int u_t(x|x_1)\,p_t(x|x_1)\,q(x_1)\,dx_1\Big) = -\mathrm{div}\Big(u_t(x)\,p_t(x)\Big),
\end{aligned}$$

where in the second equality we used the fact that $u_t(\cdot|x_1)$ generates $p_t(\cdot|x_1)$, and in the last equality we used equation 8. Furthermore, the first and third equalities are justified by assuming the integrands satisfy the regularity conditions of the Leibniz Rule (for exchanging integration and differentiation). ∎

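See Theorem 2.

Proof.

To ensure existence of all integrals and to allow the changing of integration order (by Fubini’s Theorem) in the following we assume that $q(x)$ and $p_t(x|x_1)$ are decreasing to zero at a sufficient speed as $\|x\| \to \infty$, and that $u_t, v_t, \nabla_\theta v_t$ are bounded.

First, using the standard bilinearity of the $2$-norm we have that

$$\begin{aligned}
\|v_t(x) - u_t(x)\|^2 &= \|v_t(x)\|^2 - 2\langle v_t(x), u_t(x)\rangle + \|u_t(x)\|^2, \\
\|v_t(x) - u_t(x|x_1)\|^2 &= \|v_t(x)\|^2 - 2\langle v_t(x), u_t(x|x_1)\rangle + \|u_t(x|x_1)\|^2.
\end{aligned}$$

Next, remember that $u_t$ is independent of $\theta$ and note that

$$\mathbb{E}_{p_t(x)}\|v_t(x)\|^2 = \int \|v_t(x)\|^2\, p_t(x)\,dx = \iint \|v_t(x)\|^2\, p_t(x|x_1)\, q(x_1)\,dx_1\,dx = \mathbb{E}_{q(x_1),\,p_t(x|x_1)}\|v_t(x)\|^2,$$

where in the second equality we use equation 6, and in the third equality we change the order of integration. Next,

$$\begin{aligned}
\mathbb{E}_{p_t(x)}\langle v_t(x), u_t(x)\rangle &= \int \Big\langle v_t(x), \frac{\int u_t(x|x_1)\,p_t(x|x_1)\,q(x_1)\,dx_1}{p_t(x)} \Big\rangle\, p_t(x)\,dx \\
&= \int \Big\langle v_t(x), \int u_t(x|x_1)\,p_t(x|x_1)\,q(x_1)\,dx_1 \Big\rangle\,dx \\
&= \iint \langle v_t(x), u_t(x|x_1)\rangle\, p_t(x|x_1)\, q(x_1)\,dx_1\,dx \\
&= \mathbb{E}_{q(x_1),\,p_t(x|x_1)}\langle v_t(x), u_t(x|x_1)\rangle,
\end{aligned}$$

where in the last equality we change again the order of integration. ∎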

See Theorem 3.

Proof.

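For notational simplicity let $w_t(x) = u_t(x|x_1)$. Now consider equation 1:

$$
\frac{d}{dt}\psi_t(x) = w_t(\psi_t(x)).
$$

Since $\psi_t$ is invertible (as $\sigma_t(x_1) > 0$) we let $x = \psi_t^{-1}(y)$ and get

$$
\psi_t'(\psi_t^{-1}(y)) = w_t(y), \tag{25}
$$

where we used the apostrophe notation for the derivative to emphasize that $\psi_t'$ is evaluated at $\psi_t^{-1}(y)$. Now, inverting $\psi_t(x)$ provides

$$
\psi_t^{-1}(y) = \frac{y - \mu_t(x_1)}{\sigma_t(x_1)}.
$$

Differentiating $\psi_t$ with respect to $t$ gives

$$
\psi_t'(x) = \sigma_t'(x_1)\,x + \mu_t'(x_1).
$$

Plugging these last two equations in equation 25 we get

$$
w_t(y) = \frac{\sigma_t'(x_1)}{\sigma_t(x_1)}\big(y - \mu_t(x_1)\big) + \mu_t'(x_1)
$$

as required. ∎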
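As a sanity check of the proof above, the following minimal sketch integrates the ODE with the conditional vector field $w_t(y) = \frac{\sigma_t'}{\sigma_t}(y-\mu_t) + \mu_t'$ and compares the result with the closed-form flow $\psi_t(x) = \sigma_t x + \mu_t$. The scalar setting, the particular choice $\mu_t = t x_1$, $\sigma_t = 1-(1-\sigma_{\min})t$, the constants `SIGMA_MIN` and `X1`, and the hand-written RK4 integrator are all illustrative assumptions, not the paper's implementation.

```python
def rk4(f, x, t0, t1, n):
    """Integrate dx/dt = f(t, x) from t0 to t1 with n classical RK4 steps."""
    h = (t1 - t0) / n
    t = t0
    for _ in range(n):
        k1 = f(t, x)
        k2 = f(t + h / 2, x + h * k1 / 2)
        k3 = f(t + h / 2, x + h * k2 / 2)
        k4 = f(t + h, x + h * k3)
        x += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        t += h
    return x

SIGMA_MIN = 0.1  # hypothetical value, chosen only for this example
X1 = 2.0         # hypothetical conditioning data point (scalar)

def mu(t): return t * X1                            # mean of the Gaussian path
def dmu(t): return X1                               # its time derivative
def sigma(t): return 1.0 - (1.0 - SIGMA_MIN) * t    # std of the Gaussian path
def dsigma(t): return -(1.0 - SIGMA_MIN)            # its time derivative

def u(t, y):
    """Conditional vector field sigma'/sigma * (y - mu) + mu'."""
    return dsigma(t) / sigma(t) * (y - mu(t)) + dmu(t)

def psi(t, x):
    """Closed-form conditional flow psi_t(x) = sigma_t * x + mu_t."""
    return sigma(t) * x + mu(t)

x0 = -0.7
y_ode = rk4(u, x0, 0.0, 1.0, 200)   # numerical solution of the ODE at t = 1
y_exact = psi(1.0, x0)              # closed-form flow at t = 1
```

If the derivation holds, `y_ode` and `y_exact` should agree up to the integration error.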

Appendix B The continuity equation

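One method of testing if a vector field $v_t$ generates a probability path $p_t$ is the continuity equation 43. It is a Partial Differential Equation (PDE) providing a necessary and sufficient condition for ensuring that a vector field $v_t$ generates $p_t$,

$$
\frac{d}{dt}p_t(x) + \mathrm{div}\big(p_t(x)\,v_t(x)\big) = 0, \tag{26}
$$

where the divergence operator, $\mathrm{div}$, is defined with respect to the spatial variable $x = (x^1,\dots,x^d)$, i.e., $\mathrm{div} = \sum_{i=1}^{d}\frac{\partial}{\partial x^i}$.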
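To make the condition concrete, here is a small finite-difference check of the continuity equation in one dimension. It is only a sketch: the Gaussian path $p_t = \mathcal{N}(\mu_t, \sigma_t^2)$ with $\mu_t = 2t$, $\sigma_t = 1 - 0.9t$, and the vector field $v_t(x) = \frac{\sigma_t'}{\sigma_t}(x-\mu_t) + \mu_t'$ are assumptions chosen for illustration.

```python
import math

def gauss(x, m, s):
    """Density of N(m, s^2) at x."""
    return math.exp(-0.5 * ((x - m) / s) ** 2) / (s * math.sqrt(2 * math.pi))

def mu(t): return 2.0 * t          # assumed mean schedule
def sigma(t): return 1.0 - 0.9 * t  # assumed std schedule

def p(t, x):
    return gauss(x, mu(t), sigma(t))

def v(t, x):
    # sigma'/sigma * (x - mu) + mu' with sigma' = -0.9 and mu' = 2.0
    return (-0.9) / sigma(t) * (x - mu(t)) + 2.0

def residual(t, x, h=1e-4):
    """Central-difference estimate of d/dt p_t(x) + d/dx (p_t(x) v_t(x))."""
    dp_dt = (p(t + h, x) - p(t - h, x)) / (2 * h)
    flux = lambda z: p(t, z) * v(t, z)
    dflux_dx = (flux(x + h) - flux(x - h)) / (2 * h)
    return dp_dt + dflux_dx

# Largest residual over a few (t, x) pairs; it should be close to zero.
worst = max(abs(residual(t, x)) for t in (0.2, 0.5, 0.8) for x in (-1.0, 0.3, 1.5))
```

In $d$ dimensions the spatial derivative becomes the sum of partial derivatives that defines $\mathrm{div}$.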

Appendix C Computing probabilities of the CNF model

We are given an arbitrary data point $x_1 \in \mathbb{R}^d$ and need to compute the model probability at that point, i.e., $p_1(x_1)$. Below we recap how this can be done, covering the basic relevant ODEs, the scaling of the divergence computation, data transformations (e.g., centering of data), and the Bits-Per-Dimension computation.

ODE for computing $p_1(x_1)$

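The continuity equation together with equation 1 leads to the instantaneous change of variable 7 12:

$$
\frac{d}{dt}\log p_t(\phi_t(x)) = -\mathrm{div}\big(v_t(\phi_t(x))\big).
$$

Integrating over $t\in[0,1]$ gives:

$$
\log p_1(\phi_1(x)) - \log p_0(\phi_0(x)) = -\int_0^1 \mathrm{div}\big(v_t(\phi_t(x))\big)\,dt. \tag{27}
$$

Therefore, the log probability can be computed together with the flow trajectory by solving the ODE:

$$
\frac{d}{dt}\begin{bmatrix}\phi_t(x)\\ f(t)\end{bmatrix} = \begin{bmatrix} v_t(\phi_t(x)) \\ -\mathrm{div}\big(v_t(\phi_t(x))\big)\end{bmatrix}. \tag{28}
$$

Given initial conditions

$$
\begin{bmatrix}\phi_0(x)\\ f(0)\end{bmatrix} = \begin{bmatrix} x_0 \\ c\end{bmatrix}, \tag{29}
$$

the solution $[\phi_t(x), f(t)]^T$ is uniquely defined (up to some mild conditions on the VF $v_t$). Denote $x_1 = \phi_1(x)$, and according to equation 27,

$$
f(1) = c + \log p_1(x_1) - \log p_0(x_0). \tag{30}
$$

Now, we are given an arbitrary $x_1$ and want to compute $p_1(x_1)$. To this end, we will need to solve equation 28 in reverse. That is,

$$
\frac{d}{ds}\begin{bmatrix}\phi_{1-s}(x)\\ f(1-s)\end{bmatrix} = \begin{bmatrix} -v_{1-s}(\phi_{1-s}(x)) \\ \mathrm{div}\big(v_{1-s}(\phi_{1-s}(x))\big)\end{bmatrix}, \tag{31}
$$

and we solve this equation for $s\in[0,1]$ with the initial conditions at $s=0$:

$$
\begin{bmatrix}\phi_1(x)\\ f(1)\end{bmatrix} = \begin{bmatrix} x_1 \\ 0\end{bmatrix}. \tag{32}
$$

From uniqueness of ODEs, the solution will be identical to the solution of equation 28 with initial conditions in equation 29 where $c = \log p_0(x_0) - \log p_1(x_1)$. This can be seen from equation 30 and setting $f(1)=0$. Therefore we get that

$$
f(0) = \log p_0(x_0) - \log p_1(x_1)
$$

and consequently

$$
\log p_1(x_1) = \log p_0(x_0) - f(0). \tag{33}
$$

To summarize, to compute $p_1(x_1)$ we first solve the ODE in equation 31 with initial conditions in equation 32, and then compute equation 33.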
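The recipe above can be sketched on a toy problem where the answer is known in closed form. The example assumes a one-dimensional linear vector field $v_t(x) = A x$ (so the flow is $x \mapsto e^{At}x$ and $p_1 = \mathcal{N}(0, e^{2A})$ when $p_0 = \mathcal{N}(0,1)$); the constant `A`, the RK4 integrator and all function names are illustrative choices, not the authors' code, which uses an adaptive solver.

```python
import math

A = 0.8  # hypothetical coefficient of the toy vector field v_t(x) = A * x

def v(t, x): return A * x
def div_v(t, x): return A  # divergence (here simply d/dx) of A * x

def log_p0(x):
    """Log-density of the standard normal prior."""
    return -0.5 * x * x - 0.5 * math.log(2 * math.pi)

def reverse_rhs(s, state):
    """Right-hand side of the reversed ODE: state = (phi_{1-s}, f(1-s))."""
    x, _ = state
    t = 1.0 - s
    return (-v(t, x), div_v(t, x))

def rk4_system(rhs, state, n):
    """Integrate the system from s = 0 to s = 1 with n RK4 steps."""
    h = 1.0 / n
    s = 0.0
    for _ in range(n):
        k1 = rhs(s, state)
        k2 = rhs(s + h / 2, tuple(y + h / 2 * k for y, k in zip(state, k1)))
        k3 = rhs(s + h / 2, tuple(y + h / 2 * k for y, k in zip(state, k2)))
        k4 = rhs(s + h, tuple(y + h * k for y, k in zip(state, k3)))
        state = tuple(y + h / 6 * (a + 2 * b + 2 * c + d)
                      for y, a, b, c, d in zip(state, k1, k2, k3, k4))
        s += h
    return state

def log_p1(x1, n=100):
    # Start at (x1, 0), integrate backwards, then use log p1 = log p0(x0) - f(0).
    x0, f0 = rk4_system(reverse_rhs, (x1, 0.0), n)
    return log_p0(x0) - f0

def log_p1_exact(x1):
    var = math.exp(2 * A)  # variance of the pushed-forward Gaussian
    return -0.5 * x1 * x1 / var - 0.5 * math.log(2 * math.pi * var)
```

For example, `log_p1(1.3)` should match `log_p1_exact(1.3)` up to the integration error.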

Unbiased estimator to $p_1(x_1)$

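Solving equation 31 requires computation of $\mathrm{div}$ of VFs in $\mathbb{R}^d$, which is costly. 10 suggest replacing the divergence by the (unbiased) Hutchinson trace estimator,

$$
\frac{d}{ds}\begin{bmatrix}\phi_{1-s}(x)\\ \tilde f(1-s)\end{bmatrix} = \begin{bmatrix} -v_{1-s}(\phi_{1-s}(x)) \\ z^T D v_{1-s}(\phi_{1-s}(x))\, z\end{bmatrix}, \tag{34}
$$

where $z\in\mathbb{R}^d$ is a sample from a random variable such that $\mathbb{E}\, z z^T = I$. Solving the ODE in equation 34 exactly (in practice, with a small controlled error) with initial conditions in equation 32 leads to

$$
\begin{aligned}
\mathbb{E}_z\big[\log p_0(x_0) - \tilde f(0)\big] &= \log p_0(x_0) - \mathbb{E}_z\big[\tilde f(0) - \tilde f(1)\big] \\
&= \log p_0(x_0) - \mathbb{E}_z\Big[\int_0^1 z^T D v_{1-s}(\phi_{1-s}(x))\, z\, ds\Big] \\
&= \log p_0(x_0) - \int_0^1 \mathbb{E}_z\big[z^T D v_{1-s}(\phi_{1-s}(x))\, z\big]\, ds \\
&= \log p_0(x_0) - \int_0^1 \mathrm{div}\big(v_{1-s}(\phi_{1-s}(x))\big)\, ds \\
&= \log p_0(x_0) - \big(f(0) - f(1)\big) \\
&= \log p_0(x_0) - \big(\log p_0(x_0) - \log p_1(x_1)\big) \\
&= \log p_1(x_1),
\end{aligned}
$$

where in the third equality we switched the order of integration assuming the sufficient conditions of Fubini’s theorem hold, and in the second-to-last equality we used equation 30. Therefore the random variable

$$
\log p_0(x_0) - \tilde f(0) \tag{35}
$$

is an unbiased estimator for $\log p_1(x_1)$. To summarize, for a scalable unbiased estimation of $p_1(x_1)$ we first solve the ODE in equation 34 with initial conditions in equation 32, and then output equation 35.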
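The core of this estimator is the identity $\mathbb{E}_z[z^T J z] = \mathrm{tr}(J)$ for any $z$ with $\mathbb{E}\,zz^T = I$. The sketch below illustrates it with Rademacher samples on a small explicit matrix. The matrix `J`, the sample count and the helper names are made up for the example; in a real CNF the product $Jz$ would come from automatic differentiation rather than from a stored Jacobian.

```python
import random

def hutchinson_trace(matvec, dim, num_samples, rng):
    """Monte-Carlo estimate of trace(J) using only products z -> J z."""
    total = 0.0
    for _ in range(num_samples):
        # Rademacher vector: entries are +1 or -1, so E[z z^T] = I.
        z = [rng.choice((-1.0, 1.0)) for _ in range(dim)]
        jz = matvec(z)
        total += sum(zi * ji for zi, ji in zip(z, jz))  # z^T J z
    return total / num_samples

# Hypothetical 3x3 "Jacobian"; its trace plays the role of the divergence.
J = [[1.0, 2.0, 0.0],
     [0.5, -3.0, 1.0],
     [4.0, 0.0, 2.5]]

def matvec(z):
    return [sum(row[j] * z[j] for j in range(len(z))) for row in J]

exact = sum(J[i][i] for i in range(3))                      # true trace
est = hutchinson_trace(matvec, 3, 20000, random.Random(0))  # stochastic estimate
```

A single sample is already unbiased; averaging more samples only reduces the variance.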

Transformed data

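Often, before training our generative model we transform the data, e.g., we scale and/or translate the data. Such a transformation is denoted by $\varphi^{-1}:\mathbb{R}^d\to\mathbb{R}^d$ and our generative model becomes a composition

$$
\psi(x) = \varphi\circ\phi(x),
$$

where $\phi:\mathbb{R}^d\to\mathbb{R}^d$ is the model we train. Given a prior probability $p_0$ we have that the push forward of this probability under $\psi$ (equation 3 and equation 4) takes the form

$$
\begin{aligned}
p_1(x) = \psi_* p_0(x) &= p_0\big(\phi^{-1}(\varphi^{-1}(x))\big)\,\det\big[D\phi^{-1}(\varphi^{-1}(x))\big]\,\det\big[D\varphi^{-1}(x)\big] \\
&= \big(\phi_* p_0(\varphi^{-1}(x))\big)\,\det\big[D\varphi^{-1}(x)\big]
\end{aligned}
$$

and therefore

$$
\log \psi_* p_0(x) = \log \phi_* p_0(\varphi^{-1}(x)) + \log\det\big[D\varphi^{-1}(x)\big].
$$

For images $d = H\times W\times 3$ and we consider a transform $\varphi$ that maps each pixel value from $[-1,1]$ to $[0,256]$. Therefore,

$$
\varphi(y) = 2^{7}(y+1)
$$

and

$$
\varphi^{-1}(x) = 2^{-7}x - 1.
$$

For this case we have

$$
\log \psi_* p_0(x) = \log \phi_* p_0(\varphi^{-1}(x)) - 7d\log 2. \tag{36}
$$

Bits-Per-Dimension (BPD) computation

BPD is defined by

$$
\mathrm{BPD} = \mathbb{E}_{x_1}\Big[-\frac{\log_2 p_1(x_1)}{d}\Big] = \mathbb{E}_{x_1}\Big[-\frac{\log p_1(x_1)}{d\log 2}\Big].
$$

Following equation 36 we get

$$
\mathrm{BPD} = -\mathbb{E}_{x_1}\Big[\frac{\log \phi_* p_0(\varphi^{-1}(x_1))}{d\log 2}\Big] + 7,
$$

and $\log \phi_* p_0(\varphi^{-1}(x_1))$ is approximated using the unbiased estimator in equation 35 over the transformed data $\varphi^{-1}(x_1)$. Averaging the unbiased estimator on a large test set provides a good approximation to the test set BPD.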
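A minimal sketch of the conversion from a log-likelihood on transformed data to BPD follows. It assumes that the data transform rescales every dimension by $2^{-7}$ (pixel values in $[0,256]$ mapped to $[-1,1]$), which contributes a constant offset of 7 bits per dimension; the function name and the `shift_bits` parameter are hypothetical.

```python
import math

def bits_per_dim(log_p_transformed, d, shift_bits=7):
    """Convert a natural-log likelihood of the transformed data point
    into bits per dimension in the original pixel space.

    shift_bits accounts for the assumed per-dimension rescaling by 2**-shift_bits.
    """
    return -log_p_transformed / (d * math.log(2)) + shift_bits

# Sanity check: a uniform density on [-1, 1]^d has log-density -d * log(2).
# In pixel space this is uniform over [0, 256]^d, i.e. 8 bits per dimension.
d = 3 * 32 * 32
uniform_bpd = bits_per_dim(-d * math.log(2), d)
```

In practice `log_p_transformed` would be the (estimated) model log-density at the transformed test point, averaged over the test set.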

Appendix D Diffusion conditional vector fields

We derive the vector field governing the Probability Flow ODE (equation 13 in 4) for the VE and VP diffusion paths (equation 18) and note that it coincides with the conditional vector fields we derive using Theorem 3, namely the vector fields defined in equations 16 and 19.

We start with a short primer on how to find a conditional vector field for the probability path described by the Fokker-Planck equation, then instantiate it for the VE and VP probability paths.

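Since in the diffusion literature the diffusion process runs from data at time $t=0$ to noise at time $t=1$, we will need the following lemma to translate the diffusion VFs to our convention, where $t=0$ corresponds to noise and $t=1$ corresponds to data:

Lemma 1.

Consider a flow defined by a vector field $u_t(x)$ generating probability density path $p_t(x)$. Then, the vector field $\tilde u_t(x) = -u_{1-t}(x)$ generates the path $\tilde p_t(x) = p_{1-t}(x)$ when initiated from $\tilde p_0(x) = p_1(x)$.

Proof.

We use the continuity equation (equation 26):

$$
\frac{d}{dt}\tilde p_t(x) = \frac{d}{dt}p_{1-t}(x) = -p'_{1-t}(x) = \mathrm{div}\big(p_{1-t}(x)\,u_{1-t}(x)\big) = -\mathrm{div}\big(\tilde p_t(x)\,\tilde u_t(x)\big)
$$

and therefore $\tilde u_t$ generates $\tilde p_t$. ∎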

Conditional VFs for Fokker-Planck probability paths

Consider a Stochastic Differential Equation (SDE) of the standard form

$$
dy = f_t\,dt + g_t\,dw
$$

with time parameter $t$, drift $f_t$, diffusion coefficient $g_t$, and $dw$ the Wiener process. The solution $y_t$ to the SDE is a stochastic process, i.e., a continuous time-dependent random variable, the probability density of which, $p_t(y_t)$, is characterized by the Fokker-Planck equation:

$$
\frac{dp_t}{dt} = -\mathrm{div}(f_t\,p_t) + \frac{g_t^2}{2}\Delta p_t,
$$

where $\Delta$ represents the Laplace operator (in $y$), namely $\mathrm{div}\nabla$, where $\nabla$ is the gradient operator (also in $y$). Rewriting this equation in the form of the continuity equation can be done as follows 44:

$$
\frac{dp_t}{dt} = -\mathrm{div}\Big(f_t\,p_t - \frac{g_t^2}{2}\frac{\nabla p_t}{p_t}\,p_t\Big) = -\mathrm{div}\Big(\big(f_t - \frac{g_t^2}{2}\nabla\log p_t\big)\,p_t\Big) = -\mathrm{div}\big(w_t\,p_t\big),
$$

where the vector field

$$
w_t = f_t - \frac{g_t^2}{2}\nabla\log p_t \tag{40}
$$

satisfies the continuity equation with the probability path $p_t$, and therefore generates $p_t$.

Variance Exploding (VE) path

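The SDE for the VE path is

$$
dy = \sqrt{\frac{d}{dt}\sigma_t^2}\;dw,
$$

where $\sigma_0 = 0$ and $\sigma_t$ increases to infinity as $t\to 1$. The SDE moves from data, $y_0$, at $t=0$ to noise, $y_1$, at $t=1$ with the probability path

$$
p_t(y|y_0) = \mathcal{N}(y\,|\,y_0, \sigma_t^2 I).
$$

The conditional VF according to equation 40 is:

$$
w_t(y|y_0) = \frac{\sigma_t'}{\sigma_t}(y - y_0).
$$

Using Lemma 1 we get that the probability path

$$
\tilde p_t(y|y_0) = \mathcal{N}(y\,|\,y_0, \sigma_{1-t}^2 I)
$$

is generated by

$$
\tilde w_t(y|y_0) = -\frac{\sigma_{1-t}'}{\sigma_{1-t}}(y - y_0),
$$

which coincides with equation 17.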

Variance Preserving (VP) path

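The SDE for the VP path is

$$
dy = -\frac{T'(t)}{2}\,y\,dt + \sqrt{T'(t)}\,dw,
$$

where $T(t) = \int_0^t \beta(s)\,ds$, $t\in[0,1]$. The SDE coefficients are therefore

$$
f_s(y) = -\frac{T'(s)}{2}\,y, \qquad g_s = \sqrt{T'(s)},
$$

and

$$
p_t(y|y_0) = \mathcal{N}\big(y\,|\,e^{-\frac{1}{2}T(t)}y_0,\ (1 - e^{-T(t)})I\big).
$$

Plugging these choices in equation 40 we get the conditional VF

$$
w_t(y|y_0) = \frac{T'(t)}{2}\Big(\frac{y - e^{-\frac{1}{2}T(t)}y_0}{1 - e^{-T(t)}} - y\Big).
$$

Using Lemma 1 to reverse the time we get the conditional VF for the reverse probability path:

$$
\tilde w_t(y|y_0) = -\frac{T'(1-t)}{2}\Big(\frac{y - e^{-\frac{1}{2}T(1-t)}y_0}{1 - e^{-T(1-t)}} - y\Big) = -\frac{T'(1-t)}{2}\Big[\frac{e^{-T(1-t)}y - e^{-\frac{1}{2}T(1-t)}y_0}{1 - e^{-T(1-t)}}\Big],
$$

which coincides with equation 19.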
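The claimed coincidence can be checked numerically. The sketch below compares the time-reversed VP field obtained from the Fokker-Planck route with the Gaussian-path formula $\frac{\sigma_t'}{\sigma_t}(y-\mu_t)+\mu_t'$ from Theorem 3, using $\mu_t = \alpha_{1-t}y_0$, $\sigma_t = \sqrt{1-\alpha_{1-t}^2}$ and $\alpha_s = e^{-T(s)/2}$. The linear schedule $\beta(s) = \beta_{\min} + s(\beta_{\max}-\beta_{\min})$ and the values `BETA_MIN`, `BETA_MAX` are assumptions made for this example, and derivatives are taken by finite differences.

```python
import math

BETA_MIN, BETA_MAX = 0.1, 20.0  # assumed schedule endpoints, for illustration

def T(s): return s * BETA_MIN + 0.5 * s * s * (BETA_MAX - BETA_MIN)
def dT(s): return BETA_MIN + s * (BETA_MAX - BETA_MIN)
def alpha(s): return math.exp(-0.5 * T(s))

def vp_field(t, y, y0):
    """Time-reversed VP conditional vector field (Fokker-Planck route)."""
    s = 1.0 - t
    return -0.5 * dT(s) * ((y - alpha(s) * y0) / (1.0 - math.exp(-T(s))) - y)

def gaussian_path_field(t, y, y0, h=1e-6):
    """sigma'/sigma * (y - mu) + mu' for the VP mean and std schedules."""
    mu = lambda r: alpha(1.0 - r) * y0
    sig = lambda r: math.sqrt(1.0 - alpha(1.0 - r) ** 2)
    dmu = (mu(t + h) - mu(t - h)) / (2 * h)      # central difference for mu'
    dsig = (sig(t + h) - sig(t - h)) / (2 * h)   # central difference for sigma'
    return dsig / sig(t) * (y - mu(t)) + dmu

# Largest disagreement over a few test points (away from t = 1, where sigma -> 0).
gap = max(abs(vp_field(t, y, 1.2) - gaussian_path_field(t, y, 1.2))
          for t in (0.1, 0.3, 0.6) for y in (-0.5, 0.4, 2.0))
```

A small `gap` indicates that both constructions describe the same conditional vector field.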


Figure 9: Trajectories of CNFs trained with ScoreFlow 45 and DDPM 18 losses on 2D checkerboard data, using the same learning rate and other hyperparameters as Figure 4.

Appendix E Implementation details

For the 2D example we used an MLP with 5 layers of 512 neurons each, while for images we used the UNet architecture from 26. For images, we center crop images and resize to the appropriate dimension, whereas for the 32×32 and 64×64 resolutions we use the same pre-processing as 36. The three methods (FM-OT, FM-Diffusion, and SM-Diffusion) are always trained on the same architecture, with the same hyper-parameters, and for the same number of epochs.

E.1 Diffusion baselines

Losses.

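We consider three options as diffusion baselines that correspond to the most popular diffusion loss parametrizations 25 8 3 28. We will assume the general Gaussian path form of equation 10, i.e.,

$$
p_t(x|x_1) = \mathcal{N}\big(x\,|\,\mu_t(x_1), \sigma_t^2(x_1)I\big).
$$

Score Matching loss is

$$
\begin{aligned}
\mathcal{L}_{\mathrm{SM}}(\theta) &= \mathbb{E}_{t,q(x_1),p_t(x|x_1)}\,\lambda(t)\,\Big\|s_t(x) - \nabla\log p_t(x|x_1)\Big\|^2 \\
&= \mathbb{E}_{t,q(x_1),p_0(x_0)}\,\lambda(t)\,\Big\|s_t(\sigma_t(x_1)x_0 + \mu_t(x_1)) + \frac{x_0}{\sigma_t(x_1)}\Big\|^2.
\end{aligned}
$$

Taking $\lambda(t) = \sigma_t^2(x_1)$ corresponds to the original Score Matching (SM) loss from 25, while considering $\lambda(t) = \beta(1-t)$ ($\beta$ is defined below) corresponds to the Score Flow (SF) loss motivated by an NLL upper bound 8; $s_t$ is the learnable score function. DDPM (Noise Matching) loss from 3 (equation 14) is

$$
\begin{aligned}
\mathcal{L}_{\mathrm{NM}}(\theta) &= \mathbb{E}_{t,q(x_1),p_t(x|x_1)}\Big\|\epsilon_t(x) - \frac{x - \mu_t(x_1)}{\sigma_t(x_1)}\Big\|^2 \\
&= \mathbb{E}_{t,q(x_1),p_0(x_0)}\Big\|\epsilon_t(\sigma_t(x_1)x_0 + \mu_t(x_1)) - x_0\Big\|^2,
\end{aligned}
$$

where $p_0(x) = \mathcal{N}(x\,|\,0, I)$ is the standard Gaussian, and $\epsilon_t$ is the learnable noise function.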

Diffusion path.

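For the diffusion path we use the standard VP diffusion (equation 19), namely,

$$
p_t(x|x_1) = \mathcal{N}\big(x\,|\,\alpha_{1-t}x_1,\ (1-\alpha_{1-t}^2)I\big), \quad\text{where } \alpha_t = e^{-\frac{1}{2}T(t)},\ T(t) = \int_0^t\beta(s)\,ds,
$$

with, as suggested in 4, $\beta(s) = \beta_{\min} + s(\beta_{\max} - \beta_{\min})$ and consequently

$$
T(s) = \int_0^s \beta(r)\,dr = s\,\beta_{\min} + \frac{1}{2}s^2(\beta_{\max} - \beta_{\min}),
$$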

where , and time is sampled in , for training and likelihood and for sampling.

Sampling.

Score matching samples are produced by solving the ODE (equation 1) with the vector field

DDPM samples are computed with equation 46 after setting , where .

E.2 Training & evaluation details

| | CIFAR10 | ImageNet-32 | ImageNet-64 | ImageNet-128 |
|---|---|---|---|---|
| Channels | 256 | 256 | 192 | 256 |
| Depth | 2 | 3 | 3 | 3 |
| Channels multiple | 1,2,2,2 | 1,2,2,2 | 1,2,3,4 | 1,1,2,3,4 |
| Heads | 4 | 4 | 4 | 4 |
| Heads Channels | 64 | 64 | 64 | 64 |
| Attention resolution | 16 | 16,8 | 32,16,8 | 32,16,8 |
| Dropout | 0.0 | 0.0 | 0.0 | 0.0 |
| Effective Batch size | 256 | 1024 | 2048 | 1536 |
| GPUs | 2 | 4 | 16 | 32 |
| Epochs | 1000 | 200 | 250 | 571 |
| Iterations | 391k | 250k | 157k | 500k |
| Learning Rate | 5e-4 | 1e-4 | 1e-4 | 1e-4 |
| Learning Rate Scheduler | Polynomial Decay | Polynomial Decay | Constant | Polynomial Decay |
| Warmup Steps | 45k | 20k | - | 20k |

Table 3: Hyper-parameters used for training each model

We report the hyper-parameters used in Table 3. We use full 32-bit precision for training CIFAR10 and ImageNet-32 and 16-bit mixed precision for training ImageNet-64/128/256. All models are trained using the Adam optimizer with the following parameters: , , weight decay = 0.0, and . All methods (i.e., FM-OT, FM-Diffusion, SM-Diffusion) were trained using identical architectures, with the same parameters, for the same number of epochs (see Table 3 for details). We use either a constant learning rate schedule or a polynomial decay schedule (see Table 3). The polynomial decay learning rate schedule includes a warm-up phase for a specified number of training steps. In the warm-up phase, the learning rate is linearly increased from to the peak learning rate (specified in Table 3). Once the peak learning rate is reached, the learning rate decays linearly down to until the final training step.
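The warm-up and decay schedule described above can be sketched as follows. This is an illustrative reading of the text, not the authors' code: the function name is hypothetical, and the start/end value `floor_lr` is left as an explicit argument because its value is not recoverable from the text (the `0.0` used in the usage lines is only a placeholder).

```python
def learning_rate(step, total_steps, warmup_steps, peak_lr, floor_lr):
    """Linear warm-up from floor_lr to peak_lr, then linear decay back to
    floor_lr at the final training step."""
    if warmup_steps > 0 and step < warmup_steps:
        frac = step / warmup_steps
        return floor_lr + frac * (peak_lr - floor_lr)
    decay_steps = max(total_steps - warmup_steps, 1)
    frac = min((step - warmup_steps) / decay_steps, 1.0)
    return peak_lr + frac * (floor_lr - peak_lr)

# Usage with the ImageNet-32 column of Table 3 (250k iterations, 20k warm-up
# steps, peak learning rate 1e-4); floor_lr = 0.0 is a placeholder assumption.
lr_mid_warmup = learning_rate(10_000, 250_000, 20_000, 1e-4, 0.0)
lr_peak = learning_rate(20_000, 250_000, 20_000, 1e-4, 0.0)
lr_mid_decay = learning_rate(135_000, 250_000, 20_000, 1e-4, 0.0)
lr_final = learning_rate(250_000, 250_000, 20_000, 1e-4, 0.0)
```

The constant schedule used for ImageNet-64 has no warm-up or decay and simply returns the peak learning rate at every step.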

When reporting negative log-likelihood, we dequantize using the standard uniform dequantization. We report an importance-weighted estimate using

$$
\mathrm{BPD}(K) = -\frac{1}{D}\log_2\frac{1}{K}\sum_{k=1}^{K}p_t(x + u_k), \quad\text{where } u_k\sim[U(0,1)]^D,
$$

where $x$ is in {0, …, 255} and $p_t$ is solved at $t=1$ with the adaptive step size solver dopri5 with atol=rtol=1e-5 using the torchdiffeq 45 library. Estimated values for different values of $K$ are in Table 4.
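An importance-weighted estimate of this kind averages densities, not log-densities, so in practice it is computed with a log-sum-exp for numerical stability. The sketch below assumes that one natural-log density has already been computed for each of the dequantization noise samples; the function name and arguments are illustrative.

```python
import math

def iw_bpd(log_probs, dim):
    """Importance-weighted bits per dimension:
    -(1/dim) * log2( mean_k exp(log_probs[k]) ), computed via log-sum-exp."""
    k = len(log_probs)
    m = max(log_probs)  # subtract the maximum before exponentiating
    log_mean = m + math.log(sum(math.exp(lp - m) for lp in log_probs)) - math.log(k)
    return -log_mean / (dim * math.log(2))

# With a single sample this reduces to the usual -log p / (dim * log 2).
single = iw_bpd([-4 * math.log(2) * 3], dim=4)

# By Jensen's inequality the estimate is never larger than the average of the
# per-sample values, which is why it tends to decrease as more samples are used.
pair = iw_bpd([-10.0, -12.0], dim=2)
pair_avg = 0.5 * (iw_bpd([-10.0], dim=2) + iw_bpd([-12.0], dim=2))
```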

When computing FID/Inception scores for CIFAR10, ImageNet-32/64 we use the TensorFlow GAN library 2. To remain comparable to 26 for ImageNet-128 we use the evaluation script they include in their publicly available code repository 3.

Appendix F Additional tables and figures

| Model | CIFAR-10 $K$=1 | CIFAR-10 $K$=20 | CIFAR-10 $K$=50 | ImageNet 32×32 $K$=1 | ImageNet 32×32 $K$=5 | ImageNet 32×32 $K$=15 | ImageNet 64×64 $K$=1 | ImageNet 64×64 $K$=5 | ImageNet 64×64 $K$=10 |
|---|---|---|---|---|---|---|---|---|---|
| Ablation | | | | | | | | | |
| DDPM | 3.24 | 3.14 | 3.12 | 3.62 | 3.57 | 3.54 | 3.36 | 3.33 | 3.32 |
| Score Matching | 3.28 | 3.18 | 3.16 | 3.65 | 3.59 | 3.57 | 3.43 | 3.41 | 3.40 |
| ScoreFlow | 3.21 | 3.11 | 3.09 | 3.63 | 3.57 | 3.55 | 3.39 | 3.37 | 3.36 |
| Ours | | | | | | | | | |
| FM w/ Diffusion | 3.23 | 3.13 | 3.10 | 3.64 | 3.58 | 3.56 | 3.37 | 3.34 | 3.33 |
| FM w/ OT | 3.11 | 3.01 | 2.99 | 3.62 | 3.56 | 3.53 | 3.35 | 3.33 | 3.31 |

Table 4: Negative log-likelihood (in bits per dimension) on the test set with different values of $K$ using uniform dequantization.

Figure 10: Function evaluations for sampling during training, for models trained on CIFAR-10 using the dopri5 solver with tolerance 1e-5.

Figure 11: Non-curated unconditional ImageNet-32 generated images of a CNF trained with FM-OT.

Figure 12: Non-curated unconditional ImageNet-64 generated images of a CNF trained with FM-OT.

Figure 13: Non-curated unconditional ImageNet-128 generated images of a CNF trained with FM-OT.

Figure 14: Conditional generation 64×64 → 256×256. Flow Matching OT upsampled images from validation set.

Figure 15: Conditional generation 64×64 → 256×256. Flow Matching OT upsampled images from validation set.


Footnotes

1. Aditya Ramesh, Prafulla Dhariwal, Alex Nichol, Casey Chu, and Mark Chen. Hierarchical text-conditional image generation with clip latents. arXiv preprint arXiv:2204.06125, 2022.
2. Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 10684–10695, 2022.
3. Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. Advances in Neural Information Processing Systems, 33:6840–6851, 2020.
4. Yang Song, Jascha Sohl-Dickstein, Diederik P Kingma, Abhishek Kumar, Stefano Ermon, and Ben Poole. Score-based generative modeling through stochastic differential equations. arXiv preprint arXiv:2011.13456, 2020b.
5. Jiaming Song, Chenlin Meng, and Stefano Ermon. Denoising diffusion implicit models. arXiv preprint arXiv:2010.02502, 2020a.
6. Qinsheng Zhang and Yongxin Chen. Fast sampling of diffusion models with exponential integrator. arXiv preprint arXiv:2204.13902, 2022.
7. Ricky T. Q. Chen, Yulia Rubanova, Jesse Bettencourt, and David K Duvenaud. Neural ordinary differential equations. Advances in neural information processing systems, 31, 2018.
8. Yang Song, Conor Durkan, Iain Murray, and Stefano Ermon. Maximum likelihood training of score-based diffusion models. In Thirty-Fifth Conference on Neural Information Processing Systems, 2021.
9. Pascal Vincent. A connection between score matching and denoising autoencoders. Neural computation, 23(7):1661–1674, 2011.
10. Will Grathwohl, Ricky T. Q. Chen, Jesse Bettencourt, Ilya Sutskever, and David Duvenaud. Ffjord: Free-form continuous dynamics for scalable reversible generative models, 2018. URL https://arxiv.org/abs/1810.01367.
11. Noam Rozen, Aditya Grover, Maximilian Nickel, and Yaron Lipman. Moser flow: Divergence-based generative modeling on manifolds. In A. Beygelzimer, Y. Dauphin, P. Liang, and J. Wortman Vaughan (eds.), Advances in Neural Information Processing Systems, 2021. URL https://openreview.net/forum?id=qGvMv3undNJ.
12. Heli Ben-Hamu, Samuel Cohen, Joey Bose, Brandon Amos, Aditya Grover, Maximilian Nickel, Ricky T. Q. Chen, and Yaron Lipman. Matching normalizing flows and probability paths on manifolds. arXiv preprint arXiv:2207.04711, 2022.
13. Robert J McCann. A convexity principle for interacting gases. Advances in mathematics, 128(1):153–179, 1997.
14. Stefano Peluchetti. Non-denoising forward-time diffusions. 2021.
15. Jascha Sohl-Dickstein, Eric Weiss, Niru Maheswaranathan, and Surya Ganguli. Deep unsupervised learning using nonequilibrium thermodynamics. In International Conference on Machine Learning, pp. 2256–2265. PMLR, 2015.
16. Ivan Kobyzev, Simon JD Prince, and Marcus A Brubaker. Normalizing flows: An introduction and review of current methods. IEEE transactions on pattern analysis and machine intelligence, 43(11):3964–3979, 2020.
17. George Papamakarios, Eric T Nalisnick, Danilo Jimenez Rezende, Shakir Mohamed, and Balaji Lakshminarayanan. Normalizing flows for probabilistic modeling and inference. J. Mach. Learn. Res., 22(57):1–64, 2021.
18. Emilien Dupont, Arnaud Doucet, and Yee Whye Teh. Augmented neural odes. In H. Wallach, H. Larochelle, A. Beygelzimer, F. d'Alché-Buc, E. Fox, and R. Garnett (eds.), Advances in Neural Information Processing Systems, volume 32. Curran Associates, Inc., 2019. URL https://proceedings.neurips.cc/paper/2019/file/21be9a4bd4f81549a9d1d241981cec3c-Paper.pdf.
19. Liu Yang and George E. Karniadakis. Potential flow generator with optimal transport regularity for generative models. CoRR, abs/1908.11462, 2019. URL http://arxiv.org/abs/1908.11462.
20. Chris Finlay, Jörn-Henrik Jacobsen, Levon Nurbekyan, and Adam M. Oberman. How to train your neural ode: the world of jacobian and kinetic regularization. In ICML, pp. 3154–3164, 2020. URL http://proceedings.mlr.press/v119/finlay20a.html.
21. Derek Onken, Samy Wu Fung, Xingjian Li, and Lars Ruthotto. Ot-flow: Fast and accurate continuous normalizing flows via optimal transport. Proceedings of the AAAI Conference on Artificial Intelligence, 35(10):9223–9232, May 2021. URL https://ojs.aaai.org/index.php/AAAI/article/view/17113.
22. Alexander Tong, Jessie Huang, Guy Wolf, David Van Dijk, and Smita Krishnaswamy. Trajectorynet: A dynamic optimal transport network for modeling cellular dynamics. In International conference on machine learning, pp. 9526–9536. PMLR, 2020.
23. Jacob Kelly, Jesse Bettencourt, Matthew J Johnson, and David K Duvenaud. Learning differential equations that are easy to solve. Advances in Neural Information Processing Systems, 33:4370–4380, 2020.
24. Shian Du, Yihong Luo, Wei Chen, Jian Xu, and Delu Zeng. To-flow: Efficient continuous normalizing flows with temporal optimization adjoint with moving speed, 2022. URL https://arxiv.org/abs/2203.10335.
25. Yang Song and Stefano Ermon. Generative modeling by estimating gradients of the data distribution. In H. Wallach, H. Larochelle, A. Beygelzimer, F. d'Alché-Buc, E. Fox, and R. Garnett (eds.), Advances in Neural Information Processing Systems, volume 32. Curran Associates, Inc., 2019. URL https://proceedings.neurips.cc/paper/2019/file/3001ef257407d5a371a96dcd947c7d93-Paper.pdf.
26. Prafulla Dhariwal and Alexander Quinn Nichol. Diffusion models beat GANs on image synthesis. In A. Beygelzimer, Y. Dauphin, P. Liang, and J. Wortman Vaughan (eds.), Advances in Neural Information Processing Systems, 2021. URL https://openreview.net/forum?id=AAWuCvzaVt.
27. Alexander Quinn Nichol and Prafulla Dhariwal. Improved denoising diffusion probabilistic models. In International Conference on Machine Learning, pp. 8162–8171. PMLR, 2021.
28. Diederik P Kingma, Tim Salimans, Ben Poole, and Jonathan Ho. Variational diffusion models. In A. Beygelzimer, Y. Dauphin, P. Liang, and J. Wortman Vaughan (eds.), Advances in Neural Information Processing Systems, 2021. URL https://openreview.net/forum?id=2LdBqxc1Yv.
29. Valentin De Bortoli, James Thornton, Jeremy Heng, and Arnaud Doucet. Diffusion schrödinger bridge with applications to score-based generative modeling. (arXiv:2106.01357), Dec 2021. doi: 10.48550/arXiv.2106.01357. URL http://arxiv.org/abs/2106.01357. arXiv:2106.01357 [cs, math, stat].
30. Gefei Wang, Yuling Jiao, Qian Xu, Yang Wang, and Can Yang. Deep generative learning via schrödinger bridge. (arXiv:2106.10410), Jul 2021. doi: 10.48550/arXiv.2106.10410. URL http://arxiv.org/abs/2106.10410. arXiv:2106.10410 [cs].
31. Dimitra Maoutsa, Sebastian Reich, and Manfred Opper. Interacting particle solutions of fokker–planck equations through gradient–log–density estimation. Entropy, 22(8):802, 2020b.
32. Xingchao Liu, Chengyue Gong, and Qiang Liu. Flow straight and fast: Learning to generate and transfer data with rectified flow. arXiv preprint arXiv:2209.03003, 2022.
33. Michael S Albergo and Eric Vanden-Eijnden. Building normalizing flows with stochastic interpolants. arXiv preprint arXiv:2209.15571, 2022.
34. Kirill Neklyudov, Daniel Severo, and Alireza Makhzani. Action matching: A variational method for learning stochastic dynamics from samples, 2023. URL https://openreview.net/forum?id=T6HPzkhaKeS.
35. Alex Krizhevsky, Geoffrey Hinton, et al. Learning multiple layers of features from tiny images. 2009.
36. Patryk Chrabaszcz, Ilya Loshchilov, and Frank Hutter. A downsampled variant of imagenet as an alternative to the cifar datasets. arXiv preprint arXiv:1707.08819, 2017.
37. Jia Deng, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. Imagenet: A large-scale hierarchical image database. In 2009 IEEE Conference on Computer Vision and Pattern Recognition, pp. 248–255, 2009. doi: 10.1109/CVPR.2009.5206848.
38. John R Dormand and Peter J Prince. A family of embedded runge-kutta formulae. Journal of computational and applied mathematics, 6(1):19–26, 1980.
39. Martin Heusel, Hubert Ramsauer, Thomas Unterthiner, Bernhard Nessler, and Sepp Hochreiter. Gans trained by a two time-scale update rule converge to a local nash equilibrium. Advances in neural information processing systems, 30, 2017.
40. Arantxa Casanova, Marlene Careil, Jakob Verbeek, Michal Drozdzal, and Adriana Romero Soriano. Instance-conditioned gan. Advances in Neural Information Processing Systems, 34:27517–27529, 2021.
41. Peter Eris Kloeden, Eckhard Platen, and Henri Schurz. Numerical solution of SDE through computer experiments. Springer Science & Business Media, 2012.
42. Chitwan Saharia, Jonathan Ho, William Chan, Tim Salimans, David J Fleet, and Mohammad Norouzi. Image super-resolution via iterative refinement. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2022.
43. Cédric Villani. Optimal transport: old and new, volume 338. Springer, 2009.
44. Dimitra Maoutsa, Sebastian Reich, and Manfred Opper. Interacting particle solutions of fokker–planck equations through gradient–log–density estimation. Entropy, 22(8):802, jul 2020a. doi: 10.3390/e22080802. URL https://doi.org/10.3390%2Fe22080802.
45. Ricky T. Q. Chen. torchdiffeq, 2018. URL https://github.com/rtqichen/torchdiffeq.